Unified AI ecosystems simplify the way businesses manage artificial intelligence by bringing multiple tools, models, and workflows into one platform. This approach reduces costs, improves security, and eliminates inefficiencies caused by disconnected tools. The AI orchestration market is growing fast, expected to reach $11.47 billion by 2025, yet 95% of AI projects fail due to poor integration. Platforms like Prompts.ai, Databricks, Microsoft Azure AI, Alphabet Vertex AI, Amazon Bedrock, and IBM Watsonx address these challenges by unifying AI workflows, optimizing costs, and enhancing governance.

| Platform | Models Supported | Cost Management Features | Governance & Compliance Tools | Scalability Features |
|---|---|---|---|---|
| Prompts.ai | 35+ LLMs | TOKN credits, pay-as-you-go | Audit trails, centralized oversight | Unified model access, fast deployment |
| Databricks | Leading LLMs + open-source | FinOps, serverless compute | Unity Catalog, PII classification | 99.95% uptime, scalable pipelines |
| Microsoft Azure AI | 11,000+ models | PTUs, Batch API, Spot VMs | Purview, 100+ certifications | Auto-scaling, global deployment |
| Alphabet Vertex AI | 200+ models | Flexible pricing, serverless training | Model Armor, IAM controls | Managed pipelines, feature store |
| Amazon Bedrock | 100+ models | Serverless notebooks, Spot pricing | Guardrails, SageMaker Catalog | Elastic infrastructure, visual ETL |
| IBM Watsonx | Granite + BYO models | Hybrid cloud scaling, resource automation | Factsheets, Guardium AI Security | MLOps pipelines, hybrid environments |

Unified AI ecosystems are no longer optional - they’re critical for organizations aiming to stay competitive. Choose a platform that aligns with your goals, simplifies integration, and delivers measurable results.

Top 6 Unified AI Platforms Comparison: Features, Models, and Capabilities

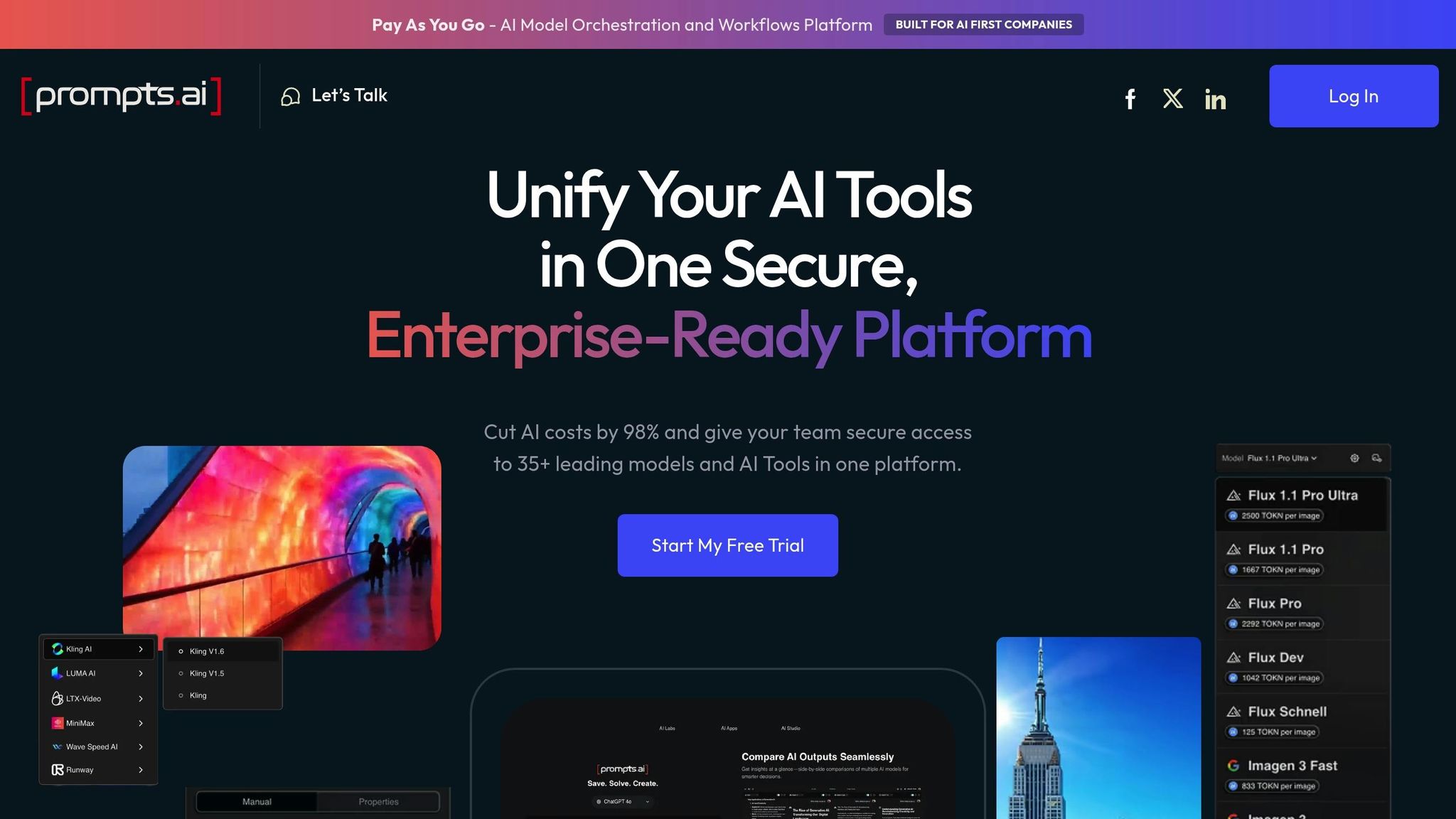

Prompts.ai brings together over 35 top language models - including GPT-5, Claude, LLaMA, Gemini, Grok-4, Flux Pro, and Kling - into a single, unified platform. This eliminates the need for juggling multiple subscriptions or dealing with complicated logins. By centralizing AI tools, it simplifies management and reduces the hassle of maintaining separate systems.

The platform also offers a side-by-side comparison feature, enabling teams to test and evaluate multiple models simultaneously. This makes it easier to identify the best-performing model for a specific task in real time, removing the guesswork from AI selection and ensuring the right tool is matched to the right workflow.

Prompts.ai uses a pay-as-you-go TOKN credit system, which charges based on actual token usage. This approach can reduce software expenses by as much as 98% compared to managing individual subscriptions for multiple platforms. The built-in FinOps layer provides detailed tracking of token usage across all models and teams, offering real-time insights into spending. By tying costs directly to business outcomes, organizations can better manage budgets and maximize their return on investment.
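To make the usage-based billing idea concrete, here is a minimal sketch of per-team cost tracking from raw token counts. The model names, prices, and the `UsageEvent` structure are hypothetical placeholders for illustration, not Prompts.ai's actual TOKN rates or API.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical per-1M-token prices in USD; not real Prompts.ai or vendor rates.
PRICE_PER_MILLION = {"model-a": 2.00, "model-b": 0.50}


@dataclass
class UsageEvent:
    team: str
    model: str
    tokens: int


def cost_by_team(events):
    """Sum usage-based cost per team from raw token counts."""
    totals = defaultdict(float)
    for event in events:
        rate = PRICE_PER_MILLION[event.model]
        totals[event.team] += event.tokens / 1_000_000 * rate
    return dict(totals)


events = [
    UsageEvent("marketing", "model-a", 500_000),
    UsageEvent("marketing", "model-b", 2_000_000),
    UsageEvent("support", "model-b", 1_000_000),
]
report = cost_by_team(events)
print(report)
```

Grouping by team (rather than by subscription) is what lets spending be tied back to a specific workflow or business outcome.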

In addition to cost savings and simplified integration, Prompts.ai enhances governance and security for enterprises. The platform includes robust governance tools and detailed audit trails for every workflow, making it ideal for industries with strict regulatory requirements, such as finance and healthcare. It provides full visibility into AI usage across teams and models, ensuring centralized oversight. This not only helps maintain compliance but also prevents security gaps that often arise when AI tools are managed in isolation - all without adding extra administrative complexity.

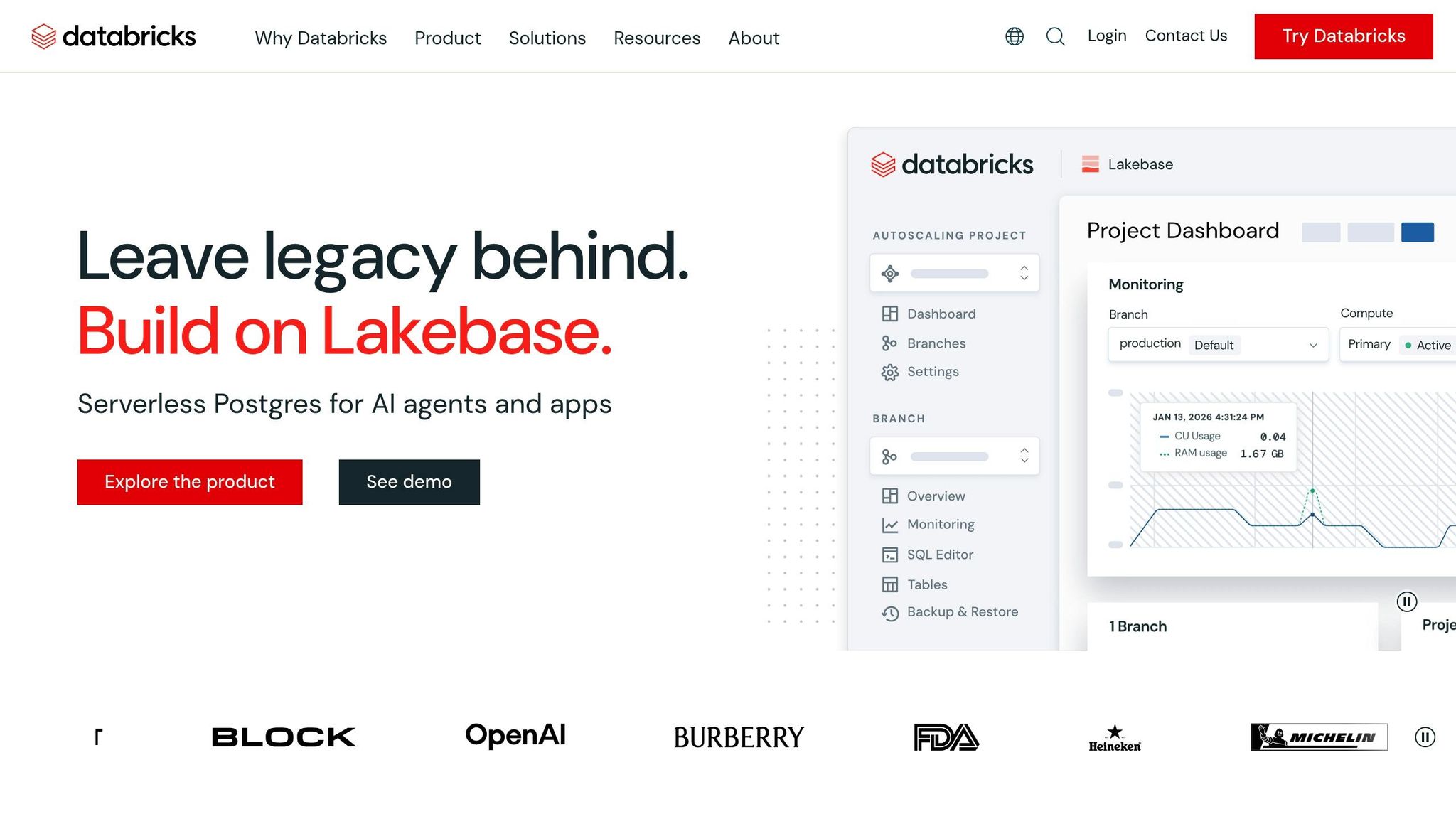

Databricks' Mosaic AI brings together every stage of the AI lifecycle, from preparing data to monitoring models in production. The platform offers Foundation Model APIs that provide secure, scalable access to leading LLMs like Meta Llama, Anthropic Claude, and OpenAI GPT.

One standout feature is its AI Functions, which embed models directly into SQL queries, notebooks, and pipelines. These include task-specific functions like ai_summarize, ai_translate, and ai_extract, alongside the more flexible ai_query, which works with any hosted model. Databricks also integrates with widely-used libraries such as Hugging Face Transformers, LangChain, and PyTorch, simplifying the use of open-source models. To ensure consistency and control, Unity Catalog acts as a unified governance layer, offering lineage tracking and detailed access controls for models, vector indexes, and functions. This integration framework supports both operational efficiency and compliance needs.
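As a rough illustration of how AI Functions sit inside ordinary SQL, the sketch below builds a query string in Python. The table and column names are hypothetical, and the argument order shown for `ai_summarize` and `ai_translate` reflects the Databricks SQL documentation as best understood here, so verify it against the current reference before use. The helper only produces SQL text; running it requires a Databricks SQL warehouse or notebook.

```python
def build_review_digest_sql(table: str, text_column: str, max_words: int = 30) -> str:
    """Return a SQL statement that summarizes and translates a text column.

    `table` and `text_column` are assumed to be trusted identifiers;
    this sketch does no escaping.
    """
    return (
        f"SELECT {text_column},\n"
        f"       ai_summarize({text_column}, {max_words}) AS summary,\n"
        f"       ai_translate({text_column}, 'es') AS spanish_text\n"
        f"FROM {table}"
    )


# Hypothetical table and column names, for illustration only.
sql = build_review_digest_sql("main.demo.product_reviews", "review_text")
print(sql)
```

Because the model call is just another SQL expression, it inherits the same access controls and lineage tracking as the table it reads from.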

Databricks goes beyond integration by prioritizing governance across the AI lifecycle. PepsiCo, for example, uses Unity Catalog to securely manage over 6 petabytes of data and 1,500 users worldwide. The system’s centralized access control is built on ANSI SQL standards, enabling granular protections like row-level filters and column masks to safeguard sensitive data. Features such as automated PII classification and comprehensive audit logs simplify adherence to regulations.
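Row-level filters and column masks are easier to picture with a small example. The following is a conceptual sketch in plain Python, not Unity Catalog syntax; the `read_customers` helper, the user fields, and the `pii_readers` group are all assumptions made for illustration.

```python
def read_customers(rows, user):
    """Apply a row filter (by region) and a column mask (email) for one user."""
    visible = []
    for row in rows:
        # Row-level filter: non-admins only see rows for their own region.
        if not user["is_admin"] and row["region"] != user["region"]:
            continue
        out = dict(row)
        # Column mask: hide the email value unless the user may read PII.
        if "pii_readers" not in user["groups"]:
            out["email"] = "***"
        visible.append(out)
    return visible


rows = [
    {"id": 1, "region": "EU", "email": "a@example.com"},
    {"id": 2, "region": "US", "email": "b@example.com"},
]
analyst = {"is_admin": False, "region": "EU", "groups": []}
result = read_customers(rows, analyst)
print(result)
```

In a governed catalog these rules are attached to the table itself, so every query path sees the same filtered and masked view.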

"Unity Catalog has enabled us to centralize our data governance, providing robust security features and streamlined compliance processes... which is crucial for adhering to stringent data protection laws, including GDPR." - Michael Ewins, Director of Engineering

The Mosaic AI Gateway extends these governance capabilities to generative AI, managing model access while filtering PII and enforcing safety protocols across LLMs and AI agents. Erste Group’s Sr. Solutions Manager, Jürgen Neulinger, employs fallback mechanisms that redirect traffic during quota limits or model issues, ensuring uninterrupted operations.
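The fallback behavior described above can be sketched as a simple ordered retry across endpoints. This is a generic pattern, not the Mosaic AI Gateway's configuration or API; `QuotaExceeded`, `primary`, and `secondary` are hypothetical stand-ins for real model clients.

```python
class QuotaExceeded(Exception):
    """Raised by a (hypothetical) model client when its quota is exhausted."""


def call_with_fallback(prompt, endpoints):
    """Try each (name, callable) endpoint in order; return the first success."""
    errors = []
    for name, call in endpoints:
        try:
            return name, call(prompt)
        except QuotaExceeded as exc:
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all endpoints failed: " + "; ".join(errors))


def primary(prompt):
    # Simulates a provider that is currently rate limited.
    raise QuotaExceeded("rate limit reached")


def secondary(prompt):
    return f"echo: {prompt}"


served_by, answer = call_with_fallback(
    "hello", [("primary", primary), ("secondary", secondary)]
)
print(served_by, answer)
```

Returning the name of the endpoint that served the request keeps the fallback visible in logs and audit trails.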

Databricks enhances workflow efficiency at scale while maintaining rigorous governance. Lakeflow Jobs offers unified orchestration for data, analytics, and AI workloads, boasting 99.95% uptime and supporting millions of workflows daily. Wood Mackenzie, for instance, achieved an 80-90% reduction in processing time by standardizing ETL pipeline orchestration with Databricks, while managing 12 billion data points weekly. The platform’s serverless compute option delivers scalable performance with pay-as-you-go pricing, eliminating setup requirements.

For distributed training, Databricks supports tools like Ray, TorchDistributor, and DeepSpeed. Companies are seeing tangible results: Block generated $10 million in productivity gains through Mosaic AI automation, and Condé Nast reduced costs by $6 million. Additionally, its intelligent repair system, which retries only failed nodes, minimizes both time and expense.
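The repair idea, re-running only what failed instead of the whole pipeline, can be illustrated with a short sketch. It assumes a linear pipeline and a status dictionary from the previous run; the `repair_run` helper is hypothetical and is not the Lakeflow Jobs API.

```python
def repair_run(pipeline, previous_status):
    """Re-run only the tasks that did not succeed last time.

    pipeline: ordered list of (task_name, callable) pairs.
    previous_status: dict mapping task_name to "SUCCESS" or "FAILED".
    Returns the updated status and the list of tasks actually executed.
    """
    status = dict(previous_status)
    executed = []
    for name, task in pipeline:
        if status.get(name) == "SUCCESS":
            continue  # keep the earlier result and skip the work
        try:
            task()
            status[name] = "SUCCESS"
        except Exception:
            status[name] = "FAILED"
        executed.append(name)
    return status, executed


pipeline = [
    ("extract", lambda: None),
    ("transform", lambda: None),
    ("load", lambda: None),
]
previous = {"extract": "SUCCESS", "transform": "FAILED", "load": "FAILED"}
status, executed = repair_run(pipeline, previous)
print(executed)
```

Here the expensive `extract` step is never repeated, which is where the time and cost savings come from.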

Microsoft Foundry, previously known as Azure AI Studio, consolidates AI development into one cohesive platform. It provides access to an impressive library of over 11,000 models, including foundational, open-source, reasoning, and multimodal options. Some standout models include GPT-5, Claude Opus 4.6, and DeepSeek-R1. The platform also utilizes Foundry IQ, a contextual RAG engine that processes more than 3 billion daily search queries, grounding AI responses in enterprise-specific data.

With support for MCP and A2A protocols, the platform ensures secure AI interactions. This vast model catalog and interoperability eliminate the hassle of juggling multiple vendor relationships or integration points, streamlining operations and reducing complexity. The secure integration framework also lays the groundwork for cost-effective deployments.

Azure AI provides flexible deployment models tailored to different budgets, ensuring cost efficiency across workloads.

For instance, cached input tokens for GPT-5.2 Global cost just $0.18 per 1M, a sharp reduction from the standard rate of $1.75, resulting in a 90% savings.
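The savings figure can be checked with a few lines of arithmetic. The two rates come from the paragraph above; the 60% cache hit ratio in the blended-cost example is an assumed value for illustration only.

```python
STANDARD_RATE = 1.75  # USD per 1M input tokens (from the text above)
CACHED_RATE = 0.18    # USD per 1M cached input tokens (from the text above)


def percent_savings(standard_rate, discounted_rate):
    """Percentage saved when paying the discounted rate instead of the standard one."""
    return (standard_rate - discounted_rate) / standard_rate * 100


def blended_rate(cache_hit_ratio):
    """Effective rate per 1M input tokens for a given share of cached tokens."""
    return CACHED_RATE * cache_hit_ratio + STANDARD_RATE * (1 - cache_hit_ratio)


savings = percent_savings(STANDARD_RATE, CACHED_RATE)
effective = blended_rate(0.6)  # assumed 60% of input tokens served from cache
print(f"{savings:.1f}% savings, ${effective:.3f} per 1M at 60% cache hits")
```

The exact saving works out to roughly 89.7%, which the text rounds to 90%. The saving that shows up on a bill depends on how much of the input is actually served from cache.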

Organizations running interrupt-tolerant AI tasks on Spot VMs can lower compute costs by as much as 90% compared to on-demand pricing. To further optimize expenses, the platform automatically removes inactive fine-tuned model deployments after 15 consecutive days of inactivity, eliminating unnecessary hosting charges. Pre-purchasing Agent Commit Units (ACUs) unlocks additional tiered discounts of 5%, 10%, or 15%, depending on the commitment level.
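Teams that want warning before an idle fine-tuned deployment is removed could track last-use dates themselves. This is a minimal sketch of that check using the 15-day window from the text; the deployment names and the `idle_deployments` helper are hypothetical and do not call any Azure API.

```python
from datetime import date, timedelta

IDLE_LIMIT = timedelta(days=15)  # inactivity window stated in the text above


def idle_deployments(last_used_by_name, today):
    """Return names of deployments unused for at least IDLE_LIMIT, sorted."""
    return sorted(
        name
        for name, last_used in last_used_by_name.items()
        if today - last_used >= IDLE_LIMIT
    )


# Hypothetical deployments and last-use dates.
deployments = {
    "ft-support-bot": date(2025, 1, 1),
    "ft-summarizer": date(2025, 1, 20),
}
flagged = idle_deployments(deployments, today=date(2025, 1, 25))
print(flagged)
```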

Beyond cost efficiency, Microsoft prioritizes governance and compliance, holding over 100 certifications, with more than 50 tailored to specific regions and countries. To enhance security, the company employs 34,000 full-time engineers focused on safeguarding its systems.

The Foundry Control Plane offers a centralized interface for managing and enforcing governance across AI agents, models, and tools. Integration with Microsoft Purview simplifies compliance with complex regulations like the EU AI Act, GDPR, and HIPAA by translating them into actionable technical controls.

To ensure data sovereignty, Data Zone deployments restrict processing to one of 27+ defined geographic locations. The platform also features an AI Red Teaming Agent, which automates vulnerability assessments and regression testing prior to deployment. Azure OpenAI Service further guarantees reliability with a minimum availability SLA of 99.9%.

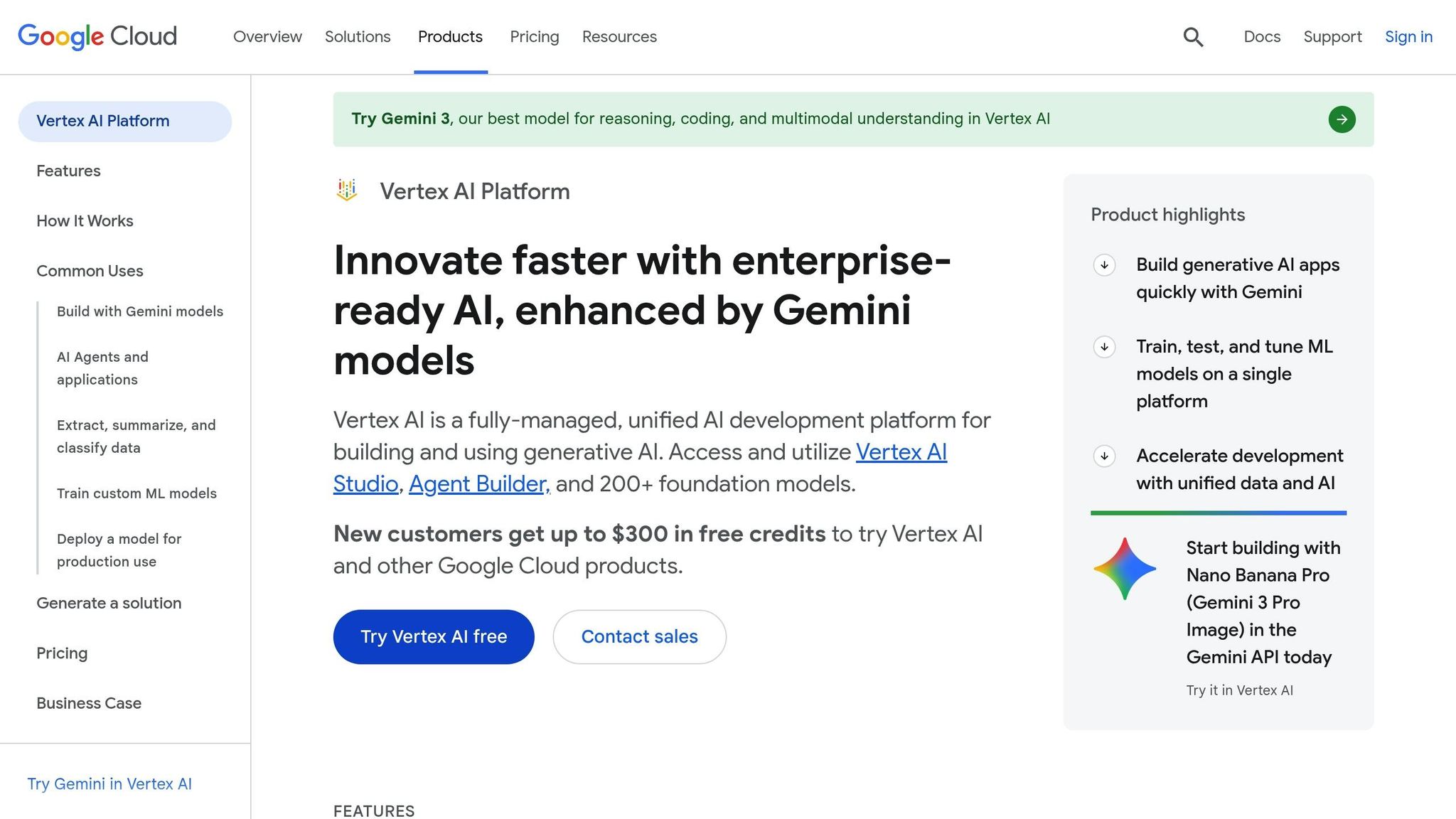

Alphabet Vertex AI continues to push the boundaries of AI ecosystems with seamless model integration and heightened security. Through its Model Garden, the platform offers access to over 200 enterprise-ready models, including Google's Gemini series, Anthropic's Claude, and open-source options like Llama and Gemma. With a context window of up to 2 million tokens on supported Gemini models, Vertex AI is well-suited for handling complex documents and extended conversational tasks.

The platform supports the Agent2Agent (A2A) protocol, an open standard backed by over 50 partners, enabling secure collaboration between agents built on frameworks like ADK, LangGraph, or CrewAI. Additionally, the Model Context Protocol (MCP) connects agents to a wide array of external data sources through a growing library of compatible connectors. By combining these features with cost-efficient operations, Vertex AI provides a powerful and scalable solution for diverse AI needs.

Vertex AI offers flexible options to help teams manage costs effectively. Serverless training is ideal for quick experiments, while dedicated training clusters on reserved capacity deliver consistent performance for teams with steady, high-demand workloads.

The pricing model is designed to be accessible for various team sizes:

These rates make it feasible for teams to automate machine learning workflows without overspending.

Vertex AI emphasizes security and compliance at every level. Its Model Armor feature provides runtime protection against risks like prompt injection and data leaks, while each agent is assigned a unique Agent Identity through IAM, ensuring clear audit trails and precise security controls. Importantly, customer data, model weights, and prompts are never used to train Google's foundation models. When teams fine-tune a model, the original remains intact, and custom versions stay securely within the customer’s environment.

Gemini Enterprise serves as a centralized marketplace for managing and governing custom-built agents. By integrating with the Security Command Center, it adds Agent Engine Threat Detection, enabling organizations to detect and respond to potential threats targeting deployed agents. With tools like Google Cloud Trace, Cloud Monitoring, and Cloud Logging, the platform ensures comprehensive tracking and auditing of agent behavior.

"The accuracy of Google Cloud's generative AI solution and practicality of the Vertex AI Platform gives us the confidence we needed to implement this cutting-edge technology into the heart of our business and achieve our long-term goal of a zero-minute response time." - Abdol Moabery, CEO, GA Telesis

Vertex AI Pipelines streamline machine learning workflows by automating and orchestrating processes using Kubeflow or TFX frameworks. Meanwhile, the Agent Engine takes care of infrastructure management, scaling, security, and monitoring for AI agents in production. For large-scale Python and ML workloads, the platform integrates Ray on Vertex AI, offering a managed framework for distributed computing and parallel processing.

Grounding capabilities powered by Google Search - responsible for 99% of the world's search data - help reduce inaccuracies and improve response reliability. The Feature Store acts as a centralized hub for sharing and reusing machine learning features, accelerating the development and deployment of new applications across teams. This combination of scalability and efficiency positions Vertex AI as a key player in simplifying and optimizing enterprise AI workflows.

Amazon has developed a solution designed to simplify AI integration while improving governance and scalability.

The Amazon SageMaker Unified Studio brings together data engineering, analytics, and AI tools into a single interface. It integrates services like Amazon Bedrock, AWS Glue, and Amazon Athena to create a governed workspace. Its Generative AI playground enables teams to compare up to three leading foundation models side-by-side, streamlining decision-making and experimentation.

Amazon Bedrock Flows introduces a visual builder that connects foundation models, prompts, and AWS services like Lambda, enabling seamless workflow creation with minimal coding. This system supports workflows for both structured and unstructured data. Pre-configured Blueprints further simplify the setup of Bedrock Agents, Knowledge Bases, Guardrails, and Flows, allowing teams to quickly deploy resources.

"Now, with Amazon SageMaker Unified Studio, we can deploy a single data worker tool for data engineers and ML scientists. We are also automating data infrastructure deployment, allowing us to simplify the process for our customers and enhance their experience." - Zeeshan Saeed, Chief Technology and Strategy Officer, Adastra

Amazon SageMaker Unified Studio emphasizes strict governance and compliance measures, ensuring secure and controlled access to resources. The platform enforces project-based isolation, restricting datasets and resources to authorized users. Specialized IAM roles, such as the Model Consumption Role for playgrounds and the Model Provisioning Role for projects, allow organizations to precisely manage who can access specific models.

Amazon Bedrock Guardrails play a crucial role in maintaining application integrity by blocking harmful content, filtering hallucinations, and identifying sensitive PII. The SageMaker Catalog enhances transparency by tracking the complete lineage of data and AI assets, making it easier to meet regulatory requirements. Tools like SageMaker Clarify monitor models for bias and accuracy, ensuring they align with responsible AI principles. For example, NatWest Group reported a 50% reduction in the time it takes for data users to access new tools, highlighting the platform's efficiency in governance.
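To show what PII detection and masking involves at its simplest, here is a pattern-based sketch. It is not the Bedrock Guardrails API and is far less thorough than a managed service; the two regular expressions and the `mask_pii` helper are assumptions chosen for illustration.

```python
import re

# Deliberately simple patterns; real PII detection needs much broader coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def mask_pii(text):
    """Replace matched PII with a label and report which types were found."""
    found = []
    for label, pattern in PII_PATTERNS.items():
        if pattern.search(text):
            found.append(label)
            text = pattern.sub(f"[{label}]", text)
    return text, found


masked, labels = mask_pii("Contact jane@example.com, SSN 123-45-6789.")
print(masked, labels)
```

Reporting the detected types alongside the masked text gives downstream logging something to audit without storing the sensitive values themselves.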

SageMaker Unified Studio also focuses on scalability and streamlined workflows. Serverless notebooks eliminate the need for manual infrastructure management by automatically scaling compute resources. Integration with Athena Spark enables large-scale data processing in an interactive environment without requiring users to manage clusters. Meanwhile, Amazon Q Developer translates natural language prompts into code and SQL statements, speeding up development for users of varying skill levels.

In January 2025, NTT DATA adopted Amazon SageMaker Unified Studio to unify data management across warehouses and lakes. By leveraging the platform’s lakehouse architecture and analytics tools, the company reduced time-to-value for customer data projects by 40%. The visual ETL tool generates Spark code from intuitive flows or natural language prompts, allowing teams to build scalable data integration processes without needing advanced Apache Spark expertise.

"Amazon SageMaker Unified Studio reduces the time-to-value for our customers' data projects by up to 40%, helping us with our mission to accelerate our customers' digital transformation journey." - Akihiro Suzue, Head of Solutions Sector, NTT DATA

IBM Watsonx takes a major step forward in creating a unified environment for AI development and deployment. By integrating watsonx.ai, watsonx.data, and watsonx.governance, it ensures data engineers and AI developers can collaborate on shared assets without switching platforms. This seamless architecture bridges workflow gaps, allowing for smoother project execution and deployment.

The platform's AI Gateway offers a single access point to IBM Granite models, third-party models from OpenAI, Anthropic, Google (Gemini), Mistral, and Meta (Llama), and open-source models from Hugging Face. This "bring your own model" approach gives organizations the flexibility to tailor foundation models to their specific needs. Additionally, watsonx Orchestrate acts as a coordination hub for AI agents, integrating with enterprise systems like Workday, SAP, Salesforce, and ServiceNow. With over 100 domain-specific agents and 400+ prebuilt tools available, businesses can skip the hassle of building custom integrations and instead focus on scaling their operations. By unifying workflows, IBM Watsonx aligns with the broader industry trend toward fully integrated AI ecosystems.

Watsonx.governance simplifies the often-complex task of model lifecycle management, whether models are hosted on-premises or in the cloud. Its Factsheets feature automatically logs key details like performance metrics, risk data, and development activities, making audit preparation effortless. Built-in compliance tools support adherence to global standards, including the EU AI Act, ISO 42001, and NIST AI RMF.

The platform also integrates with IBM Guardium AI Security to detect unauthorized "shadow AI" deployments and monitor for vulnerabilities. Real-time alerts flag issues such as bias, toxicity, and performance drift. For instance, at the US Open, Watsonx.governance increased fairness in tournament data from 71% to 82% by reducing bias. Vodafone, another user, saw a 99% improvement in turnaround time for journey testing through these governance tools.

Watsonx doesn’t just unify and govern - it also scales operations to improve efficiency. Its resource management automation and ability to scale storage and usage translate into tangible business benefits. IBM itself handled 94% of over 10 million annual HR requests through orchestrated AI agents. Similarly, Blendow Group cut document summarization and analysis time by 90%, while AddAI reduced unanswered customer service queries by 50%.

Watsonx.ai supports deployment across hybrid cloud environments, including IBM Cloud, AWS, and on-premises infrastructure, ensuring flexibility and avoiding vendor lock-in. It also streamlines the AI lifecycle, from data preparation to deployment, with MLOps pipelines and collaborative tools. Real-world results include Dun & Bradstreet reducing supplier risk evaluation time by over 10% and IBM cutting Red Hat Ansible Playbook creation time by 40%.

"The next wave of enterprise AI isn't another app - it's a workforce of AI agents woven into your business." - IBM

Four factors matter most when comparing these platforms: model support, cost management, governance tools, and scaling options. Prompts.ai stands out by offering seamless model integration, cost efficiency, and the freedom to avoid vendor lock-in. This flexibility allows teams to choose the best model for each task without compromise.

Microsoft Azure AI showcases its dominance in enterprise adoption, with Copilot active in over 1 million enterprise seats and a 33% quarter-over-quarter revenue increase in Q4 2024 for AI services. Amazon Bedrock, backed by a $4 billion investment in Anthropic's Claude models, provides access to 100+ foundation models and elastic AWS scaling. Alphabet's Vertex AI, powered by Google Cloud's infrastructure, integrates deeply across Google's ecosystem, which reported $33.1 billion in Q4 2024 revenue. Meanwhile, Databricks, with its data-centric focus, achieved $1.6 billion in annual recurring revenue by mid-2024, following its $1.3 billion acquisition of MosaicML.

In governance, IBM Watsonx leads with built-in compliance tools. Azure AI and Vertex AI offer enterprise-grade security tied to their cloud infrastructures, while Amazon Bedrock includes HIPAA compliance features. Prompts.ai enhances governance through unified workflows and audit trails across models, ensuring teams maintain control without sacrificing speed or efficiency.

Scalability is another area where these platforms excel under enterprise workloads. Azure's cloud-native auto-scaling and AWS's elastic infrastructure handle significant demands effectively. Databricks focuses on scaling data-to-AI pipelines through its lakehouse architecture. Prompts.ai simplifies scaling by providing unified access to multiple models in a single interface, allowing teams to deploy new models in minutes instead of months. According to Frost & Sullivan's 2024 report, 89% of organizations see revenue growth from platforms that efficiently integrate advanced models.

The ability to quickly move from trial to production is a critical differentiator. Platforms that offer transparent pricing, broad model access, and integrated governance tools enable organizations to prioritize results over managing complex infrastructures. This comparison highlights how each platform addresses industry challenges, helping businesses choose the best unified AI ecosystem for their needs.

Unified AI ecosystems simplify operations by bringing together scattered tools, controlling costs, and addressing security vulnerabilities. These platforms transform fragmented workflows into cohesive, real-time operations by consolidating models, data, and processes into one reliable source. This integration enhances collaboration and enables actionable insights across various functions.

Effective governance is key to scaling AI. Companies that prioritize AI governance achieve 12 times more production-ready AI projects than those that do not. Platforms like Prompts.ai, Microsoft Azure AI, and IBM Watsonx integrate compliance measures, audit trails, and identity management directly into their workflows, turning governance from a challenge into a strength. This approach helps businesses move quickly from experimentation to full-scale implementation.

To make the most of AI, start by defining clear objectives for its integration into your workflows. Evaluate current processes to identify areas where AI can improve efficiency or cut costs. Establish a governance committee with representatives from IT, legal, security, and business departments to ensure compliance with regulations. Look for platforms offering built-in connectivity and standardized frameworks - protocols like MCP (Model Context Protocol) and A2A (Agent2Agent) make system communication smoother and reduce manual integration efforts.

The ideal unified AI ecosystem should offer transparent pricing, avoid vendor lock-in, and provide access to a wide range of models. With the AI market projected to grow from $279.22 billion in 2024 to $3,497.26 billion by 2033, rapid deployment will be essential for staying competitive. Focus on impactful use cases that deliver quick, measurable results to gain organizational support and momentum. A unified AI ecosystem can help you achieve faster deployment and drive meaningful business outcomes.

A unified AI ecosystem serves as a centralized hub that brings together various tools, models, and workflows to simplify AI operations. This setup ensures smooth collaboration between different AI models, such as large language models (LLMs), enhancing automation and scalability while maintaining control and oversight. By reducing tool sprawl and making better use of resources, these ecosystems enable businesses to handle intricate AI tasks more effectively. They are particularly valuable for enterprises looking to expand AI initiatives while keeping operational costs in check.

Most enterprise AI projects stumble because of difficulties in integrating and coordinating various tools and workflows. A few key culprits include tool sprawl - where multiple, disconnected AI applications are in use - manual workflows, and the absence of a centralized orchestration platform. These problems lead to inefficiencies, fragmented data, and added complexity, making it hard for organizations to scale their AI efforts and fully realize their return on investment (ROI).

To select the best unified AI platform, prioritize options that bring together tools, models, and workflows in one centralized hub. Look for features like smooth integration, automation, governance tools, and the ability to scale. Platforms such as Prompts.ai provide access to multiple AI models, automated cost management, and real-time monitoring, making it easier to optimize workflows and cut costs. Choose a platform that matches your operational objectives and technical requirements to achieve peak efficiency.