AI workflows break complex tasks into smaller, manageable steps to produce consistent, scalable results. They save time, reduce costs, and enhance productivity: organizations using AI workflows report 60–80% faster production times and up to 5× output increases. Tools like Prompts.ai simplify workflow creation by unifying 35+ leading LLMs, offering real-time cost tracking, and providing templates for quick deployment.

Prompts.ai combines cost transparency, tool integration, and community-shared templates to help you build secure, scalable AI workflows. Start small, refine continuously, and scale confidently.

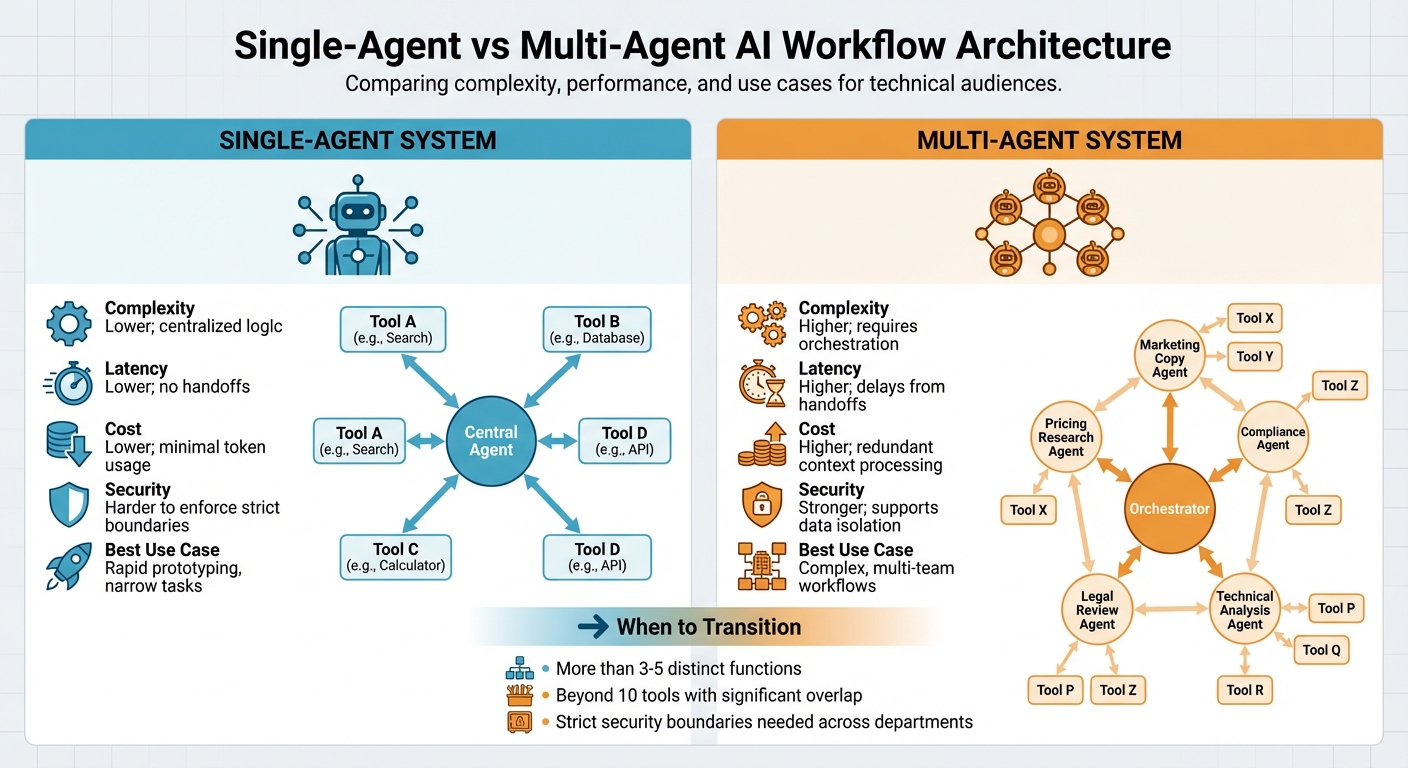

Single-Agent vs Multi-Agent AI Workflow Architecture Comparison

Before diving into code or crafting prompts, it’s critical to define what success looks like for the user. Avoid ambiguous goals like "generate a summary." Instead, aim for specific, actionable outcomes such as "help select the best vendor" or "align project priorities across teams." This focused approach ensures your workflow delivers practical value rather than just producing text.

Establish clear boundaries between what the AI handles and what remains a human responsibility. For instance, your workflow might excel at extracting data, validating formats, or identifying inconsistencies, but it shouldn’t make decisions on budgets or finalize contracts. To monitor performance, set alert thresholds on key metrics, for example when 95th percentile latency (p95) exceeds 10 seconds, the success rate drops below 95%, or token usage surpasses 150% of the baseline. Start with high-impact, low-risk tasks: those that are repetitive and time-consuming but where errors have manageable consequences. As the Oronts Engineering Team points out:

"The difference between a prototype and a production system isn't the model you use. It's everything around the model."
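The thresholds above translate directly into alert checks. Below is a minimal Python sketch, assuming you already aggregate latency, success, and token counts per monitoring window; `WindowStats`, `p95`, and `check_alerts` are hypothetical names, and the default limits simply mirror the example thresholds in the text.

```python
from dataclasses import dataclass


@dataclass
class WindowStats:
    """Aggregates for one monitoring window (e.g., the last hour of runs)."""
    latencies_s: list[float]
    successes: int
    total: int
    tokens_used: int


def p95(values: list[float]) -> float:
    """Nearest-rank 95th percentile, using integer math to avoid float rounding."""
    ordered = sorted(values)
    rank = -(-95 * len(ordered) // 100)  # ceil(0.95 * n)
    return ordered[rank - 1]


def check_alerts(
    stats: WindowStats,
    baseline_tokens: int,
    p95_limit_s: float = 10.0,
    min_success_rate: float = 0.95,
    token_ratio_limit: float = 1.5,
) -> list[str]:
    """Return the name of every threshold this window breaches."""
    alerts = []
    if stats.latencies_s and p95(stats.latencies_s) > p95_limit_s:
        alerts.append("p95_latency")
    if stats.total and stats.successes / stats.total < min_success_rate:
        alerts.append("success_rate")
    if stats.tokens_used > token_ratio_limit * baseline_tokens:
        alerts.append("token_usage")
    return alerts
```

Returning every breached threshold, rather than stopping at the first, lets one alert message show whether latency, quality, and cost degraded together.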

Once objectives are clear, the next step is selecting the right architecture for the complexity of your task.

For straightforward tasks, a single-agent architecture is often the best starting point. This setup consolidates logic, context, and tool execution into one system, making it easier to debug and maintain. Conditional prompting can be used for role emulation, allowing you to test and refine workflows before considering a multi-agent approach. Transition to a multi-agent system only when your workflow spans more than 3–5 distinct functions, involves significant tool overlap (typically beyond 10 tools), or requires strict security boundaries across departments.
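The switching criteria above can be encoded as a simple rule of thumb. In this sketch the function threshold defaults to 5, the upper end of the 3–5 range in the text (an assumption; lower it to 3 for a more conservative cutoff), and `WorkflowProfile` and `recommend_architecture` are illustrative names, not part of any library.

```python
from dataclasses import dataclass


@dataclass
class WorkflowProfile:
    distinct_functions: int
    tool_count: int
    needs_security_boundaries: bool


def recommend_architecture(
    p: WorkflowProfile, max_functions: int = 5, max_tools: int = 10
) -> tuple[str, list[str]]:
    """Return ("single-agent" | "multi-agent", reasons). Thresholds are rules of thumb."""
    reasons = []
    if p.distinct_functions > max_functions:
        reasons.append(f"{p.distinct_functions} distinct functions > {max_functions}")
    if p.tool_count > max_tools:
        reasons.append(f"{p.tool_count} tools > {max_tools}")
    if p.needs_security_boundaries:
        reasons.append("strict security boundaries required")
    # Any single trigger is enough to justify the extra orchestration cost.
    return ("multi-agent" if reasons else "single-agent", reasons)
```

Returning the reasons alongside the verdict keeps the decision auditable when the workflow later grows.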

In a multi-agent system, tasks are divided among specialized agents. For example, one agent might handle competitive pricing research, another drafts marketing copy, and a third ensures compliance. While this approach can increase latency due to handoffs and raise costs because of redundant context processing, it offers better security through data isolation and a clearer division of responsibilities. Multi-agent setups are ideal for workflows that involve multiple teams or require strong compliance measures.
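The pricing, copy, and compliance example can be sketched as a sequential pipeline in which each agent sees only the context keys it is allowed to read, which is one simple way to get the data isolation described above. The agents here are plain Python functions standing in for LLM calls, and `AgentSpec`, `orchestrate`, and the banned-phrase list are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class AgentSpec:
    name: str
    reads: frozenset[str]        # the only context keys this agent may see
    run: Callable[[dict], dict]  # stand-in for an LLM call with its own prompt


def orchestrate(agents: list[AgentSpec], context: dict) -> dict:
    """Run agents in order; each sees only its allowed keys and adds its outputs."""
    state = dict(context)
    for agent in agents:
        visible = {k: state[k] for k in agent.reads if k in state}
        state.update(agent.run(visible))
    return state


def pricing_research(ctx: dict) -> dict:
    return {"price_floor": min(ctx["competitor_prices"])}


def marketing_copy(ctx: dict) -> dict:
    return {"draft": f"Plans from ${ctx['price_floor']}/month."}


def compliance_check(ctx: dict) -> dict:
    banned = ("guaranteed", "risk-free")  # hypothetical policy list
    return {"approved": not any(word in ctx["draft"].lower() for word in banned)}


PIPELINE = [
    AgentSpec("pricing", frozenset({"competitor_prices"}), pricing_research),
    AgentSpec("copy", frozenset({"price_floor"}), marketing_copy),
    AgentSpec("compliance", frozenset({"draft"}), compliance_check),
]
```

For example, `orchestrate(PIPELINE, {"competitor_prices": [49, 39, 59], "customer_list": [...]})` produces an approved draft while no agent ever receives `customer_list`.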

| Feature | Single-Agent System | Multi-Agent System |
|---|---|---|
| Complexity | Lower; centralized logic. | Higher; requires orchestration. |
| Latency | Lower; no handoffs. | Higher; delays from handoffs. |
| Cost | Lower; minimal token usage. | Higher; redundant context processing. |
| Security | Harder to enforce strict boundaries. | Stronger; supports data isolation. |
| Best Use Case | Rapid prototyping, narrow tasks. | Complex, multi-team workflows. |

With your architecture in place, refine execution by adopting planning strategies tailored to your workflow. These strategies break complex tasks into manageable steps. Chain-of-Thought (CoT) prompts the AI to outline its reasoning step by step, making it ideal for logical, multi-step tasks that don’t require external tools. ReAct (Reason + Act) combines reasoning with task-specific actions in an iterative Thought, Action, Observation loop, which suits dynamic workflows that use tools. Self-Refine focuses on quality: the model generates output, critiques it, and iteratively improves it. A 2025 study on M1-Parallel found that running multiple plans in parallel and stopping as soon as one succeeds can make execution up to 2.2× faster.
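To make the ReAct loop concrete, here is a minimal sketch with a scripted stand-in for the model, so it runs without an API key. The `Action: tool[argument]` text format, the `lookup_price` tool, and its price data are assumptions for illustration; a real implementation would call an LLM and would more likely use the provider's native tool-calling interface.

```python
import re
from typing import Callable

PRICES = {"A1": 40, "B2": 25}  # hypothetical catalog the tool reads from

TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_price": lambda sku: str(PRICES[sku]),
}

ACTION_RE = re.compile(r"Action: (\w+)\[(.*?)\]")


def scripted_model(transcript: list[str]) -> str:
    """Stand-in for an LLM: asks for one price per step, then answers."""
    observations = [line for line in transcript if line.startswith("Observation:")]
    if len(observations) < 2:
        sku = ["A1", "B2"][len(observations)]
        return f"Thought: I need the price of {sku}.\nAction: lookup_price[{sku}]"
    total = sum(int(line.split(":", 1)[1]) for line in observations)
    return f"Thought: I have both prices.\nFinal Answer: {total}"


def react(question: str, model, tools, max_steps: int = 5) -> tuple[str, list[str]]:
    transcript = [f"Question: {question}"]
    for _ in range(max_steps):
        step = model(transcript)  # Thought, plus either an Action or a Final Answer
        transcript.append(step)
        if "Final Answer:" in step:
            return step.split("Final Answer:", 1)[1].strip(), transcript
        match = ACTION_RE.search(step)
        if match is None:
            raise ValueError(f"unparseable step: {step!r}")
        name, arg = match.groups()
        transcript.append(f"Observation: {tools[name](arg)}")  # Act, then Observe
    raise RuntimeError("step budget exhausted without a final answer")
```

The `max_steps` budget matters in practice: without it, a model that never emits a final answer would loop indefinitely.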

For workflows that require efficiency, upfront decomposition allows you to create a complete plan before execution, enabling parallel work. However, this method can falter if conditions change mid-task. Interleaved planning adapts the plan as new information emerges, offering more flexibility. As-needed decomposition (ADaPT) takes a reactive approach, breaking tasks into smaller parts only when a subtask fails or becomes unexpectedly complex, avoiding unnecessary overhead.
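As-needed decomposition can be expressed as a short recursion: attempt the task, and split it only if the attempt fails. This is a simplified sketch of the ADaPT idea, assuming every subtask must succeed (the published method also lets the planner combine subtasks with AND/OR logic); the chapter-summarizing executor and `split_in_half` planner are toy stand-ins.

```python
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def adapt(
    task: T,
    execute: Callable[[T], Optional[str]],
    decompose: Callable[[T], list[T]],
    depth: int = 0,
    max_depth: int = 3,
) -> list[str]:
    """Try the task directly; decompose only if the executor fails."""
    result = execute(task)
    if result is not None:
        return [result]
    if depth >= max_depth:
        raise RuntimeError(f"could not complete {task!r} within depth {max_depth}")
    results: list[str] = []
    for subtask in decompose(task):
        results.extend(adapt(subtask, execute, decompose, depth + 1, max_depth))
    return results


def summarize_chapters(span: tuple[int, int]) -> Optional[str]:
    start, end = span
    if end - start + 1 > 2:  # toy executor: fails on more than two chapters
        return None
    return f"summary of chapters {start}-{end}"


def split_in_half(span: tuple[int, int]) -> list[tuple[int, int]]:
    start, end = span
    mid = (start + end) // 2
    return [(start, mid), (mid + 1, end)]
```

A two-chapter task runs in one call, while an eight-chapter task is split only as far as needed, which is the overhead saving the text describes.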

| Strategy | Description | Best Applied When... |
|---|---|---|
| Chain-of-Thought (CoT) | Breaks tasks into logical steps. | Tasks involve logical reasoning without external tools. |
| ReAct | Blends reasoning with actions and observations. | External tools and dynamic adjustments are needed. |
| Self-Refine | Iteratively improves output quality. | Precision and accuracy are top priorities. |
| ADaPT | Splits tasks only when needed. | Task complexity is unclear at the outset. |
| PlaG | Uses directed graphs for parallel execution. | Speed and efficiency are critical for independent subtasks. |
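The graph-based row (PlaG) amounts to running each node of a dependency graph as soon as its prerequisites finish. Here is a minimal sketch using the standard library's `graphlib.TopologicalSorter` (Python 3.9+) and `asyncio`; the node names and stub tasks are hypothetical placeholders for LLM or tool calls.

```python
import asyncio
from graphlib import TopologicalSorter
from typing import Awaitable, Callable

PlanStep = Callable[[dict], Awaitable[object]]


async def run_plan(graph: dict[str, set[str]], steps: dict[str, PlanStep]) -> dict:
    """Run each node as soon as all of its prerequisites have finished."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the plan has a cycle
    results: dict = {}
    running: dict = {}  # asyncio.Task -> node name
    while sorter.is_active():
        for node in sorter.get_ready():  # every node whose dependencies are done
            running[asyncio.create_task(steps[node](results))] = node
        done, _ = await asyncio.wait(set(running), return_when=asyncio.FIRST_COMPLETED)
        for finished in done:
            node = running.pop(finished)
            results[node] = finished.result()
            sorter.done(node)
    return results


def stub(value: str) -> PlanStep:
    async def call(results: dict) -> str:
        await asyncio.sleep(0.01)  # stands in for an LLM or tool call
        return value
    return call


async def combine(results: dict) -> str:
    return f"{results['research']} + {results['pricing']}"


PLAN = {"combine": {"research", "pricing"}}  # combine waits on two independent nodes
STEPS = {"research": stub("market notes"), "pricing": stub("price table"), "combine": combine}
```

Running `asyncio.run(run_plan(PLAN, STEPS))` executes `research` and `pricing` concurrently and `combine` only after both complete.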

To ensure smooth operations and scalability, building interoperable AI workflows requires careful planning and adherence to key best practices.

The way you manage state plays a crucial role in determining whether your workflow remains efficient or becomes overly complex. Centralized state management relies on a single orchestrator or gateway to handle cost tracking, rate limiting, and access management. This method simplifies tasks like model routing and failover, allowing you to switch models without reconfiguring individual agents. Additionally, centralized caching can cut latency by up to 80% and token costs by up to 90%. However, this approach carries the risk of a single point of failure.
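A centralized gateway can be sketched as a single class that every agent calls instead of talking to providers directly. This sketch assumes exact-match caching keyed on a hash of the prompt (semantic caching is a different, more involved technique) and treats any exception from a provider as a reason to fail over; `Gateway` and the provider callables are hypothetical.

```python
import hashlib
from typing import Callable, Optional

Provider = Callable[[str], str]


class Gateway:
    """Single entry point: exact-match cache, ordered failover, per-provider call counts."""

    def __init__(self, providers: list[tuple[str, Provider]]):
        self.providers = providers  # tried in order; the first is the primary
        self.cache: dict[str, str] = {}
        self.calls: dict[str, int] = {}

    def complete(self, prompt: str) -> str:
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self.cache:
            return self.cache[key]  # cache hit: no tokens spent
        last_error: Optional[Exception] = None
        for name, call in self.providers:
            try:
                text = call(prompt)
            except Exception as exc:  # e.g., timeout or rate limit: fail over
                last_error = exc
                continue
            self.calls[name] = self.calls.get(name, 0) + 1
            self.cache[key] = text
            return text
        raise RuntimeError("all providers failed") from last_error
```

Because routing lives in one place, swapping or reordering providers changes a single constructor argument rather than every agent.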

On the other hand, distributed state management spreads responsibilities across multiple specialized agents that operate in parallel or route tasks based on input type. This setup excels in speed, as subtasks can run concurrently using "Map-Reduce" techniques, where data is processed in chunks and then aggregated. The downside is that distributed systems can introduce complexity in state aggregation and risk "context drift" if agents fail to maintain a shared understanding. Centralized management is ideal for unified governance and cost control, while distributed systems are better suited for scenarios where speed and specialization outweigh coordination challenges. Once state management is in place, effective prompt engineering can further enhance workflow efficiency.
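The Map-Reduce pattern needs little more than a thread pool, since LLM and tool calls are I/O-bound. In this sketch `map_fn` stands in for a per-chunk agent call and `reduce_fn` for the aggregation step; both are assumptions for illustration.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Iterable, TypeVar

A = TypeVar("A")
B = TypeVar("B")
C = TypeVar("C")


def map_reduce(
    chunks: Iterable[A],
    map_fn: Callable[[A], B],
    reduce_fn: Callable[[list[B]], C],
    max_workers: int = 4,
) -> C:
    """Fan out map_fn over chunks concurrently, then aggregate the partial results."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        partials = list(pool.map(map_fn, chunks))  # results come back in input order
    return reduce_fn(partials)
```

For example, counting how often "risk" appears across document chunks: `map_reduce(chunks, lambda c: c.lower().split().count("risk"), sum)`.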

In production workflows, managing context effectively is critical to avoid exhausting context windows. A three-tier memory model helps here: working memory for the current task, episodic memory for past interactions, and semantic memory for durable facts and knowledge. Use selective passing to share only essential information between agents instead of full conversation histories. For long documents, apply overlapping chunking with a 200-token overlap so that information spanning a chunk boundary is not lost.
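Overlapping chunking is a sliding window whose stride is the chunk size minus the overlap. The sketch below operates on a pre-tokenized list; how you tokenize (a model-specific tokenizer versus a whitespace split) is left as an assumption, and the 1,000-token chunk size is an arbitrary default.

```python
def chunk_with_overlap(tokens: list[str], chunk_size: int = 1000, overlap: int = 200) -> list[list[str]]:
    """Split tokens into windows of chunk_size; consecutive windows share `overlap` tokens."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):  # this window already reaches the end
            break
    return chunks
```

With the defaults, a 2,000-token document yields windows starting at tokens 0, 800, and 1,600, and the last 200 tokens of each window reappear at the start of the next.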

Context compression techniques, such as summarization or key extraction, reduce the token footprint before data passes to the next step. For sequential workflows, a sliding window can retain recent interactions while archiving older context externally. Structured outputs, enforced through exact JSON schemas, ensure machine-parseable results and eliminate "format drift", where agents return inconsistent formats. Both OpenAI and Anthropic (for Claude models) offer structured-output features, making them reliable options for this approach. With refined context management in place, the next step is to implement strict safety protocols for tools.
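Provider-side structured outputs constrain generation, but a client-side check is a cheap backstop that turns format drift into an explicit error. Here is a minimal stdlib sketch; the vendor-evaluation field names are hypothetical, and a full implementation would more likely validate against a real JSON Schema.

```python
import json

# Hypothetical schema for a vendor-evaluation step: field name -> allowed type(s).
EXPECTED_FIELDS = {"vendor": str, "score": (int, float), "risks": list}


def _matches(value, allowed) -> bool:
    # bool is a subclass of int in Python, so reject it explicitly for numeric fields.
    if isinstance(value, bool) and allowed is not bool:
        return False
    return isinstance(value, allowed)


def parse_structured(raw: str, expected: dict = EXPECTED_FIELDS) -> dict:
    """Parse model output and fail loudly on format drift instead of passing it downstream."""
    data = json.loads(raw)  # raises json.JSONDecodeError, a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = expected.keys() - data.keys()
    extra = data.keys() - expected.keys()
    if missing or extra:
        raise ValueError(f"missing={sorted(missing)} extra={sorted(extra)}")
    for field, allowed in expected.items():
        if not _matches(data[field], allowed):
            raise ValueError(f"field {field!r} has type {type(data[field]).__name__}")
    return data
```

Every failure surfaces as a `ValueError`, so a caller can catch one exception type and retry or route to a fallback.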

While efficient context management minimizes data loss, enforcing strict tool permissions ensures the integrity of your workflows. Following the principle of least privilege, as defined in NIST SP 800-53 control AC-6, agents should have access only to the systems and data they absolutely need. Assign each tool a risk rating (low, medium, or high) based on factors like its ability to write data, the reversibility of its actions, and its financial impact. For example, a tool that sends emails might warrant a medium rating, while one authorizing refunds over $10,000 would require a high rating and human approval.
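One way to make the rubric explicit is a small rating function plus an authorization check. The scoring rules below are an illustrative assumption that reproduces the two examples in the text (email as medium, refunds over $10,000 as high with human approval); `ToolProfile`, `rate`, and `may_execute` are hypothetical names.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class ToolProfile:
    name: str
    writes_data: bool
    reversible: bool
    max_financial_impact_usd: float


def rate(tool: ToolProfile, high_impact_usd: float = 10_000) -> Risk:
    """Illustrative rubric: money at stake dominates, then write or irreversible actions."""
    if tool.max_financial_impact_usd > high_impact_usd:
        return Risk.HIGH
    if tool.writes_data or not tool.reversible:
        return Risk.MEDIUM
    return Risk.LOW


def may_execute(tool: ToolProfile, agent_allowlist: set[str], human_approved: bool = False) -> bool:
    """Least privilege: the tool must be on the agent's allowlist; HIGH risk also needs approval."""
    if tool.name not in agent_allowlist:
        return False
    return rate(tool) is not Risk.HIGH or human_approved
```

Keeping the allowlist per agent, rather than global, is what enforces least privilege: an agent that never needs refunds never holds that tool.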

For tools that modify state, use idempotency keys to prevent duplicate actions during retries - essential for avoiding issues like sending the same email twice due to a timeout. Code-execution tools should run in isolated containers with strict CPU and memory limits, and no filesystem persistence. Additionally, apply input validation using regex or LLM-based classifiers to detect and block prompt injections or PII leaks before they reach production. The Model Context Protocol (MCP) provides a standardized way to discover and securely interact with tools, removing the need for hardcoded integrations with every new data source.
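An idempotency key should identify one logical operation, not one attempt, so a retry after a timeout reuses it. In this sketch the key hashes a workflow run ID, tool name, and arguments; the in-memory dict is an assumption that only protects a single process, so production use needs a durable, shared store (and, where the downstream API supports it, passing the key through to that API).

```python
import hashlib
import json
from typing import Any, Callable


def idempotency_key(run_id: str, tool: str, args: dict) -> str:
    """Derive a stable key for one logical operation (not one attempt)."""
    payload = json.dumps({"run": run_id, "tool": tool, "args": args}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class IdempotentExecutor:
    """Stores results by key so retries replay the stored result instead of re-executing."""

    def __init__(self) -> None:
        self._results: dict[str, Any] = {}  # in-process only; use a durable store in production

    def run(self, key: str, action: Callable[..., Any], **kwargs: Any) -> Any:
        if key in self._results:
            return self._results[key]
        result = action(**kwargs)
        self._results[key] = result
        return result
```

Including the run ID in the key means the same email sent by two different workflow runs still goes out twice, while a retry within one run does not.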

| Feature | Single-Agent Workflow | Multi-Agent Workflow |
|---|---|---|
| System Architecture | Centralized/Monolithic | Distributed/Orchestrated |
| Use Cases | Simple queries, basic summarization | Business intelligence, software development, complex research |
| Advantages | Lower latency, lower cost, easier to debug | Specialized expertise, adaptive planning, handles complex subtasks |
| Disadvantages | Limited by context window, prone to "jack-of-all-trades" errors | High orchestration overhead, potential for plan divergence |
| Integration with Prompts.ai | Direct API connection with centralized prompt management | Requires orchestrator for coordination; benefits from MCP-based tool discovery |

Prompts.ai simplifies the integration of tools and models into your AI workflows by building on secure architectures and well-defined objectives. Once you've set up clear permission boundaries and effective context management, the next step is ensuring your workflows connect seamlessly to the tools and models they require. Prompts.ai tackles this challenge with a unified interface that brings together over 35 leading large language models, eliminating the complexity and inefficiency of managing multiple disconnected tools. These integration features complement the planning and safety measures discussed earlier.

Standardizing tool schemas is key to managing updates and maintaining clean boundaries between tools. Instead of hardcoding integrations for every new data source, Prompts.ai encourages the use of clear schemas that outline each tool's purpose, expected inputs, and required permissions. This flexibility allows you to swap models or add new features without overhauling your entire workflow. Additionally, Prompts.ai's observability features provide real-time monitoring and debugging for tool interactions. This helps you catch issues like prompt injection or data leakage early, ensuring workflows remain secure and efficient.
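A standardized tool schema can be as small as a name, a purpose, typed inputs, and required permission scopes. The registry below is a generic Python sketch of that idea, not the Prompts.ai API; `ToolSchema`, `ToolRegistry`, and the `billing:read` scope are hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class ToolSchema:
    name: str
    purpose: str                 # shown to the model when it chooses a tool
    inputs: dict[str, type]      # argument name -> expected Python type
    permissions: frozenset[str]  # scopes a caller must hold


class ToolRegistry:
    def __init__(self) -> None:
        self._tools: dict[str, tuple[ToolSchema, Callable[..., Any]]] = {}

    def register(self, schema: ToolSchema, fn: Callable[..., Any]) -> None:
        self._tools[schema.name] = (schema, fn)

    def describe(self) -> list[dict]:
        """Model-facing catalog: purpose and input names, never the implementation."""
        return [{"name": s.name, "purpose": s.purpose, "inputs": sorted(s.inputs)}
                for s, _ in self._tools.values()]

    def call(self, name: str, caller_scopes: set[str], **kwargs: Any) -> Any:
        schema, fn = self._tools[name]
        missing = schema.permissions - caller_scopes
        if missing:
            raise PermissionError(f"{name} requires {sorted(missing)}")
        if set(kwargs) != set(schema.inputs):
            raise TypeError(f"{name} expects arguments {sorted(schema.inputs)}")
        for arg, expected in schema.inputs.items():
            if not isinstance(kwargs[arg], expected):
                raise TypeError(f"{arg} must be {expected.__name__}")
        return fn(**kwargs)
```

Because callers depend only on the schema, the function behind `get_invoice` (or the model choosing it) can change without touching the workflow.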

Scaling AI workflows requires clear cost visibility. Prompts.ai tracks every token used and ties expenses directly to business outcomes. Automated audit trails further enhance governance by creating detailed compliance documentation. Together, these features enable you to deploy workflows confidently while keeping operations efficient and compliant.
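Tying token spend to business outcomes comes down to attributing each call's cost to a workflow and dividing by the outcomes that workflow produced. The model names and per-million-token prices here are made up for illustration, and `CostLedger` is a hypothetical helper; `Decimal` avoids floating-point drift in currency sums.

```python
from collections import defaultdict
from decimal import Decimal
from typing import Optional

# Hypothetical prices in USD per million tokens: (input, output). Use your provider's real rates.
PRICE_PER_MTOK = {
    "model-small": (Decimal("0.50"), Decimal("1.50")),
    "model-large": (Decimal("3.00"), Decimal("15.00")),
}


class CostLedger:
    def __init__(self) -> None:
        self.spend = defaultdict(Decimal)   # workflow -> total USD
        self.outcomes = defaultdict(int)    # workflow -> completed business outcomes

    def record_call(self, workflow: str, model: str, input_tokens: int, output_tokens: int) -> Decimal:
        in_rate, out_rate = PRICE_PER_MTOK[model]
        cost = (input_tokens * in_rate + output_tokens * out_rate) / Decimal(1_000_000)
        self.spend[workflow] += cost
        return cost

    def record_outcome(self, workflow: str, count: int = 1) -> None:
        self.outcomes[workflow] += count

    def cost_per_outcome(self, workflow: str) -> Optional[Decimal]:
        done = self.outcomes[workflow]
        return self.spend[workflow] / done if done else None
```

Cost per outcome (for example, per resolved invoice) is the number that separates workflows that create value from ones that only consume tokens.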

Instead of starting every workflow from scratch, take advantage of Prompts.ai's library of community-shared templates and proven workflow patterns. These resources allow you to research, test, and adapt high-quality implementations to fit your needs, significantly reducing deployment time. The platform also offers a Prompt Engineer Certification program to develop internal experts who can customize these workflows for your organization. With a global community continuously refining patterns based on practical use cases, you can rely on shared expertise to streamline your AI operations without duplicating effort.

Below is a comparison of planning strategies with actionable tips for using them within Prompts.ai:

| Planning Strategy | Description | Ideal Architecture | Strengths | Prompts.ai Implementation Tips |
|---|---|---|---|---|
| Chain-of-Thought (CoT) | Breaks down complex tasks into logical steps | Single or multi-agent | Enhances reasoning for structured problems | Use during the planning phase for task decomposition; test prompts side-by-side with model comparisons |
| ReAct | Merges reasoning and action by using tools in loops | Multi-agent with tools | Excels in dynamic environments | Standardize tool schemas and enable observability for tool calls |
| Self-Refine | Allows agents to iteratively improve their outputs | Single-agent | Boosts quality through self-assessment | Implement LLM-based evaluation with scoring rubrics; monitor progress using trace logs |

Once workflows are operational, ensuring they are refined, scalable, and governed effectively is key to transforming experimental AI into reliable, enterprise-grade systems. These steps not only help deliver measurable ROI but also ensure compliance with evolving regulations.

To move beyond informal testing, a structured approach to error analysis is essential. Start by identifying where workflows fail, then group these issues into themes to uncover the most critical problems. This process powers the Flywheel of Improvement: analyze workflow traces, measure performance through evaluations, refine prompts or architecture, and automate this cycle for continuous enhancement.
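The error-analysis step can start as simply as counting failed traces per theme. This sketch assumes each trace is a dict with an `ok` flag and an optional `error` string; the keyword rules are hypothetical, and an LLM-based classifier could replace them once themes stabilize.

```python
from collections import Counter

# Hypothetical keyword rules mapping error text to a failure theme; first match wins.
THEME_RULES = [
    ("format_drift", ("jsondecodeerror", "schema")),
    ("tool_timeout", ("timeout", "timed out")),
    ("missing_context", ("not found in context", "no source")),
]


def classify(trace: dict) -> str:
    error = (trace.get("error") or "").lower()
    for theme, keywords in THEME_RULES:
        if any(keyword in error for keyword in keywords):
            return theme
    return "uncategorized"


def top_failure_themes(traces: list[dict], n: int = 3) -> list[tuple[str, int]]:
    """Count failed traces per theme so the biggest problem gets fixed first."""
    counts = Counter(classify(t) for t in traces if not t["ok"])
    return counts.most_common(n)
```

A growing "uncategorized" bucket is itself a signal that a new theme needs a rule.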

For refinement, a multi-layered evaluation strategy works best. Combine code-based assertions for deterministic tasks with LLM-based scoring for subjective outputs. Prompts.ai supports this process with tools like side-by-side model comparisons for real-time testing and trace logs for debugging. For unpredictable edge cases, validation and fallback mechanisms are critical. These ensure workflows degrade gracefully - triggering a fallback model or human review when unsafe or low-quality outputs arise. These measures prepare workflows for scalable operations.
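The layered evaluation and graceful-degradation path can be sketched as follows: run cheap deterministic assertions first, then an LLM rubric score, and fall back to a second model and finally to human review. The `judge` callable, length limit, and 0.7 score threshold are assumptions; in practice `judge` would be an LLM call with a scoring rubric.

```python
from typing import Callable, Optional

Model = Callable[[str], str]
Judge = Callable[[str, str], float]  # returns a 0..1 rubric score


def passes_checks(prompt: str, output: str, judge: Judge, max_chars: int, min_score: float) -> bool:
    # Layer 1: cheap deterministic assertions run first.
    if not output.strip() or len(output) > max_chars:
        return False
    # Layer 2: LLM-based rubric score for subjective quality.
    return judge(prompt, output) >= min_score


def answer_with_fallback(
    prompt: str,
    primary: Model,
    fallback: Model,
    judge: Judge,
    max_chars: int = 500,
    min_score: float = 0.7,
) -> tuple[str, Optional[str]]:
    """Return (route, output): 'primary', 'fallback', or 'human_review' with no output."""
    for route, model in (("primary", primary), ("fallback", fallback)):
        output = model(prompt)
        if passes_checks(prompt, output, judge, max_chars, min_score):
            return route, output
    return "human_review", None
```

Ordering the checks this way means the paid judge call only runs on outputs that are already well formed.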

| Refinement Technique | Best For | Implementation in Prompts.ai |
|---|---|---|
| LLM-Based Evaluation | Subjective quality (tone, relevance) | Use scoring rubrics with trace logs to monitor improvements over time |
| Code-Based Assertions | Deterministic tasks (format, length) | Automate checks before outputs are delivered to users |
| Edge Case Handling | Unpredictable inputs | Set up fallback models or human-in-the-loop triggers |
| A/B Testing | Comparing prompt variations | Test across 35+ models to identify the most effective prompts |

Scaling AI workflows requires complete visibility into every interaction. Many organizations use generative AI in at least one business function, but "Shadow AI" - unauthorized tools that bypass governance - remains a significant risk. Prompts.ai addresses this by acting as a centralized inventory, providing a single system of record for all AI models and data sources.

The platform’s real-time cost tracking links every token to specific business outcomes, helping identify workflows that create value versus those that drain resources. Features like version histories, training data provenance, and decision rationales ensure audit readiness. This level of transparency allows organizations to scale confidently, adding teams, models, and use cases without losing oversight or efficiency.

As AI systems scale, strong governance is essential to maintaining integrity. With 60% of legal, compliance, and audit leaders expecting technology to be their top risk concern by 2026, yet only 29% of organizations having a comprehensive AI governance plan, proactive measures are critical.

Start with a risk-based classification system that categorizes workflows into High, Medium, and Low risk tiers, so that the level of oversight matches each workflow's potential impact.

"We need to be thinking, 'What AI do we have in the house, who owns it and who's ultimately accountable?'" - Maria Axente, Head of AI Public Policy and Ethics, PwC

To ensure accountability, establish a cross-functional oversight committee with representatives from legal, compliance, IT, and business teams. Prompts.ai’s automated guardrails monitor for issues like drift, bias, and unauthorized data usage, blocking unsafe outputs before they reach production. Standardized documentation, such as model cards, data sheets, and factsheets, ensures audit readiness, especially as regulations like the EU AI Act impose fines of up to €35 million or 7% of worldwide annual turnover, whichever is higher, for the most serious violations.

Finally, define clear rollback criteria to remove systems from production in cases of performance drops or ethical concerns. Provide role-specific training so all stakeholders understand their responsibilities within the AI lifecycle. This structured approach ensures that scaling AI systems doesn’t come at the expense of governance or compliance.

Building efficient AI workflows is about more than just implementing technology - it’s about scaling operations, ensuring compliance, and achieving measurable returns. With the rise of agentic workflows, AI agents now handle entire processes, working alongside human teams rather than acting as standalone tools. To succeed, careful planning is essential: identify 3-5 key metrics early on, decide between single-agent systems for repetitive tasks or multi-agent setups for complex operations, and use strategies like Chain-of-Thought or ReAct to break challenges into manageable steps.

Prompt engineering and governance are essential for creating reliable workflows. By managing context, enforcing tool safeguards, and standardizing schemas, you can build robust systems. Federated governance, combined with features like automated bias detection, toxicity monitoring, and red-teaming, ensures compliance and security. Continuous evaluation - tracking accuracy, safety, latency, and cost - helps refine workflows over time, adapting to changes in models without starting from scratch. These governance practices provide the operational oversight needed for long-term success.

Observability and cost transparency are what separate experimental AI setups from production-ready systems. Real-time monitoring and cost tracking connect every token to business outcomes, helping identify high-value use cases while cutting wasteful processes. Prompts.ai simplifies this by offering centralized visibility across 35+ models, complete with FinOps controls, audit trails, and shared community workflows.

Start with quick-win pilots, then expand to multi-agent systems while rigorously testing prompts and context to ensure consistent results. Explore open-source tools before committing to custom builds, and establish clear traceability and permission protocols to prepare for future upgrades. This iterative method - starting with broad plans and refining over time - keeps workflows flexible as AI capabilities grow. These strategies outline the essentials for creating effective AI workflows.

Use Prompts.ai to put these best practices into action. Tap into community workflows, manage costs effectively, and scale securely to streamline AI orchestration with ease.

When tasks grow too intricate for one agent to handle or demand higher levels of reliability, scalability, or specialized expertise, it’s time to consider a multi-agent workflow. These systems shine in scenarios where multi-step processes require collaboration between agents with distinct roles or skills. Begin with a single-agent setup and expand gradually as the complexity of your tasks increases, keeping the system organized and dependable. Leverage tools specifically designed for state management and role coordination to make this shift smoother and more efficient.

To maintain a compact context while preserving critical details, employ rolling windows to emphasize the most recent data. Use summarization techniques to distill older information into concise formats, and apply chunking to break down large documents into manageable sections. By managing context layers effectively, you can minimize redundancy and noise, ensuring the AI model operates efficiently without losing essential information.
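Rolling windows and summarization combine naturally: keep the last few turns verbatim and fold each evicted turn into a running summary. In this sketch the default summarizer just truncates text, a placeholder for an LLM summarization call, and the window size of 6 is arbitrary; `RollingContext` is a hypothetical name.

```python
from collections import deque
from typing import Callable


def truncating_summary(summary: str, message: str, width: int = 40) -> str:
    """Placeholder summarizer; in practice this would be an LLM summarization call."""
    snippet = message[:width]
    return f"{summary} | {snippet}" if summary else snippet


class RollingContext:
    def __init__(self, window: int = 6, summarize: Callable[[str, str], str] = truncating_summary):
        self.window = window
        self.recent: deque = deque()  # most recent turns, kept verbatim
        self.summary = ""
        self.summarize = summarize

    def add(self, message: str) -> None:
        self.recent.append(message)
        while len(self.recent) > self.window:  # fold the oldest turn into the summary
            self.summary = self.summarize(self.summary, self.recent.popleft())

    def render(self) -> str:
        header = [f"Summary of earlier turns: {self.summary}"] if self.summary else []
        return "\n".join(header + list(self.recent))
```

The rendered context stays bounded at `window` verbatim turns plus one summary line, however long the conversation runs.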

To prepare a workflow for production, monitor critical metrics such as system reliability, error rates, response latency, throughput, and cost control. These metrics provide insight into the workflow's stability, performance, and ability to scale effectively in practical scenarios.