Startups in 2026 are racing to deliver AI-driven products while battling complex workflows, high costs, and limited DevOps resources. The latest orchestration tools simplify AI operations, reduce expenses, and enable fast deployment, even for small teams. Here's what you need to know about four of them: Prompts.ai, Prefect, Flyte, and Dagster.

Each tool offers governance, security, and automation tailored to startups. Whether you prioritize cost savings, compliance, or scalability, these platforms empower teams to manage AI workflows efficiently.

Prompts.ai serves as a centralized platform for managing over 35 leading large language models, including GPT-5, Claude, LLaMA, and Gemini, all within a secure interface. For startups in 2026 juggling an array of AI tools, the platform tackles a major challenge: reducing tool overload while keeping the flexibility to choose the best model for each task. Founded by Emmy Award-winning Creative Director Steven P. Simmons, the platform brings a fresh approach to streamlining AI workflows for teams of all sizes. Below, we explore its cost structure, integration options, automation features, and governance tools.

Prompts.ai offers a range of pricing plans tailored to various needs. Personal plans start at $0/month on a pay-as-you-go basis, while individual plans are priced at $29/month, and family plans at $99/month. Business plans range from $99 to $129 per user monthly, utilizing a pay-as-you-go TOKN credit system that can cut costs by as much as 98% compared to subscribing to multiple models individually. This pricing strategy helps startups manage their budgets effectively by avoiding unexpected subscription fees, a common issue for smaller companies keeping a close eye on expenses.

The platform supports seamless integration with popular tools and frameworks, offering Python APIs and pre-built connectors for machine learning ecosystems like TensorFlow and PyTorch, as well as data platforms such as dbt and Snowflake. Its compatibility with Kubernetes-based systems makes it easier to manage complex workflows across multiple cloud environments and toolchains, without requiring extensive custom coding.

Prompts.ai simplifies machine learning workflows through features like automated pipeline creation, hyperparameter tuning, and one-click model retraining. With adaptive batching and automated model chaining, deployment timelines can shrink from weeks to mere hours. These capabilities are especially beneficial for startups aiming to roll out AI-powered features quickly, even without dedicated DevOps teams.

The platform includes robust governance tools, such as role-based access control, data lineage tracking, and audit logs that comply with SOC 2 and GDPR standards. These features ensure multi-user isolation and strict policy adherence, making it an excellent choice for startups in regulated industries. By establishing governance protocols from the outset, companies can avoid the expense and effort of retrofitting compliance measures as they grow.

Prefect 3.0 gives startups a flexible way to manage complex machine learning (ML) workflows. Its revamped engine lets any Python function run unchanged on a laptop, AWS Lambda, or Kubernetes. The platform can handle over 100,000 tasks per minute while reducing orchestration overhead by more than 90%, simplifying transitions between environments.

Prefect uses a per-user pricing model, moving away from charging based on workflow volume or task execution. Its Free Hobby tier supports up to 2 users and 5 deployments, making it ideal for early-stage teams. For serverless operations, Prefect employs a credit-based payment structure where you pay only for runtime, with credits included in all cloud plans. In 2024, Endpoint's Data Engineering team successfully migrated 72 pipelines from Astronomer to Prefect Cloud. This switch led to a 73.78% reduction in monthly costs while tripling production volume. Sunny Pachunuri, Data Engineering and Platform Manager at Endpoint, highlighted that Prefect eliminated the need for retrofitting, making integration highly efficient.

Prefect integrates seamlessly with distributed computing frameworks like Ray and Dask, enabling startups to scale ML workloads across varied computing environments. Its work pools separate code from execution, allowing smooth transitions between Docker, Kubernetes, ECS, and Google Cloud Run. For example, Cash App's ML Tools team, led by Machine Learning Engineer Wendy Tang, migrated their fraud prevention infrastructure to Prefect. This setup allowed them to manage compute needs across AWS, Google Cloud, and Databricks. Using work pools and Access Control Lists, they customized Python package management for different model types while leveraging distributed computing to scale complex tree-based and LLM models. These integrations directly enhance ML automation capabilities.

Prefect supports dynamic task mapping, scaling up to 20,000 tasks across workers. Its result caching feature stores LLM responses, reducing redundant API calls during retries and significantly cutting costs for AI workloads. Andrew Waterman from Actium Health demonstrated this efficiency by using Prefect and Dask to parallelize hyperparameter tuning. He completed 350 experiments in just 30 minutes - a process that would typically take two days. Startups can easily turn existing Python scripts into managed workflows by adding a simple @flow decorator, eliminating the need for rigid DAG structures.
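To make the caching idea concrete, here is a minimal sketch in plain Python of how storing results under a key derived from a call's inputs avoids a second paid API call on retry. It is an illustration of the concept only, not Prefect's API: `CachedLLM`, `cache_key`, and `fake_complete` are hypothetical names invented for this example.

```python
import hashlib
import json
from typing import Callable, Dict


def cache_key(model: str, prompt: str) -> str:
    """Derive a stable key from the call's inputs."""
    payload = json.dumps({"model": model, "prompt": prompt}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class CachedLLM:
    """Wraps a completion function and reuses stored responses for identical inputs."""

    def __init__(self, complete: Callable[[str, str], str]) -> None:
        self._complete = complete
        self._store: Dict[str, str] = {}
        self.api_calls = 0  # counts how often the wrapped function really ran

    def __call__(self, model: str, prompt: str) -> str:
        key = cache_key(model, prompt)
        if key not in self._store:
            self.api_calls += 1
            self._store[key] = self._complete(model, prompt)
        return self._store[key]


def fake_complete(model: str, prompt: str) -> str:
    # Hypothetical stand-in for a paid LLM API call.
    return f"[{model}] summary of: {prompt}"


llm = CachedLLM(fake_complete)
first = llm("example-model", "Quarterly churn report")
# Simulate a retry of the same step after a downstream failure.
second = llm("example-model", "Quarterly churn report")
```

After the simulated retry, `llm.api_calls` is still 1, because the second call is served from the in-memory store. A production setup would persist results outside the process so they survive a restart.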

Prefect Cloud is SOC 2 Type II certified and employs a hybrid execution model to ensure sensitive data remains within your infrastructure. Workers poll the Prefect Cloud API for instructions, eliminating the need for inbound connections. Only metadata, such as logs and run history, is sent to the control plane. The platform also provides robust security features, including role-based access control and Single Sign-On integration. Prefect 3.0 introduces transactional semantics, allowing tasks to be grouped into atomic units. This ensures failures can roll back to a clean state, preventing data corruption. With 99.99% uptime, Prefect supports over 100,000 teams globally.
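The rollback behavior described above can be pictured with a small plain-Python sketch. This is not Prefect's transaction API. It only shows the general pattern of pairing each step with an undo action and unwinding completed steps in reverse when a later step fails. All function names here are hypothetical.

```python
from typing import Callable, List, Tuple

# Each step is an (action, undo) pair.
Step = Tuple[Callable[[], None], Callable[[], None]]


def run_atomically(steps: List[Step]) -> bool:
    """Run actions in order; on failure, undo the completed ones in reverse."""
    undo_stack: List[Callable[[], None]] = []
    try:
        for action, undo in steps:
            action()
            undo_stack.append(undo)
    except Exception:
        for undo in reversed(undo_stack):
            undo()
        return False
    return True


files: List[str] = []  # stands in for files written to storage


def write_features() -> None:
    files.append("features.parquet")


def remove_features() -> None:
    files.remove("features.parquet")


def validate() -> None:
    raise ValueError("schema check failed")


def noop() -> None:
    pass


# The write succeeds, validation fails, so the write is rolled back.
ok = run_atomically([(write_features, remove_features), (validate, noop)])
```

Here `ok` ends up `False` and `files` is empty again, which is the "clean state" the paragraph refers to.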

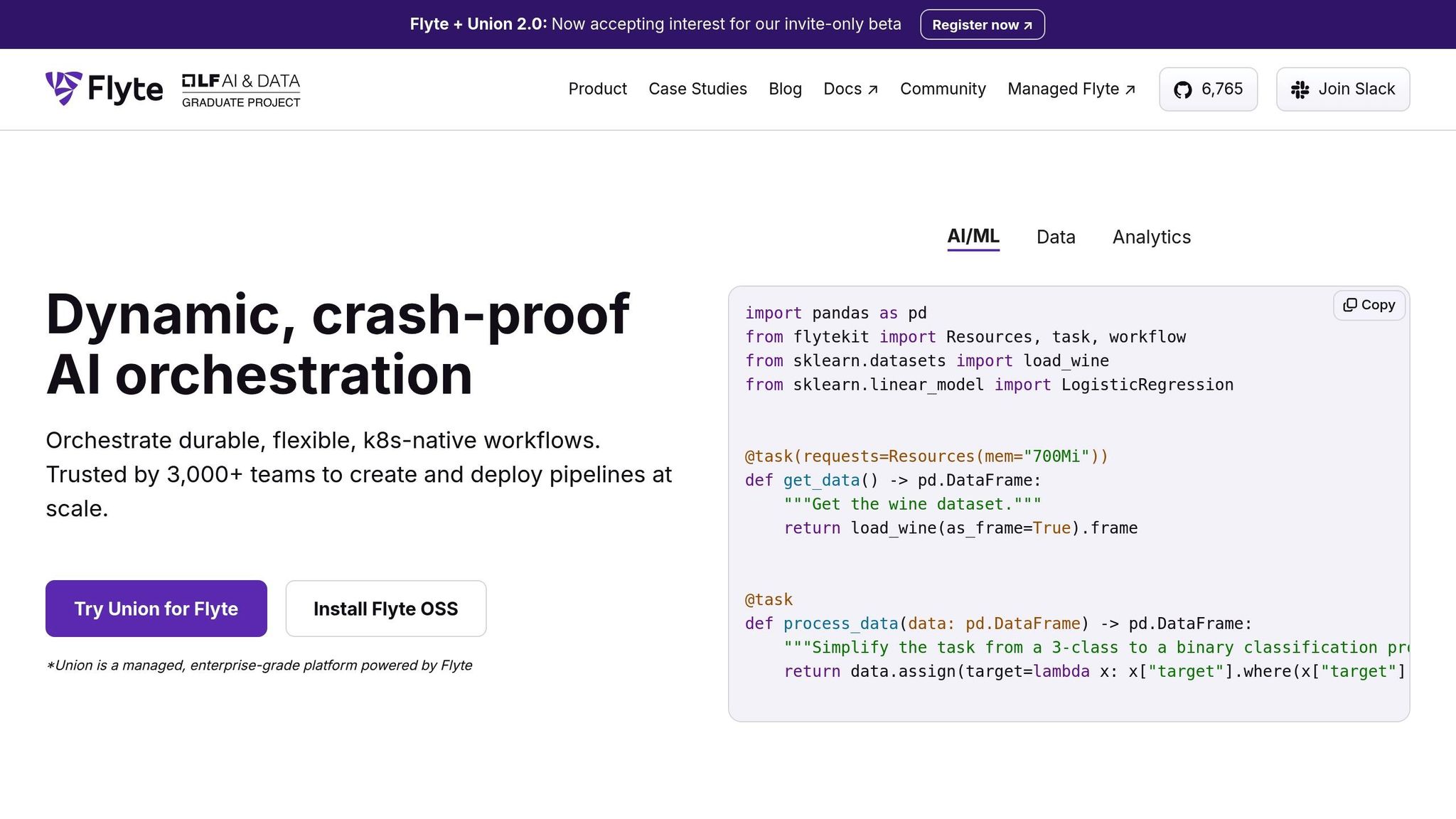

Flyte 2.0 offers a container-native orchestration platform designed to help startups build reliable, production-ready AI pipelines without the hassle of managing complex infrastructure. Trusted by thousands of teams, it has a proven ability to streamline processes and improve efficiency. Seth Miller-Zhang, Senior Software Engineer at ZipRecruiter, shared the impact of adopting Flyte:

"We got over 66% reduction in orchestration code when we moved to Flyte - a huge win!"

This reduction highlights Flyte's ability to simplify orchestration, making workflows easier to manage and more cost-effective. It's an ideal fit for startups looking to deploy quickly and maximize resources.

Flyte is available as a free, open-source platform under the Apache 2.0 license, making it accessible even for startups with tight budgets. For those needing managed infrastructure, Union.ai provides enterprise-grade hosting with pricing tailored for startups. Flyte also includes features like spot instance support through a simple interruptible=True flag, which can cut compute costs by up to 90% compared to on-demand options. With its scale-to-zero capability, startups are charged only for active workflows, avoiding unnecessary expenses from idle infrastructure.

Flyte's Agents Framework uses a gRPC-based layer to integrate seamlessly with hosted services like OpenAI APIs, Databricks, and Snowflake, all without requiring tight coupling to infrastructure. The platform automatically persists dataframes in Apache Arrow format, enabling smooth transitions between tools like Pandas, Spark, and other engines. Flyte also supports SDKs for Java, Scala, and JavaScript while offering native plugins for distributed frameworks such as Ray, PyTorch, and TensorFlow. Its use of Python type hints ensures data compatibility is validated at compile-time, helping catch errors before compute resources are used.
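As a rough illustration of why type hints help, the sketch below checks that each function's return annotation matches the next function's first parameter before anything runs. It uses only the standard library and is not Flyte's implementation. `check_chain` and the three example tasks are hypothetical.

```python
from typing import Callable, List, get_type_hints


def check_chain(tasks: List[Callable]) -> None:
    """Raise TypeError if a task's return type differs from the next task's input type."""
    for upstream, downstream in zip(tasks, tasks[1:]):
        produced = get_type_hints(upstream).get("return")
        hints = get_type_hints(downstream)
        params = [hint for name, hint in hints.items() if name != "return"]
        expected = params[0] if params else None
        if produced != expected:
            raise TypeError(
                f"{upstream.__name__} returns {produced}, "
                f"but {downstream.__name__} expects {expected}"
            )


def load_scores(path: str) -> List[float]:
    return [0.2, 0.4, 0.9]


def average(values: List[float]) -> float:
    return sum(values) / len(values)


def publish(label: str) -> str:
    return f"published {label}"


check_chain([load_scores, average])  # compatible, passes silently

try:
    check_chain([load_scores, average, publish])  # float fed into a str parameter
except TypeError as err:
    mismatch = str(err)
```

None of the task bodies run during the check, so the mismatch between `average` and `publish` is reported before any compute is spent.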

Flyte 2.0 is built to handle large-scale operations with features like map tasks and dynamic resource allocation during runtime. Its warm-container model avoids cold starts by reusing pre-loaded environments, while Kubernetes-native execution ensures elastic autoscaling. Startups can specify GPU types, including MIG-partitioned Nvidia GPUs, using the native Accelerators framework. The stateless Agent Framework adjusts to demand, efficiently managing integrations with external services. These features allow startups to scale effortlessly while automating workflows dynamically.

Flyte enables developers to create workflows using standard Python, with tools like the @dynamic decorator allowing workflows to adapt based on real-time data. Automated caching through versioned tasks prevents redundant computation during iterative development, while ImageSpec simplifies dependency management by letting developers specify them directly in Python, bypassing Dockerfile creation. Jeev Balakrishnan, Software Engineer at Freenome, praised Flyte's reliability:

"It's not an understatement to say that Flyte is really a workhorse at Freenome!"

Flyte ensures reliability with self-healing workflows that recover from failures using built-in retries and checkpointing. Its immutable execution model guarantees that workflow runs cannot be deleted, providing a complete audit trail for compliance. The platform supports multi-tenancy through "projects" and "domains" (development, staging, production), enabling startups to isolate environments and allocate resources effectively. Automated data lineage tracking records all transformations, simplifying debugging and ensuring compliance with regulatory requirements. These features give startups the confidence to scale AI workflows securely and efficiently.

Dagster reshapes orchestration by organizing data assets - like tables, ML models, and notebooks - into a unified graph, giving teams a clear view of how their tools interact. Emil Sundman, Data Engineer at Bizware, compares its impact to dbt's influence on transformation:

"Dagster changed my understanding of orchestration like dbt did for transformation."

By focusing on assets, Dagster simplifies the debugging and scaling of machine learning pipelines, especially in fast-moving startup environments. Let’s break down its cost structure, integration capabilities, scalability, automation, and security features.

Dagster provides a free, open-source version for developers and small teams. For managed infrastructure, Dagster+ offers three pricing tiers, billed in credits.

Credits are used at a rate of 1 per asset materialization or task execution. Additional costs include $0.005 per compute minute for serverless operations and $0.03 per credit for overages. Cost tracking for Snowflake and BigQuery is included in the Pro/Enterprise plans. Startups leveraging Dagster+ Pro have reported $1.7 million in faster time-to-value over three years, reflecting its strong return on investment for scaling teams.
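To get a feel for how these usage rates add up, here is a small estimate in Python. The two rates come from the figures above. The number of credits included in a plan is not stated here, so `included_credits` is a placeholder you would replace with your plan's allowance, and the workload numbers are invented for illustration.

```python
OVERAGE_PER_CREDIT = 0.03      # dollars per credit beyond the plan allowance
SERVERLESS_PER_MINUTE = 0.005  # dollars per serverless compute minute


def monthly_usage_cost(
    materializations: int,
    included_credits: int,
    compute_minutes: int,
) -> float:
    """Estimate usage charges on top of the plan's base price.

    Assumes 1 credit per asset materialization or task execution.
    """
    overage_credits = max(0, materializations - included_credits)
    total = (
        overage_credits * OVERAGE_PER_CREDIT
        + compute_minutes * SERVERLESS_PER_MINUTE
    )
    return round(total, 2)


# Hypothetical month: 40,000 materializations against a placeholder
# allowance of 30,000 credits, plus 6,000 serverless compute minutes.
example = monthly_usage_cost(
    materializations=40_000,
    included_credits=30_000,
    compute_minutes=6_000,
)
```

In this made-up month, the 10,000 credits of overage cost $300 and the compute minutes cost $30, so the usage charge is $330 before the base subscription.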

Dagster's Dagster Pipes feature allows code execution in any programming language across external environments, all while maintaining full observability and lineage tracking. The platform also includes Dagster Components, which convert Python scripts into reusable modules. With YAML-based configurations, it supports tools like dbt, Fivetran, DLT, Snowflake, and Power BI.

Built-in connectors for AWS, GCP, Azure, Tableau, and Looker ensure smooth integration across the data ecosystem. Additionally, Dagster supports the Model Context Protocol (MCP), enabling AI-assisted pipeline creation with safety guardrails. This eliminates the need for custom "glue code", empowering non-engineering stakeholders to build production-ready pipelines.

Dagster+ Pro accommodates hybrid and multi-tenant architectures, giving startups the flexibility to orchestrate workflows on either their own cloud or Dagster's infrastructure. The platform uses partitions and Snowflake MERGE patterns to process only updated data, optimizing backfills and parallel workloads.

Its branch deployments feature creates isolated staging environments for every pull request, allowing teams to test changes safely. EvolutionIQ, for example, reduced client onboarding time from months to less than a week by consolidating their fragmented ML systems. Tom Vykruta, CEO of EvolutionIQ, highlighted the transformation:

"We would not exist today as a company if we didn't move to a single unified codebase, with a real data platform beneath it."

Dagster replaces traditional cron-based scheduling with declarative automation, triggering ML training based on data freshness and quality. Built-in retry mechanisms with exponential backoff handle failures seamlessly, and freshness policies ensure models are trained on up-to-date data. The platform significantly cuts testing and debugging times for ML model updates, reducing them from hours to minutes.
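For readers unfamiliar with exponential backoff, the following standard-library sketch shows the pattern: each retry waits longer than the last. It does not use Dagster's own retry configuration. `retry_with_backoff` and `flaky_training_job` are hypothetical names, and the `sleep` parameter is injectable so the example runs without actually waiting.

```python
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    fn: Callable[[], T],
    max_retries: int = 3,
    base_delay: float = 1.0,
    factor: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying on any exception with delays of base_delay * factor**attempt."""
    attempt = 0
    while True:
        try:
            return fn()
        except Exception:
            if attempt >= max_retries:
                raise  # out of retries, surface the last error
            sleep(base_delay * factor ** attempt)
            attempt += 1


delays: List[float] = []  # records the waits instead of sleeping
calls = {"count": 0}


def flaky_training_job() -> str:
    # Fails on the first two calls, then succeeds.
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("feature store unavailable")
    return "trained"


result = retry_with_backoff(flaky_training_job, sleep=delays.append)
```

The job succeeds on its third call, after recorded waits of 1 and 2 seconds. Real systems often add random jitter to the delay so that many failing jobs do not retry in lockstep.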

When paired with Metaxy, Dagster enables sample-level versioning for multimodal data pipelines, offering precise control over complex workflows. Steven Ayers, Principal Data Engineer at RS Group, shared his experience:

"The new homepage is the first thing I check every morning. In just a few seconds, I know exactly what happened overnight and what needs my attention."

Dagster's hybrid architecture ensures that customer data and pipeline code remain within a startup's private cloud, with Dagster never accessing sensitive raw data. The platform is SOC 2 Type II and HIPAA compliant, making it suitable for handling Protected Health Information (PHI). Security features include enterprise-grade SSO, RBAC, SCIM, and mandatory HTTPS for all requests. Data stored in Postgres, Redis, and S3 is encrypted at rest.

Dagster Compass acts as a developer-focused data catalog, surfacing metadata, lineage, and ownership directly from code. It also automates PII tagging to meet GDPR and CCPA compliance standards. With unified audit logs tracking all user actions and system changes, and multi-tenancy to isolate teams' code and data, Dagster delivers robust governance and security for growing organizations.

ML Orchestration Tools Comparison: Features, Pricing, and Capabilities for Startups 2026

Startups exploring these tools face a mix of opportunities and challenges, shaped by their technical capabilities, budget limits, and security priorities. Open-source solutions eliminate licensing fees, offering cost savings, but often demand more technical expertise and infrastructure management. On the other hand, managed services simplify operations with dedicated support, though they come with ongoing costs.

Here’s a closer look at each tool’s strengths and trade-offs, followed by a comparative table for quick reference.

Flyte 2.0 stands out with its scale-to-zero autoscaling and Python-based workflows. This setup helps startups cut infrastructure costs during idle periods and avoids the need to learn a new domain-specific language. Its self-healing workflows, complete with retries, ensure reliability for long-running AI tasks.

Prefect offers a hybrid model that combines local orchestration with optional managed services, giving startups flexibility. This approach supports teams transitioning from prototypes to full-scale production deployments.

Dagster takes an asset-centric approach, focusing on simplifying debugging and improving visibility into how data, models, and notebooks interact. Its hybrid architecture keeps sensitive data within private environments, meeting compliance needs. It also replaces traditional time-based scheduling with data-triggered workflows through declarative automation.

For startups with strict data protection requirements, governance features like role-based access, audit logging, and certifications are essential to secure partnerships and meet regulatory standards. Additionally, platforms that act as infrastructure-agnostic metadata layers allow teams to switch orchestrators without rewriting code, minimizing the risk of vendor lock-in as MLOps tools evolve.

The table below summarizes these tools' features and trade-offs to help startups weigh their options:

| Feature | prompts.ai | Prefect | Flyte | Dagster |
|---|---|---|---|---|
| Cost Model | Pay-as-you-go TOKN credits; $99-$129/user/month | Free open-source; managed hosting | Free OSS; Union.ai managed service (private beta) | Free OSS; commercial plans available |
| Interoperability | 35+ LLMs unified interface; enterprise integrations | Python-native; broad ecosystem support | Pure Python; integrates with any agent framework | Multi-language execution and tool integrations |
| Scalability | Enterprise-grade | Hybrid execution; local and cloud orchestration | Multi-container pipelines; scale-to-zero autoscaling | Hybrid/multi-tenant architecture |
| ML Automation | Real-time FinOps; prompt workflow optimization | Event-driven triggers; flexible scheduling | Dynamic workflows with caching, retries, and self-healing | Declarative automation; asset-based orchestration |
| Governance/Security | Enterprise audit trails; compliance controls | Role-based access; audit logging | Native multi-tenancy; automatic versioning | SOC 2 Type II, HIPAA compliance; RBAC |

This analysis underscores how tailored orchestration solutions can simplify AI workflows for startups, tackling key challenges like cost control, security, and deployment efficiency.

If managing costs is a priority, prompts.ai’s pay-as-you-go TOKN credit system - ranging from $99 to $129 per user per month - offers a flexible approach. This model ties expenses directly to usage, potentially slashing costs by up to 98% compared to subscribing to individual models separately.

For startups focused on security and compliance, prompts.ai delivers robust features like role-based access controls, audit logging, and built-in multi-tenancy. With SOC 2 and GDPR compliance baked in from the start, your data governance needs are covered, avoiding expensive adjustments as your business grows.

Prompts.ai also accelerates experimentation and deployment with efficient caching, debugging tools, and automated pipelines. These features minimize redundant computations and shorten deployment timelines from weeks to just hours, even without dedicated DevOps resources. One-click model retraining further streamlines the process, keeping teams agile.

If your startup is developing agentic AI systems or managing multiple large language models, prompts.ai’s unified interface integrates over 35 top models - including GPT-5, Claude, LLaMA, and Gemini. Real-time FinOps tracking, adaptive batching, and automated model chaining provide granular cost control while reducing tool sprawl.

Additionally, prompts.ai supports Python-native workflows and integrates seamlessly with TensorFlow, PyTorch, dbt, Snowflake, and Kubernetes for scalable operations. Trial credits and flexible plans allow you to test orchestration patterns on actual workloads, ensuring the platform aligns with your specific needs. By merging advanced orchestration techniques with practical requirements, prompts.ai helps startups optimize resources without compromising on performance.

To choose the best orchestration tool, consider the complexity of your AI workflows, your team's skill level, and your budget. For startups in the early stages, platforms like Prompts.ai offer centralized access to over 35 large language models (LLMs) along with built-in cost management tools. As your operations grow, prioritize platforms that provide features like automation, governance, and scalability. Beginners might find no-code options more approachable, while experienced teams may lean toward open-source tools that allow for greater customization. Select a solution that addresses your current requirements while leaving room for future expansion.

The fastest way to move Python machine learning scripts into prompts.ai is through AI-driven workflows and prompt engineering. Begin by exporting the logic and data flow of your scripts. Next, use AI prompts to modify and tailor the code as needed. Finally, bring everything into prompts.ai’s orchestration platform, which consolidates models and simplifies migration, helping you save both time and effort.

Prompts.ai simplifies managing LLM costs, especially as usage scales. By integrating over 35 models into a single interface, offering flexible pay-as-you-go pricing, and leveraging smart task routing, it helps businesses cut AI expenses by as much as 98%. At the same time, it ensures smoother and more efficient operations.