Manage your AI prompts smarter, faster, and with less hassle. Centralized prompt workflows are transforming how teams handle AI operations by organizing all prompts into a single system. With features like version control, performance tracking, and metadata tagging, this approach eliminates inefficiencies and boosts productivity.

Organizations using structured AI workflows report:

Prompts.ai is a specialized platform that integrates over 35 leading LLMs (e.g., GPT-5, Claude, Gemini) into one secure interface. It offers:

In contrast, spreadsheets and basic tools struggle to meet the demands of modern AI teams, lacking key features like versioning, multi-model testing, and compliance tracking.

For enterprises, researchers, and professionals, centralized workflows are no longer optional - they’re the infrastructure for scaling AI effectively.

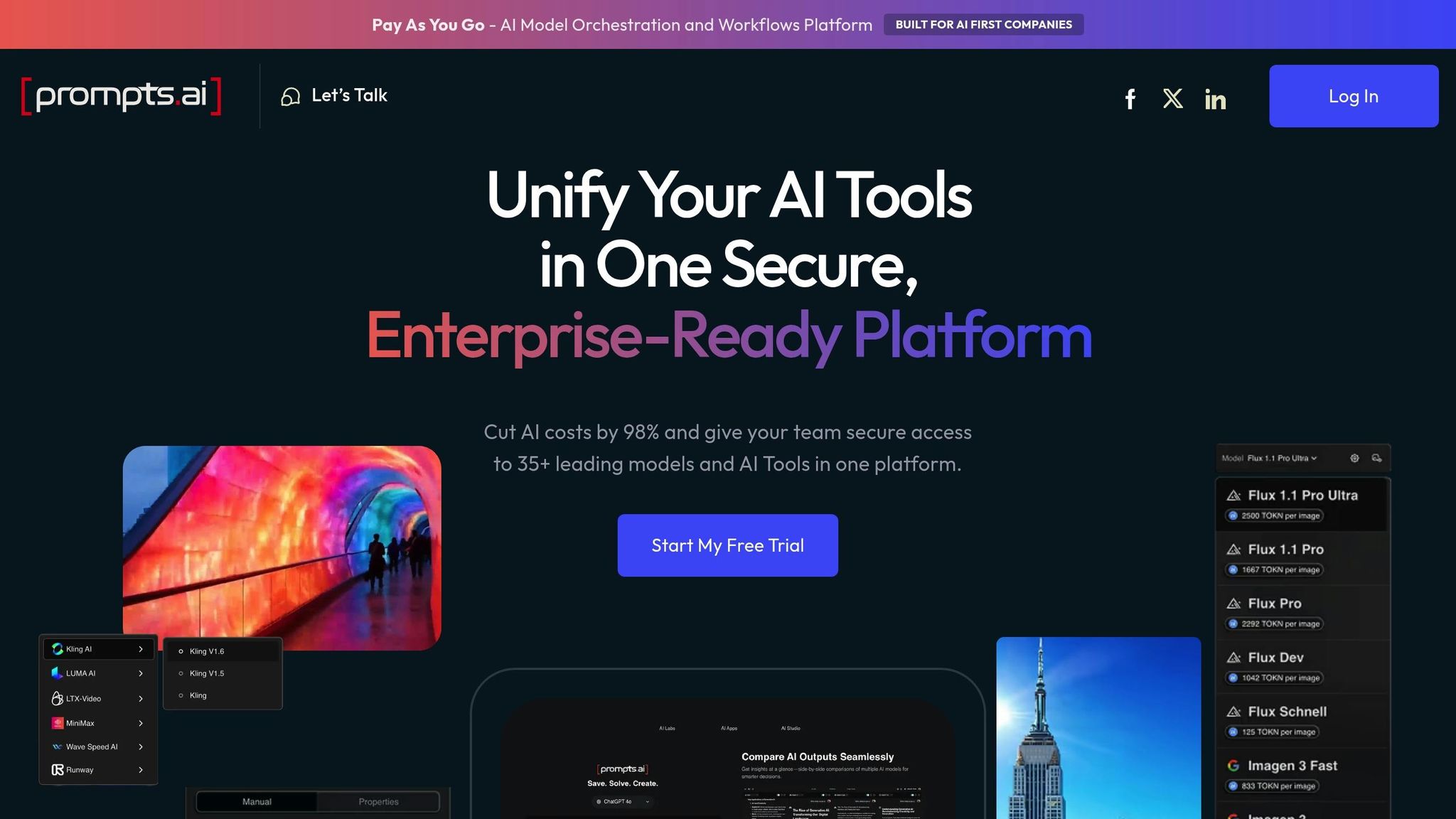

Prompts.ai is a powerful AI orchestration platform designed for enterprise use. It brings together over 35 top large language models - including GPT-5, Claude, LLaMA, Gemini, Grok-4, Flux Pro, and Kling - into one secure and user-friendly interface. By consolidating these models, the platform removes the hassle of juggling multiple subscriptions and logins.

Prompts.ai stands out for its cost-effective approach. Using a pay-as-you-go TOKN credit system, it eliminates the need for recurring subscription fees. This setup allows businesses to align their AI expenses directly with usage, potentially cutting AI software costs by up to 98%. Real-time cost controls also simplify financial tracking by tying each credit to specific projects, making budgeting and reconciliation much easier.
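To make the idea of tying each credit to a project concrete, here is a minimal sketch of a per-project usage ledger with spending limits. It is not the Prompts.ai API: the `CreditLedger` class, its methods, and the project names are hypothetical and only illustrate how usage-based spending can be attributed and capped.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class CreditLedger:
    """Hypothetical ledger: every credit spent is attributed to a project."""

    budgets: dict[str, int]  # project name -> credit limit
    spent: dict[str, int] = field(default_factory=lambda: defaultdict(int))

    def charge(self, project: str, credits: int) -> bool:
        """Record a charge, or refuse it if it would exceed the budget."""
        if project not in self.budgets:
            raise KeyError(f"unknown project: {project}")
        if self.spent[project] + credits > self.budgets[project]:
            return False
        self.spent[project] += credits
        return True

    def remaining(self, project: str) -> int:
        """Credits still available to the project."""
        return self.budgets[project] - self.spent[project]


# Illustrative projects and limits (assumed values).
ledger = CreditLedger(budgets={"support-bot": 1_000, "research": 250})
ledger.charge("support-bot", 400)                  # accepted
over_limit = ledger.charge("research", 300)        # refused: exceeds 250
```

Because every charge names a project, reconciliation reduces to reading `ledger.spent` per project rather than splitting a shared invoice after the fact.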

The platform simplifies model integration by offering access to more than 35 LLMs through a single API. This enables users to compare models side by side and ensures production traffic is automatically routed to the best-performing model. By consolidating SDKs, API keys, and authentication flows, Prompts.ai removes the complexity of managing multiple providers.
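The side-by-side comparison and routing described above can be sketched in a few lines. This is an illustration under stated assumptions, not the platform's routing logic: the "models" are plain Python functions standing in for real provider clients, and the scoring function is a toy.

```python
from typing import Callable

ModelFn = Callable[[str], str]


def compare_models(
    prompt: str,
    models: dict[str, ModelFn],
    score: Callable[[str], float],
) -> dict[str, float]:
    """Run one prompt against every model and return name -> score."""
    return {name: score(fn(prompt)) for name, fn in models.items()}


def pick_best(scores: dict[str, float]) -> str:
    """Return the name of the highest-scoring model."""
    return max(scores, key=scores.get)


# Stand-in models (hypothetical names); real ones would call a provider.
models: dict[str, ModelFn] = {
    "model-a": lambda p: p.upper(),
    "model-b": lambda p: f"Summary: {p}",
}


def starts_with_summary(output: str) -> float:
    """Toy scorer: reward outputs that use the expected prefix."""
    return 1.0 if output.startswith("Summary:") else 0.0


scores = compare_models("quarterly results", models, starts_with_summary)
best = pick_best(scores)
```

In practice the scorer would be an evaluation over many prompts rather than a single string check, but the shape stays the same: one call signature for every model, one score per model, and a routing decision derived from the scores.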

Prompts.ai prioritizes secure and compliant operations. It provides features like role-based access controls, audit trails, and approval workflows to ensure prompts meet organizational standards before going live. Teams can also track version histories for compliance documentation and set spending limits for specific departments. These tools help businesses meet regulatory requirements while maintaining operational security.
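As a rough illustration of how version history, role-based access, and approval gates fit together, consider the sketch below. The class names, the `approver` role, and the rule that authors cannot approve their own changes are assumptions made for the example, not documented platform behavior.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PromptVersion:
    number: int
    text: str
    author: str
    approved_by: Optional[str] = None


@dataclass
class PromptRecord:
    """Hypothetical record that keeps every version of one prompt."""

    name: str
    versions: list[PromptVersion] = field(default_factory=list)

    def propose(self, text: str, author: str) -> PromptVersion:
        """Append a new, unapproved version to the history."""
        version = PromptVersion(len(self.versions) + 1, text, author)
        self.versions.append(version)
        return version

    def approve(self, number: int, reviewer: str, roles: dict[str, set[str]]) -> None:
        """Approve a version; only approvers may do so, and not their own work."""
        if "approver" not in roles.get(reviewer, set()):
            raise PermissionError(f"{reviewer} lacks the approver role")
        version = self.versions[number - 1]
        if version.author == reviewer:
            raise PermissionError("authors cannot approve their own version")
        version.approved_by = reviewer

    def live(self) -> Optional[PromptVersion]:
        """Return the newest approved version, or None if nothing is approved."""
        approved = [v for v in self.versions if v.approved_by]
        return approved[-1] if approved else None


roles = {"dana": {"author"}, "lee": {"author", "approver"}}
record = PromptRecord("ticket-triage")
record.propose("Classify the ticket by urgency.", author="dana")
record.propose("Classify the ticket by urgency and product area.", author="dana")
record.approve(1, reviewer="lee", roles=roles)
```

Here version 2 exists in the history but is not live until someone with the approver role signs off, and the `versions` list doubles as the audit trail.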

The platform is built to scale effortlessly, whether for small teams or large enterprises. Users, models, and business units can be expanded in minutes. The Prompt Engineer Certification program promotes best practices across teams, while an active prompt engineering community helps share and grow institutional knowledge. This centralized approach to prompt management not only standardizes workflows but also boosts productivity by up to 10×, making it an ideal solution for organizations aiming to scale efficiently.

Spreadsheets and text files might be fine for simple tasks, but they fall short when managing the complexities of modern AI workflows. These tools weren’t designed for AI prompt management, which leaves teams struggling to keep up with growing demands. Let’s take a closer look at where they break down.

The fragmented nature of traditional tools becomes glaringly obvious in today’s multi-model environment. Teams are forced to juggle multiple API keys, authentication processes, and SDKs for each LLM provider they use. This scattered setup not only complicates switching between models but also makes comparing their performance more tedious than it should be. Without a unified interface to streamline these tasks, engineers end up spending valuable time on logistics instead of focusing on improving AI outputs.

One of the biggest drawbacks of traditional tools is their lack of built-in governance features. Without proper version tracking, organizations risk losing control over prompt changes, leading to inconsistent and unpredictable AI outputs. It’s no surprise that 95% of AI pilot programs fail to deliver measurable results, with unmanaged prompt changes being a major culprit. On top of that, 70% of IT security leaders worry about the accuracy of AI outputs. Without safeguards like role-based access controls or approval workflows, ensuring compliance and maintaining security becomes an uphill battle.

As AI adoption grows, traditional tools quickly hit their limits. Managing thousands of prompt versions or running bulk evaluations can bog down basic interfaces, making them painfully slow. Teams often find themselves wasting time trying to locate or recreate prompts scattered across various files. Open-source solutions might seem like an alternative, but they require significant resources for setup, scaling, and maintenance, adding even more strain. And when tasks grow more complex and context windows expand, these tools struggle with issues like context rot - where accuracy declines as token counts increase. Such challenges highlight the pressing need for a centralized system capable of keeping up with the evolving demands of AI workflows.

Prompts.ai vs Traditional Workflow Tools: Feature Comparison for AI Prompt Management

When comparing a specialized AI orchestration platform like Prompts.ai to traditional workflow tools, the differences become clear across key areas. The table below highlights how each approach performs for teams handling centralized prompt workflows.

| Criteria | Prompts.ai | Traditional Workflow Tools |
|---|---|---|
| Prompt Experimentation | Offers seamless versioning and deployment through an intuitive UI, allowing for quick iterations on quality, cost, and latency | Lacks native prompt orchestration, relying on manual workarounds and custom integrations |
| Evaluation Capabilities | Provides custom evaluators (deterministic, statistical, LLM-as-a-judge), pre-built tools, and human-in-the-loop assessments | Limited to basic analytics with no AI-specific evaluation workflows |
| Observability | Features real-time monitoring, distributed tracing, alerts, and dataset creation from production data | Includes risk prediction but lacks AI-specific tracing and prompt-focused observability |
| Collaboration | Includes custom dashboards and SDKs (Python, TypeScript, Java, Go) tailored for non-technical teams, eliminating engineering dependencies | General collaboration tools that require custom setups for AI prompt management |
| Integration Flexibility | Simplifies integration and management with unified access to 35+ large language models | Supports 6,000+ app integrations for non-AI tasks, offering general-purpose connectivity |
| Cost | Operates on a pay-as-you-go model using TOKN credits, aligning expenses with actual usage | Lower entry barriers with freemium and subscription models designed for general project management |
| Ease of Use | Involves a steeper learning curve due to AI-specific optimizations | Features familiar interfaces for general tasks without AI-specific complexities |

The comparison underscores the strengths of specialized orchestration in managing AI workflows effectively. Platforms like Prompts.ai excel in areas such as native prompt versioning, composability, and LLM observability - features that traditional tools simply cannot replicate without significant manual effort. While general workflow tools are well-suited for non-AI tasks with their broad scalability and flexibility, they fall short in addressing the unique demands of AI projects. For instance, they lack systematic version control, multi-agent monitoring, and the ability to implement closed-loop improvements based on real-world production data.

Prompts.ai stands out with integrated playgrounds and real-time monitoring, enabling teams to quickly validate prompts and debug production issues. In contrast, traditional tools often require manual processes to achieve similar outcomes. For organizations prioritizing AI-driven initiatives in 2026, experts increasingly recommend specialized platforms that offer end-to-end AI infrastructure. These platforms provide the composability, observability, and debugging capabilities essential for modern LLM workflows, which traditional tools cannot match.

Centralized prompt workflow management has become a key infrastructure requirement for organizations scaling AI operations in 2026. This streamlined approach offers distinct advantages for enterprises, creative teams, and researchers by addressing needs that traditional workflow tools cannot fulfill. Features like native prompt versioning, LLM-specific observability, and structured evaluation frameworks transform experimentation into consistent, repeatable processes.

For enterprises, Prompts.ai stands out with its integrated lifecycle management system, covering experimentation, evaluation, and production monitoring - all within a single interface. With access to over 35 leading models, organizations gain flexibility while maintaining strong governance. The pay-as-you-go TOKN model ensures costs are tied to actual usage, while real-time FinOps controls provide transparency and help manage AI spending across departments.

For creative professionals and domain experts, Prompts.ai simplifies workflows by removing the need for engineering support. Its user-friendly interface allows non-technical team members to iterate on prompts, conduct evaluations, and deploy changes without writing code. This fosters collaboration and speeds up the deployment process, making it ideal for creative teams working on tight deadlines.

For researchers and technical teams, the platform offers advanced tools like custom evaluators, batch testing capabilities, and CI/CD integration for automated testing in development pipelines. Features like distributed tracing and real-time monitoring deliver the insights needed to troubleshoot complex systems. By adopting systematic lifecycle management, organizations can deploy AI agents 5× faster, combining rapid iteration with enhanced observability.
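A batch test with deterministic evaluators, of the kind that could run in a CI pipeline, might look like the sketch below. Everything here is hypothetical: `fake_generate` returns canned text in place of a real model call, and the evaluator helpers are illustrative rather than part of any platform SDK.

```python
import re
from typing import Callable

Evaluator = Callable[[str], bool]


def contains_all(*terms: str) -> Evaluator:
    """Deterministic check: every term must appear in the output."""
    return lambda output: all(t.lower() in output.lower() for t in terms)


def matches(pattern: str) -> Evaluator:
    """Deterministic check: the output must match a regular expression."""
    compiled = re.compile(pattern)
    return lambda output: compiled.search(output) is not None


def run_batch(
    generate: Callable[[str], str],
    cases: list[tuple[str, Evaluator]],
) -> list[str]:
    """Return the prompts whose generated output failed its evaluator."""
    return [prompt for prompt, check in cases if not check(generate(prompt))]


def fake_generate(prompt: str) -> str:
    """Stand-in for a model call; returns canned answers."""
    canned = {
        "refund policy": "Refunds are issued within 14 days of purchase.",
        "order status": "Your order has shipped.",
    }
    return canned[prompt]


cases = [
    ("refund policy", contains_all("refund", "14 days")),
    ("order status", matches(r"tracking number \d+")),
]
failures = run_batch(fake_generate, cases)
```

A pipeline step could fail the build whenever `failures` is non-empty, so a prompt change that drops a required detail is caught before it reaches production.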

Prompts.ai’s centralized approach addresses coordination challenges by unifying prompt management, cost tracking, and team collaboration. Whether you're handling enterprise-scale deployments, creative projects, or research initiatives, the platform provides the infrastructure needed to streamline operations and enhance efficiency across all AI-driven efforts.

A centralized prompt workflow is a game-changer for organizations juggling complex AI operations across various models, tools, and workflows. By consolidating these elements into a single platform, it tackles common pain points like tool overload, escalating costs, and governance challenges. This streamlined approach is particularly advantageous for AI-driven companies, enterprises managing large-scale AI deployments, and teams focused on improving efficiency, maintaining governance standards, and achieving a higher return on their AI investments.

To move prompts from spreadsheets into Prompts.ai, use the platform's Sheets feature, which is designed for tabular data. First, arrange your spreadsheet so that prompts are organized into columns. Then upload the file in CSV format or link an external data source directly. From there, you can use AI agents much as you would use formulas to create or adjust prompts dynamically. Finally, run the prompts directly or set up automated runs to manage and optimize them efficiently.
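Before uploading, it can help to check that the exported CSV is organized the way you expect. The sketch below uses Python's standard `csv` module; the column names (`name`, `template`, `audience`) and the sample rows are assumptions for illustration, not a required import format.

```python
import csv
import io

# Sample export with one prompt per row (hypothetical columns and values).
SHEET = """name,template,audience
welcome,"Write a welcome email for {audience}.",new customers
renewal,"Draft a renewal reminder for {audience}.",annual subscribers
"""


def load_prompts(csv_text: str) -> dict[str, str]:
    """Parse the sheet and fill each template from its own row."""
    prompts: dict[str, str] = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        name = row["name"].strip()
        if not name:
            raise ValueError("every prompt needs a name")
        if name in prompts:
            raise ValueError(f"duplicate prompt name: {name}")
        prompts[name] = row["template"].format(audience=row["audience"])
    return prompts


prompts = load_prompts(SHEET)
```

Catching empty or duplicate names locally is cheaper than discovering them after import, when two rows have silently collapsed into one prompt.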

To improve the quality of prompts, focus on tracking important metrics such as output accuracy, relevance, and consistency. These indicators help pinpoint which prompts work well and highlight areas needing adjustment. Implement version control to compare different iterations and assess how changes impact performance. Keep an eye on execution costs to streamline workflows efficiently, and collect feedback from users or stakeholders to gain valuable qualitative insights. By maintaining a systematic approach to tracking and evaluation, you can refine prompts continuously and achieve better outcomes over time.
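Comparing iterations comes down to grouping evaluation runs by prompt version and looking at quality and cost together. The sketch below assumes a simple run log with hypothetical fields (`version`, `correct`, `credits`) and made-up values; real logs would carry more detail.

```python
from collections import defaultdict

# Illustrative run log: one entry per evaluated output (assumed data).
runs = [
    {"version": 1, "correct": True, "credits": 10},
    {"version": 1, "correct": False, "credits": 10},
    {"version": 1, "correct": True, "credits": 10},
    {"version": 1, "correct": False, "credits": 10},
    {"version": 2, "correct": True, "credits": 12},
    {"version": 2, "correct": True, "credits": 12},
    {"version": 2, "correct": True, "credits": 14},
    {"version": 2, "correct": False, "credits": 14},
]


def summarize(run_log: list[dict]) -> dict[int, dict[str, float]]:
    """Return accuracy and average cost for each prompt version."""
    grouped: dict[int, list[dict]] = defaultdict(list)
    for run in run_log:
        grouped[run["version"]].append(run)
    return {
        version: {
            "accuracy": sum(r["correct"] for r in items) / len(items),
            "avg_credits": sum(r["credits"] for r in items) / len(items),
        }
        for version, items in grouped.items()
    }


summary = summarize(runs)
```

In this made-up log, version 2 is more accurate (0.75 versus 0.5) but costs more per run (13 credits versus 10), which is exactly the kind of trade-off that tracking both metrics side by side makes visible.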