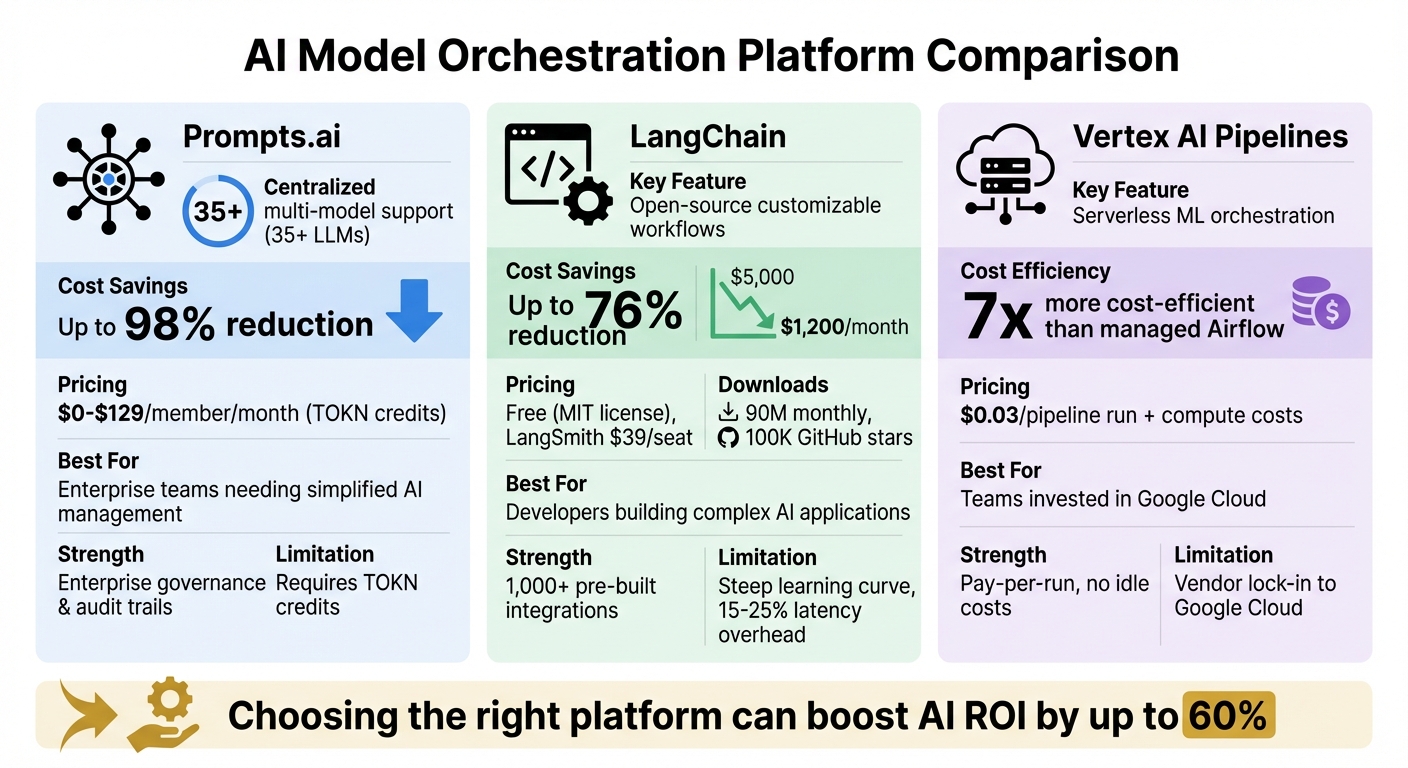

AI model orchestration connects models, data, and applications into efficient workflows. Without it, organizations face inefficiencies, wasted resources, and high costs. Choosing the right platform can boost AI ROI by up to 60% while cutting expenses. This article compares three leading platforms: Prompts.ai, LangChain, and Vertex AI Pipelines.

Each tool fits unique needs, whether you prioritize centralized management, advanced customization, or scalable cloud solutions. Below is a quick comparison to help you decide.

| Platform | Key Feature | Main Limitation | Best For |
|---|---|---|---|
| Prompts.ai | Centralized multi-model support | Requires TOKN credits | Simplifying AI management across teams |
| LangChain | Customizable workflows with LangGraph | Steep learning curve | Developers building complex AI applications |
| Vertex AI | Serverless, pay-per-run ML orchestration | Google Cloud dependency | Teams already invested in Google Cloud |

Choose the platform that aligns with your goals and infrastructure to streamline AI workflows and maximize returns.

AI Model Orchestration Platforms Comparison: Prompts.ai vs LangChain vs Vertex AI

Prompts.ai serves as a centralized AI orchestration platform, bringing together over 35 AI models: large language models such as GPT-5, Claude, LLaMA, Gemini, and Grok-4, alongside generative media models such as Flux Pro and Kling. By uniting these models within a single, secure interface, it eliminates the hassle of managing multiple subscriptions and credentials, creating a streamlined environment for AI operations.

One standout feature of Prompts.ai is its ability to perform side-by-side comparisons of different models. Teams can test the same prompt across multiple models, making it easier to choose the one that offers the best mix of quality, speed, and cost. This functionality simplifies decision-making and ensures flexibility as new models become available.
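The side-by-side workflow described above can be approximated in a few lines of Python. This is a minimal sketch of the idea, not Prompts.ai's API: `compare`, `model_a`, and `model_b` are hypothetical names, the models are stubs, and the per-token prices are placeholders rather than real rates.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Result:
    model: str
    output: str
    latency_s: float
    est_cost_usd: float


def compare(prompt: str,
            models: Dict[str, Callable[[str], str]],
            price_per_1k_tokens: Dict[str, float]) -> List[Result]:
    """Run one prompt through every model and collect comparable metrics."""
    results = []
    for name, call in models.items():
        start = time.perf_counter()
        output = call(prompt)
        latency = time.perf_counter() - start
        # Crude token estimate (whitespace split); real tokenizers differ.
        tokens = len(prompt.split()) + len(output.split())
        cost = tokens / 1000 * price_per_1k_tokens[name]
        results.append(Result(name, output, latency, cost))
    # Cheapest first; swap the key to rank by latency or a quality score.
    return sorted(results, key=lambda r: r.est_cost_usd)


# Hypothetical stand-ins for real model clients.
def model_a(prompt: str) -> str:
    return "short answer"


def model_b(prompt: str) -> str:
    return "a considerably longer and more detailed answer"


ranked = compare(
    "Summarize our refund policy",
    {"model-a": model_a, "model-b": model_b},
    {"model-a": 0.50, "model-b": 0.50},  # placeholder prices, not real rates
)
for r in ranked:
    print(r.model, r.est_cost_usd)
```

In practice the ranking key would likely blend cost, latency, and a quality score from human or automated review; cost alone is used here only to keep the sketch short.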

Prompts.ai is built with enterprise-level governance in mind. It provides detailed audit trails that track user access, data flow, and prompts used, ensuring compliance with strict regulatory standards. Role-based access controls protect sensitive projects while still allowing shared infrastructure benefits, giving organizations both visibility and control as they scale their AI usage.
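To make the combination of role-based access control and audit trails concrete, here is a minimal sketch in plain Python. It is an illustration of the general pattern only: the role names, permissions, and the `AuditedGateway` class are assumptions and do not describe how Prompts.ai implements governance.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Set

# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "admin": {"run_prompt", "view_audit_log", "manage_users"},
    "analyst": {"run_prompt"},
}


@dataclass
class AuditedGateway:
    audit_log: List[dict] = field(default_factory=list)

    def authorize(self, user: str, role: str, action: str) -> bool:
        """Check a permission and record the attempt, allowed or not."""
        allowed = action in ROLE_PERMISSIONS.get(role, set())
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "action": action,
            "allowed": allowed,
        })
        return allowed


gateway = AuditedGateway()
print(gateway.authorize("dana", "analyst", "run_prompt"))      # True
print(gateway.authorize("dana", "analyst", "view_audit_log"))  # False
print(len(gateway.audit_log))                                  # 2
```

Logging denied attempts as well as successful ones is the design choice that matters here: compliance reviews usually need to see who tried to do what, not just what succeeded.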

The platform introduces a TOKN credit system, offering a pay-as-you-go model instead of recurring subscriptions. These credits can be used across all 35+ models, potentially cutting AI software expenses by up to 98%. Additionally, built-in FinOps tools help monitor token usage. Pricing begins at $0 per month for exploratory use, with business plans available from $99 to $129 per member per month.

Prompts.ai features a user-friendly, code-light interface that integrates easily with existing systems. It ensures strong data security while simplifying AI workflow management, making it an efficient tool for organizations looking to optimize their AI operations.

LangChain stands out as a developer-focused framework designed for building intricate AI workflows. This open-source orchestration tool has gained significant traction, boasting over 90 million monthly downloads and 100,000 GitHub stars. Licensed under MIT, it’s free to use, though its observability tool, LangSmith, offers a professional plan priced at $39 per seat per month.

LangChain lets developers connect multiple large language models (LLMs), APIs, and data sources into cohesive workflows. With LangGraph, an extension that turns linear chains into directed graphs, teams can build systems with cycles, branching, and parallel processing. This makes it well suited to multi-agent orchestration, where each agent is specialized for a task through custom prompts and training. LangChain also simplifies integration with providers like OpenAI, Anthropic, and local models through Ollama, offering standardized API methods such as invoke(), stream(), and batch(). This consistency helps streamline operations and enables cost-conscious model selection.

LangChain’s routing capabilities allow queries to be directed to the most budget-friendly model for each task. For example, in April 2025, a mid-sized e-commerce company slashed its chatbot expenses from $5,000 to $1,200 per month - a 76% reduction - by using LangChain and FAISS to implement a hybrid LLM router. Handling 10,000 daily queries, the system routed basic tracking inquiries to GPT-3.5-turbo while reserving Claude-2 for more complex tasks like ethical reasoning and refund denials, all while maintaining a 95% customer satisfaction rate [1]. LangSmith further enhances cost management by enabling trace-level token tracking, which helps teams identify and optimize high-cost features or users.
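The routing idea in this case study can be sketched without any framework. The example below is a simplified stand-in, not the company's implementation: it swaps the FAISS similarity search for a plain keyword check, and the keyword list, tier names, and stub models are all hypothetical. The last function reproduces the 76% figure from the numbers quoted above.

```python
from typing import Callable, Dict

# Hypothetical keyword list; the case study used FAISS similarity search instead.
SIMPLE_KEYWORDS = ("track", "where is my order", "delivery status")


def pick_model(query: str) -> str:
    """Return the model tier that should handle the query."""
    text = query.lower()
    if any(keyword in text for keyword in SIMPLE_KEYWORDS):
        return "cheap"
    return "premium"


def route(query: str, models: Dict[str, Callable[[str], str]]) -> str:
    """Send the query to whichever tier pick_model selects."""
    return models[pick_model(query)](query)


def percent_reduction(before: float, after: float) -> float:
    return (before - after) * 100 / before


# Stub models standing in for a low-cost and a high-capability LLM.
models = {
    "cheap": lambda q: f"[cheap] {q}",
    "premium": lambda q: f"[premium] {q}",
}

print(route("Where is my order #123?", models))    # [cheap] Where is my order #123?
print(route("Why was my refund denied?", models))  # [premium] Why was my refund denied?
print(percent_reduction(5000, 1200))               # 76.0
```

A keyword check is brittle compared with embedding similarity, but it shows the structure: a cheap classification step in front of the models, with the expensive model as the default so that ambiguous queries are not under-served.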

LangChain’s modular design offers over 1,000 pre-built integrations across LLMs, vector stores, chat models, document loaders, and retrievers. This flexibility allows developers to swap out tools, models, or databases without altering the core application logic. Using LCEL’s declarative syntax and pipe operator (|), developers can chain components seamlessly, benefiting from automatic schema validation and asynchronous support.
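To show what pipe-style composition means mechanically, here is a toy re-creation in plain Python. It is not LangChain's implementation and omits schema validation and async support; the `Step` class and the three stages are hypothetical, and the "model" is a stub that simply uppercases its input.

```python
from typing import Any, Callable, Iterable, List


class Step:
    """A toy runnable: wraps a function and supports `|` composition."""

    def __init__(self, fn: Callable[[Any], Any]):
        self.fn = fn

    def invoke(self, value: Any) -> Any:
        return self.fn(value)

    def batch(self, values: Iterable[Any]) -> List[Any]:
        return [self.invoke(v) for v in values]

    def __or__(self, other: "Step") -> "Step":
        # `a | b` builds a new step that feeds a's output into b.
        return Step(lambda value: other.invoke(self.invoke(value)))


# Hypothetical stages: prompt template -> stub model -> output parser.
prompt = Step(lambda topic: f"Write one word about {topic}")
fake_model = Step(lambda text: text.upper())
parser = Step(lambda text: text.split()[-1])

chain = prompt | fake_model | parser
print(chain.invoke("cats"))           # CATS
print(chain.batch(["cats", "dogs"]))  # ['CATS', 'DOGS']
```

Because every stage exposes the same interface, replacing `fake_model` with a different step leaves the rest of the chain untouched, which is the property the paragraph above describes.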

As AI automation expert Alex Hrymashevych explains, "LangChain is a production-first orchestration framework for teams building stateful, multi-step agents and RAG-enabled applications."

Vertex AI Pipelines stands out as a serverless option tailored for efficient and scalable ML workflows. This Google Cloud service charges a flat $0.03 per pipeline run, plus associated compute costs, and only provisions resources during execution. A comparison from 2026 highlights its cost advantage: a typical monthly workflow with daily preprocessing and GPU training cost $438 using managed Airflow, but only $65.53 with Vertex AI Pipelines, making it roughly 6.7 times cheaper for standard ML tasks.
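The arithmetic behind that comparison is easy to check. The short sketch below uses only the figures quoted above; the 30-runs-per-month count is an assumption standing in for "daily" runs, and the function names are illustrative.

```python
PER_RUN_FEE_USD = 0.03  # flat per-run fee quoted above


def monthly_run_fees(runs_per_month: int) -> float:
    """Orchestration fees only; compute is billed separately."""
    return runs_per_month * PER_RUN_FEE_USD


def cost_ratio(baseline_usd: float, alternative_usd: float) -> float:
    return baseline_usd / alternative_usd


airflow_monthly = 438.00
vertex_monthly = 65.53

print(round(cost_ratio(airflow_monthly, vertex_monthly), 2))  # 6.68
print(round(monthly_run_fees(30), 2))                         # 0.9
```

The per-run fee is a small slice of the monthly total, so most of the difference comes from not paying for an always-on orchestrator between runs.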

The platform's serverless architecture and seamless integration set it apart. Workflows are structured as Directed Acyclic Graphs (DAGs), where tasks are containerized, and outputs from one step feed directly into the next. Developers can define pipelines using either the Kubeflow Pipelines (KFP) SDK for general ML tasks or the TensorFlow Extended (TFX) SDK for handling large-scale structured data. With over 1,000 prebuilt "Google Cloud Pipeline Components", teams can connect directly to services like AutoML, BigQuery, Dataflow, and custom model endpoints. This eliminates the need to build complex training and deployment scripts, saving significant engineering time.
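The DAG execution model, in which each task's output feeds the tasks that depend on it, can be illustrated with the standard library alone. This is a conceptual sketch, not the KFP or TFX SDK: tasks here are plain Python functions rather than containers, and `run_dag` and the three-step pipeline are hypothetical.

```python
from graphlib import TopologicalSorter
from typing import Any, Callable, Dict, Tuple

# Each task: (function, names of upstream tasks whose outputs it consumes).
Task = Tuple[Callable[..., Any], Tuple[str, ...]]


def run_dag(tasks: Dict[str, Task]) -> Dict[str, Any]:
    """Execute tasks in dependency order, passing outputs downstream."""
    graph = {name: set(deps) for name, (_, deps) in tasks.items()}
    outputs: Dict[str, Any] = {}
    # static_order() raises CycleError if the graph is not acyclic.
    for name in TopologicalSorter(graph).static_order():
        fn, deps = tasks[name]
        outputs[name] = fn(*(outputs[d] for d in deps))
    return outputs


# Hypothetical three-step pipeline.
pipeline = {
    "load": (lambda: [1.0, 2.0, 3.0, 4.0], ()),
    "preprocess": (lambda rows: [r / 4.0 for r in rows], ("load",)),
    "train": (lambda rows: sum(rows) / len(rows), ("preprocess",)),
}

results = run_dag(pipeline)
print(results["train"])  # 0.625
```

A managed orchestrator adds what this sketch leaves out: isolated containers per task, retries, artifact storage, and parallel execution of independent branches.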

Security and compliance are integral to Vertex AI Pipelines. Organizations can enforce least-privilege access through scoped IAM roles and service accounts. VPC Service Controls establish a secure perimeter, ensuring that training data, models, and artifacts stay within the organization's environment. Additionally, Customer-Managed Encryption Keys (CMEK) safeguard data at rest. The platform's Vertex ML Metadata feature tracks the lineage of all artifacts, parameters, and metrics, syncing this information with the Dataplex Universal Catalog for unified visibility across projects.

Matt Olson, Chief Innovation Officer at Burns & McDonnell, remarked: "Vertex AI enables this innovation to scale responsibly by combining deterministic business rules with probabilistic reasoning, making AI a trusted operational capability - not just a productivity tool."

Vertex AI's pay-per-run model eliminates the idle costs often associated with always-on orchestrators. It also provides native access to Tensor Processing Units (TPUs), offering a cost-effective alternative to GPUs for training large language models and transformer architectures. The platform supports conditional execution logic, such as deploying a model only if its accuracy exceeds a set threshold (e.g., 0.85), preventing unnecessary resource use. Additionally, execution caching skips redundant tasks when inputs remain unchanged, further optimizing costs. These features, combined with seamless integration with external execution engines, ensure efficient resource allocation.
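The two cost controls mentioned above, a deployment gate and execution caching, follow a simple logic that can be shown in plain Python. This sketch illustrates the concepts only and does not use the Vertex AI or KFP APIs; `cached_step`, `train`, and `maybe_deploy` are hypothetical, and the training function is a stub that returns a fixed accuracy.

```python
import hashlib
import json
from typing import Any, Callable, Dict

_cache: Dict[str, Any] = {}
calls = {"train": 0}  # counts real executions, to make cache hits visible


def cached_step(name: str, fn: Callable[..., Any], **inputs: Any) -> Any:
    """Skip re-execution when the step name and inputs are unchanged."""
    key_src = json.dumps({"step": name, "inputs": inputs}, sort_keys=True)
    key = hashlib.sha256(key_src.encode()).hexdigest()
    if key not in _cache:
        _cache[key] = fn(**inputs)
    return _cache[key]


def train(learning_rate: float) -> float:
    calls["train"] += 1
    # Stub: pretend the run produced this accuracy.
    return 0.91 if learning_rate <= 0.01 else 0.80


def maybe_deploy(accuracy: float, threshold: float = 0.85) -> str:
    """Deploy only when accuracy clears the threshold."""
    return "deployed" if accuracy > threshold else "skipped"


first = cached_step("train", train, learning_rate=0.01)
second = cached_step("train", train, learning_rate=0.01)  # cache hit, no rerun
print(calls["train"])       # 1
print(maybe_deploy(first))  # deployed
print(maybe_deploy(cached_step("train", train, learning_rate=0.1)))  # skipped
```

Keying the cache on a hash of the step name and its inputs is what makes the skip safe: any change to a parameter produces a new key and forces a fresh run.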

Vertex AI Pipelines can also hand work off to engines outside the pipeline runtime itself: BigQuery for data warehousing, Dataflow for stream processing, and Apache Spark for distributed computing. The KFP SDK includes a local.init() function, enabling developers to test components and pipelines locally before deploying them to the cloud. This helps catch logic errors early and reduces unnecessary cloud usage. Furthermore, integration with Cloud Billing allows pipeline run data to be exported to BigQuery, providing detailed cost analysis across projects and teams.

Vertex AI Pipelines reimagines ML workflow orchestration with its serverless framework, strong governance features, and deep integrations, making it a powerful tool for handling complex AI deployments efficiently.

This section highlights the core advantages and limitations of each platform, helping you weigh their suitability based on your needs and technical setup.

Each tool brings its own set of benefits and challenges, influencing factors like efficiency, productivity, and ease of maintenance. Understanding these distinctions is key to selecting the right fit for your specific use case.

LangChain stands out as a modular, open-source framework offering high flexibility and a rich plugin ecosystem, making it ideal for complex applications like RAG-based chatbots. However, its abstraction layers can add a 15–25% latency overhead compared to direct model calls, as noted by Emmanuel Ohiri. Additionally, the platform’s steep learning curve requires advanced programming skills. While initial development costs are low, ongoing maintenance and debugging demand significant manual effort.

Vertex AI Pipelines, on the other hand, provides a managed, pay-as-you-go service that simplifies infrastructure management within the Google Cloud ecosystem. Its seamless integration benefits teams already working in GCP. However, reliance on Google Cloud can lead to vendor lock-in, and using advanced features often requires specialized knowledge of GCP.

Below is a quick comparison of their strengths and weaknesses:

| Platform | Key Strength | Main Weakness | Best For |
|---|---|---|---|
| LangChain | Flexible, with a robust plugin ecosystem | Latency overhead; steep learning curve | Developers creating custom LLM applications |
| Vertex AI Pipelines | Managed service, easy cloud integration | Vendor lock-in; GCP expertise needed | Teams already invested in Google Cloud |

LangChain is a great choice for developers seeking maximum customization and flexibility across cloud environments. Meanwhile, Vertex AI Pipelines works well for teams looking for a managed solution within Google Cloud, despite the limitations of vendor lock-in.

Having a unified orchestration strategy is crucial for avoiding fragmentation and getting the most out of your AI investments. The right platform for your team will depend on factors like expertise, existing infrastructure, and future growth plans.

For enterprises prioritizing security, governance, and clear cost management, Prompts.ai offers a platform that consolidates over 35 top language models into one secure interface. This eliminates the chaos of managing multiple tools and simplifies AI adoption for teams of any size. It’s an ideal solution for streamlining AI workflows across diverse teams.

For those with advanced technical expertise who want to design highly tailored workflows, LangChain provides an open-source ecosystem with deep customization options for LLM applications. On the other hand, organizations already operating within the Google Cloud ecosystem may prefer Vertex AI Pipelines for its managed orchestration capabilities. Each of these options serves specific needs, helping teams align their AI efforts with their unique objectives.

AI model orchestration is all about organizing, managing, and automating the operation of various AI models, tools, and workflows within a single system. This approach simplifies processes, enhances automation, and ensures proper oversight. As AI systems become increasingly intricate, orchestration plays a key role in making the best use of resources, cutting expenses, and keeping operations efficient. It’s particularly important for scaling AI efforts and enabling smooth interaction between models in enterprise settings.

Choosing the right orchestration platform comes down to your team’s priorities, technical expertise, and the size of your projects. Prompts.ai stands out for AI-focused companies juggling multiple models, offering streamlined cost management and enterprise-grade governance. On the other hand, open-source tools like Apache Airflow provide greater flexibility but demand more upkeep. When evaluating platforms, consider factors like how easily they integrate with your existing tools, their ability to scale with your needs, observability features, and cost control measures. These elements should align with your team’s skills and the complexity of your workflows.

When selecting an AI model orchestration platform, focus on key features that safeguard your operations. Look for data protection measures, compliance certifications such as SOC 2 and HIPAA, role-based access control (RBAC), and audit logs to maintain oversight. Additional tools like encryption, defenses against prompt attacks, and integrated cost management (FinOps) further reinforce security and operational transparency. These elements help ensure your workflows are secure, meet regulatory requirements, and operate with clear accountability.