AI tools are now essential for businesses in 2026. From automating workflows to connecting thousands of applications, these platforms help teams scale operations efficiently. Whether you're managing simple automations or complex AI pipelines, choosing the right tool depends on your team's needs, technical expertise, and workflow complexity.

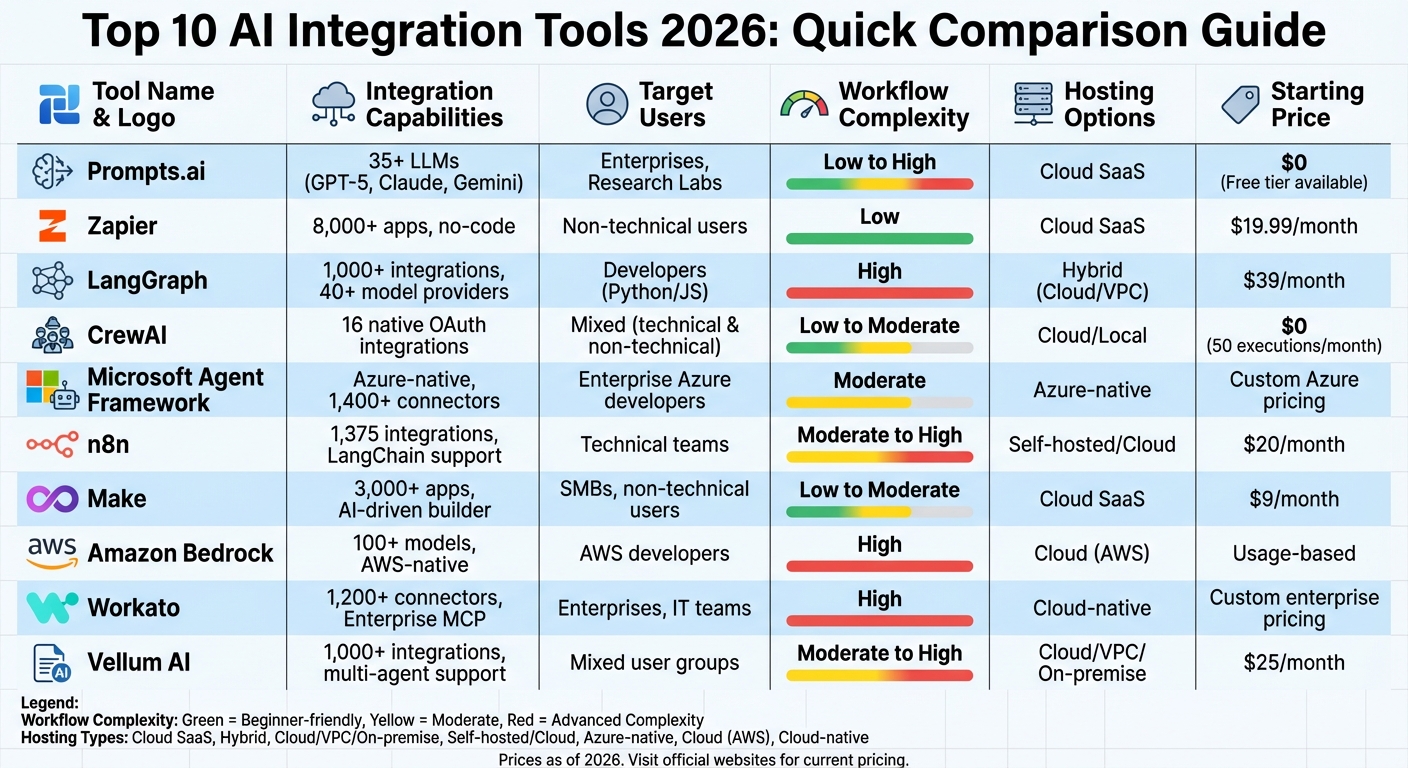

Here’s a quick overview of the 10 best AI integration tools of 2026:

These tools cater to a range of users - from non-technical teams needing no-code solutions to developers managing intricate, multi-agent workflows. Key considerations include integration capabilities, pricing models, and hosting options. For enterprises, governance and security features are critical, while smaller teams may prioritize ease of use and affordability.

| Tool | Integration Capabilities | Target Users | Workflow Complexity | Hosting Options | Pricing Model |
|---|---|---|---|---|---|
| Prompts.ai | 35+ LLMs, unified interface | Enterprises, research labs | Low to High | Cloud-native SaaS | Free to $129/member/month |
| Zapier | 8,000+ apps, no-code builder | Non-technical users | Low | Cloud (SaaS) | Free, $19.99–$69/month |
| LangGraph | Open-source graph workflows | Developers | High | Hybrid (Cloud/VPC) | Free core, $39+/month |
| CrewAI | 16 native integrations | Mixed user groups | Low to Moderate | Cloud/Local | Free to $25/month |
| Microsoft Agent Framework | Azure-native, secure APIs | Enterprises in Azure | Moderate | Azure-native | Custom Azure pricing |
| n8n | 1,375 integrations, LangChain | Technical teams | Moderate to High | Self-hosted/Cloud | Free, $20+/month |
| Make | 3,000+ apps, AI-driven builder | SMBs, non-technical users | Low to Moderate | Cloud (SaaS) | Free, $9+/month |
| Amazon Bedrock | 100+ models, AWS tools | AWS developers | High | Cloud-native (AWS) | Usage-based |
| Workato | 1,200+ connectors, AI agents | Enterprises, IT teams | High | Cloud-native | Custom enterprise pricing |
| Vellum AI | Multi-agent support, 1,000+ apps | Mixed user groups | Moderate to High | Cloud/VPC/On-premise | Free, $25+/month |

Choosing the right tool supports efficient scaling and cost savings. For simple automations, tools like Zapier and Make are ideal. For technical teams, platforms like LangGraph and n8n offer greater control. Enterprise users may prefer Amazon Bedrock or Microsoft Agent Framework for advanced security and compliance. Each platform targets a specific mix of needs, so the best fit depends on your team and your workflows.

AI Integration Tools 2026: Feature Comparison Chart

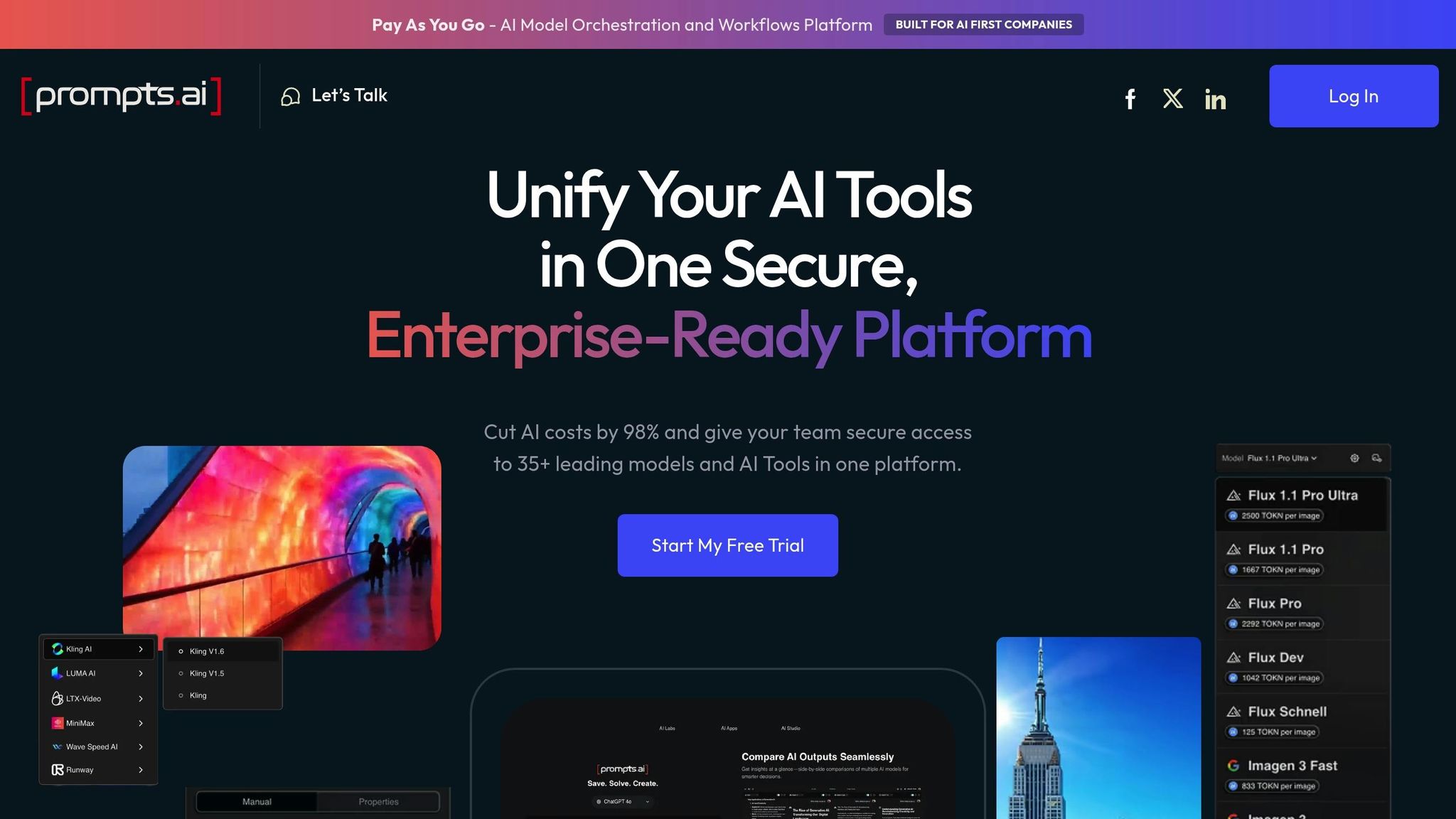

Prompts.ai is an AI orchestration platform designed for enterprises, bringing together more than 35 leading AI models, including GPT-5, Claude, LLaMA, Gemini, Grok-4, Flux Pro, and Kling, under a single, secure interface. By consolidating these models, it removes the hassle of managing multiple subscriptions and streamlines operations.

At the core of Prompts.ai is access to more than 35 models through one unified interface, which removes the need to juggle multiple API keys, billing systems, or authentication processes. The platform supports a broad range of AI functionality, including text generation, image creation (via Flux Pro), and video generation (through Kling). This makes it a good fit for teams that need diverse AI tools without maintaining relationships with multiple vendors.

Prompts.ai is designed to meet the needs of enterprises, creative agencies, and research labs. It’s particularly valuable for organizations - ranging from Fortune 500 companies to smaller creative teams - that require compliance controls, transparent cost tracking, and detailed audit trails for AI usage. With features like Prompt Engineer Certification programs and pre-built "Time Saver" workflows, the platform caters to both technical experts and non-technical users, ensuring repeatable and compliant processes for all.

Prompts.ai excels at handling multi-step orchestration, enabling users to create advanced workflows. Through its multi-step RAG (retrieval-augmented generation), the platform breaks down complex tasks into manageable steps, each with its own retrieval and reasoning process. These steps can be linked together, allowing teams to transform one-off experiments into scalable, repeatable workflows. This capability ensures teams can efficiently manage intricate AI processes without losing consistency or control.
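To make the idea of multi-step RAG a little more concrete, here is a minimal plain-Python sketch in which every step runs its own retrieval before any reasoning would happen. It does not use the Prompts.ai API: the `DOCS` list, the word-overlap `retrieve` function, and `run_steps` are all hypothetical stand-ins, and a real pipeline would use embeddings and an LLM call instead.

```python
from __future__ import annotations

# Hypothetical knowledge base; a real system would query a vector store.
DOCS = [
    "Invoices are due within 30 days.",
    "Late invoices incur a 2 percent fee.",
    "Refunds are processed in 5 business days.",
]


def retrieve(query: str, docs: list[str], k: int = 1) -> list[str]:
    """Toy retriever: rank documents by the number of shared lowercase words."""
    words = set(query.lower().split())

    def overlap(doc: str) -> int:
        return len(words & set(doc.lower().rstrip(".").split()))

    return sorted(docs, key=overlap, reverse=True)[:k]


def run_steps(questions: list[str], docs: list[str]) -> list[dict]:
    """Run each step with its own retrieval and collect the results in order."""
    findings: list[dict] = []
    for question in questions:
        context = retrieve(question, docs)
        # A real step would send `question`, `context`, and the prior
        # `findings` to an LLM here and store its answer as well.
        findings.append({"question": question, "context": context})
    return findings


steps = run_steps(["when are invoices due", "how are refunds processed"], DOCS)
for step in steps:
    print(step["question"], "->", step["context"])
```

Because each step is just a function call with explicit inputs and outputs, the same chain can be rerun on new questions, which is the sense in which a one-off experiment becomes a repeatable workflow.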

The platform is offered as a cloud-native SaaS solution with advanced security features. It includes real-time FinOps tracking to monitor token usage and link spending to outcomes, with claimed cost reductions of up to 98%. Pricing starts with a free exploration tier and scales up to business plans priced between $99 and $129 per member per month, with usage billed through a pay-as-you-go TOKN credit system.

Zapier has established itself as a leader in the automation world, linking over 8,000 third-party applications through its cloud-based platform. Among these, nearly 500 AI-specific apps are part of its extensive ecosystem. With more than 350 million AI tasks completed and over 3.4 million companies relying on its services, Zapier executes AI workflows over 23 million times each month.

Zapier's strength lies in its ability to connect everyday tools like Gmail and Slack with advanced AI technologies. Key features include Zapier Agents (autonomous AI assistants), Zapier Chatbots (customer-facing bots), and Zapier Central, which simplifies AI-driven data management. Developers can leverage the Model Context Protocol (MCP) to access over 30,000 ready-to-use actions for AI models, enabling seamless connections between custom AI tools and Zapier's ecosystem. This flexibility makes the platform accessible to a wide range of users.

Zapier serves three main user groups:

In April 2025, Marcus Saito, Head of IT and AI Automation at Remote.com, implemented an AI-powered IT helpdesk using Zapier. This solution automatically resolved 27.5% of IT tickets, saving about 2,219 workdays per month and cutting $500,000 annually in hiring costs. Saito remarked: "Zapier makes our team of three feel like a team of ten."

These tailored features support everything from basic to highly complex automation needs.

Zapier accommodates a range of workflow complexities, from simple two-step "Zaps" to intricate multi-step processes. Features like Paths allow workflows to branch based on specific conditions, while Filters ensure actions only occur when certain criteria are met. Users can also add custom JavaScript or Python code steps and incorporate manual approval checkpoints via Slack for human oversight.
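As a rough illustration of how a filter, a code step, and a path branch fit together, the following Python sketch models that logic with plain functions. It does not call Zapier, and the field names (`amount`, `email`), the 1,000 threshold, and the branch labels are hypothetical.

```python
from __future__ import annotations

from typing import Any


def passes_filter(record: dict[str, Any]) -> bool:
    """Filter: continue only when the record has a positive amount."""
    return float(record.get("amount", 0)) > 0


def code_step(record: dict[str, Any]) -> dict[str, Any]:
    """Code step: a custom transformation, here normalizing the email field."""
    return {**record, "email": record.get("email", "").strip().lower()}


def choose_path(record: dict[str, Any]) -> str:
    """Paths: pick one branch; large amounts go to a manual approval branch."""
    return "needs_approval" if float(record["amount"]) >= 1000 else "auto_process"


def run_workflow(record: dict[str, Any]) -> dict[str, Any] | None:
    """Run filter -> code step -> path. Returns None when the filter stops the run."""
    if not passes_filter(record):
        return None
    cleaned = code_step(record)
    cleaned["path"] = choose_path(cleaned)
    return cleaned


print(run_workflow({"amount": "1500", "email": " Ada@Example.com "}))
```

In this sketch the `needs_approval` branch is where a human checkpoint, such as the Slack approval step described above, would sit.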

In April 2025, Vendasta used Zapier to build an AI-powered lead enrichment system, recovering $1 million in potential revenue and reclaiming 282 workdays annually. Marketing Operations Specialist Jacob Sirrs shared: "Because of automation, we've seen about a $1 million increase in potential revenue. Our reps can now focus purely on closing deals - not admin."

Zapier complements its powerful integrations with flexible hosting options designed to simplify and secure AI workflows. Operating as a cloud-native SaaS platform, Zapier handles infrastructure, authentication, and security. It also supports hybrid connectivity through webhooks or private apps for systems behind firewalls or on-premises. Pricing starts with a free plan for basic two-step workflows, with paid plans ranging from $19.99/month (Professional, billed annually) to $69/month (Team, billed annually). Custom Enterprise pricing is available for organizations requiring advanced security and governance features.

LangGraph is an open-source orchestration framework tailored for developers who need precise control over AI workflows. It supports over 1,000 integrations with AI models, tools, and data sources, and connects to 40+ model providers such as OpenAI, Anthropic, Google, and Azure. With over 35,000 GitHub stars and 90 million monthly downloads across the LangChain ecosystem, LangGraph was the most downloaded agent framework as of early 2026. It’s a go-to tool for handling complex workflows with precision.

LangGraph structures workflows as directed graphs, where nodes represent specific actions (like LLM calls or custom functions) and edges define transitions. While it integrates natively with the LangChain ecosystem, it also functions independently, offering flexibility. The framework introduced an open Agent Protocol, enabling communication between agents across platforms such as CrewAI and Microsoft Agent Framework via standardized APIs. This interoperability ensures developers can build systems without being tied to a single vendor, making LangGraph a versatile choice for development teams.
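To show what "nodes and edges" means in practice, here is a minimal plain-Python sketch of a directed workflow graph with one conditional edge that loops back. It deliberately avoids the LangGraph library so it runs without dependencies; `TinyGraph`, `draft`, and `review` are illustrative names, not LangGraph APIs.

```python
from __future__ import annotations

from typing import Callable

State = dict
Node = Callable[[State], State]
Router = Callable[[State], str]

END = "__end__"  # sentinel meaning "stop the run"


class TinyGraph:
    """A toy directed graph: nodes transform state, routers pick the next node."""

    def __init__(self) -> None:
        self.nodes: dict[str, Node] = {}
        self.routers: dict[str, Router] = {}

    def add_node(self, name: str, fn: Node) -> None:
        self.nodes[name] = fn

    def add_edge(self, src: str, dst: str) -> None:
        # A fixed edge is a router that always returns the same target.
        self.routers[src] = lambda _state: dst

    def add_conditional_edge(self, src: str, router: Router) -> None:
        self.routers[src] = router

    def run(self, entry: str, state: State, max_steps: int = 20) -> State:
        current, steps = entry, 0
        while current != END:
            if steps >= max_steps:  # guard against runaway cycles
                raise RuntimeError("max_steps exceeded")
            state = self.nodes[current](state)
            current = self.routers[current](state)
            steps += 1
        return state


def draft(state: State) -> State:
    return {**state, "attempts": state["attempts"] + 1}


def review(state: State) -> State:
    return {**state, "approved": state["attempts"] >= 2}


graph = TinyGraph()
graph.add_node("draft", draft)
graph.add_node("review", review)
graph.add_edge("draft", "review")
# Conditional edge: loop back to "draft" until the review approves.
graph.add_conditional_edge("review", lambda s: END if s["approved"] else "draft")

result = graph.run("draft", {"attempts": 0, "approved": False})
print(result)
```

The loop from `review` back to `draft` is the kind of cycle that a linear automation chain cannot express.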

LangGraph is built for developers and enterprise engineering teams coding in Python or JavaScript. Most developers become proficient with the framework in just 2–3 weeks. Though not intended for non-technical users, it includes LangGraph Studio, a visual interface that simplifies prototyping and allows for time-travel debugging.

"LangGraph sets the foundation for how we can build and scale AI workloads - from conversational agents, complex task automation, to custom LLM-backed experiences that 'just work'" - Garrett Spong, Principal Software Engineer

LangGraph excels in managing advanced stateful workflows featuring cycles (iterative loops), conditional branching, and parallel execution. Unlike linear automation chains, it maintains a persistent state schema that survives server restarts through durable execution. Developers can use databases like PostgreSQL or Redis for checkpointing, enabling workflows to pause for human approval and resume seamlessly. This makes it ideal for long-running tasks requiring human intervention.
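The pause-and-resume behaviour can be sketched without any framework. The example below is an assumption-laden illustration rather than LangGraph's checkpointer API: a JSON string stands in for a PostgreSQL or Redis row, and the step names and the `approved` flag are hypothetical.

```python
import json

STEPS = ["collect", "await_approval", "publish"]


def run_until_pause(state: dict) -> str:
    """Advance the workflow and return a serialized checkpoint.

    Execution stops at "await_approval" until state["approved"] is truthy.
    """
    while state["step"] < len(STEPS):
        name = STEPS[state["step"]]
        if name == "await_approval" and not state.get("approved"):
            break  # pause here; a human must approve before resuming
        state["log"].append(name)
        state["step"] += 1
    return json.dumps(state)  # stand-in for a database write


def resume(checkpoint: str, approved: bool) -> str:
    """Reload a checkpoint (for example after a restart), record the decision, continue."""
    state = json.loads(checkpoint)
    state["approved"] = approved
    return run_until_pause(state)


checkpoint = run_until_pause({"step": 0, "log": []})
finished = resume(checkpoint, approved=True)
print(checkpoint)
print(finished)
```

Because the whole state lives in the checkpoint, the process that resumes the run does not have to be the one that started it.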

To meet diverse deployment needs, LangGraph offers flexibility through cloud-native SaaS via LangGraph Platform, self-hosted servers for privacy-sensitive projects, and hybrid models combining a cloud control plane with a private VPC data plane. The core library is MIT-licensed and free, while paid plans start at $39 per seat per month for the Plus tier. Custom Enterprise pricing is available for large-scale deployments needing advanced governance.

CrewAI bridges the gap between developers and non-technical users with its hybrid orchestration platform. Supported by $18 million in funding, it powers an impressive 450 million agents monthly and has facilitated 1.4 billion automations. With over 60% of Fortune 500 companies onboard and 100,000 developers trained through DeepLearning.AI, its reach is substantial.

CrewAI offers 16 native OAuth integrations, including popular tools like Gmail, Slack, Salesforce, HubSpot, and Jira, alongside full compatibility with Google Workspace and Microsoft 365. For broader access, the platform connects to thousands of apps via the Zapier Actions Adapter and hundreds more through Merge and Composio, covering tools like Linear and GitHub. CrewAI’s model-agnostic design means it works with any LLM provider, from OpenAI and Anthropic to Google Gemini, Azure OpenAI, and Amazon Bedrock. Notably, the Amazon Bedrock integration alone unlocks 83 different LLM options for specialized tasks, making it adaptable to diverse organizational needs.

CrewAI caters to both technical and non-technical users. Developers can leverage a Python-based open-source CLI framework for local development, while non-technical users can create workflows using Crew Studio’s no-code visual interface. For enterprise clients, the platform offers managed Agent Operations with features like RBAC, SSO, and audit logging. Pricing starts at $0 for 50 executions monthly, with a Professional plan at $25/month (including 100 executions, $0.50 per additional execution), and custom Enterprise pricing that includes HIPAA/SOC2 compliance.
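The Professional plan's overage pricing is easy to estimate. The short sketch below uses only the figures quoted above ($25/month, 100 included executions, $0.50 per extra execution); the function name is hypothetical and actual billing may differ.

```python
def professional_monthly_cost(executions: int) -> float:
    """Estimate the monthly bill on the Professional plan, using the article's figures."""
    base_fee = 25.00        # USD per month
    included = 100          # executions covered by the base fee
    overage_rate = 0.50     # USD per additional execution
    extra = max(0, executions - included)
    return base_fee + extra * overage_rate


for n in (80, 100, 250):
    print(n, professional_monthly_cost(n))
```

At 250 executions, for example, the 150 extra runs add $75 to the $25 base fee, so heavy users should compare that against custom Enterprise pricing.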

CrewAI simplifies even the most intricate workflows with its advanced orchestration capabilities. Through Flows, users can manage state persistence and implement conditional logic using start, listen, and router steps. The platform supports three process models - sequential, hierarchical, and hybrid - allowing for conditional logic, state tracking, and human-in-the-loop triggers. Event-driven automations are also supported, with real-time triggers from tools like Gmail, Slack, Salesforce, and HubSpot.

"CrewAI mirrors how teams actually work. You've got manager agents that orchestrate workflows and hand off tasks, worker agents that do the actual execution, and researcher agents that dig up and synthesize information." - Jake Nulty, Software Developer & Writer

CrewAI provides flexible hosting solutions, including local CLI development, self-hosted open-source execution, and managed cloud hosting through CrewAI AMP. The cloud option offers production-grade infrastructure with observability integrations for tools like Datadog, Arize Phoenix, Langfuse, and Honeycomb.

Microsoft Agent Framework is designed to empower developers with tools for secure and enterprise-ready automation. As the open-source evolution of AutoGen and Semantic Kernel, it caters to .NET and Python developers, blending AutoGen’s simple agent abstractions with Semantic Kernel’s advanced features like session-based state management, type safety, and telemetry. It integrates seamlessly with Azure OpenAI, OpenAI, Anthropic, and Ollama, while offering access to over 1,400 connectors through Azure Logic Apps. These connectors enable integration with enterprise tools such as SharePoint and Microsoft Fabric, making the framework a versatile solution for modern AI workflows.

Using the Model Context Protocol (MCP), Microsoft Agent Framework securely links agents to custom APIs and third-party tools. When hosted in the Azure AI Foundry environment, it achieves a latency of under 100ms for direct API calls. This infrastructure benefits from Microsoft’s extensive security initiatives, supported by a team of 34,000 engineers. The framework also integrates with the broader Microsoft ecosystem, including Microsoft 365 and Azure Functions, ensuring compatibility with widely used enterprise tools.

This framework is designed for C# and Python developers who need precise control over multi-agent workflows and state management. It’s particularly well-suited for enterprise clients in regulated sectors, offering built-in checkpointing to support long-running processes. These features align with modern multi-agent orchestration needs, providing developers with the tools to manage complex execution paths effectively.

"Foundry Agent Service and Microsoft Agent Framework connect our agents to data and each other, and the governance and observability in Azure AI Foundry provide what KPMG firms need to be successful in a regulated industry." - Sebastian Stöckle, Global Head of Audit Innovation and AI, KPMG International

The framework’s graph-based architecture enables it to handle intricate workflows. It supports various execution patterns, including sequential, concurrent, hand-off, group chat, and plan-first ("Magentic") approaches. Built-in checkpointing ensures state preservation for long-running processes, facilitating quick recovery and seamless transitions during human-in-the-loop interactions.
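The difference between the sequential and concurrent patterns can be shown with nothing more than `asyncio`. This is a generic sketch, not the Agent Framework SDK: the `agent` coroutine is a hypothetical stand-in for a model-backed agent and simply tags the text it receives.

```python
from __future__ import annotations

import asyncio


async def agent(name: str, text: str) -> str:
    """Stand-in for an agent call; a real agent would invoke a model here."""
    await asyncio.sleep(0)  # yield control, as a network call would
    return f"{text}>{name}"


async def sequential(text: str, names: list[str]) -> str:
    """Sequential pattern: each agent receives the previous agent's output."""
    for name in names:
        text = await agent(name, text)
    return text


async def concurrent(text: str, names: list[str]) -> list[str]:
    """Concurrent pattern: every agent receives the same input, run in parallel."""
    return list(await asyncio.gather(*(agent(n, text) for n in names)))


print(asyncio.run(sequential("query", ["planner", "writer"])))
print(asyncio.run(concurrent("query", ["planner", "writer"])))
```

Hand-off and group chat patterns build on the same idea, with an agent or a manager deciding at run time who receives the message next.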

The SDK is free and open-source for local development, giving developers flexibility to experiment and build. For production, hosting options include self-managed setups (on-premises or Azure), embedding within custom applications, or managed hosting through the Foundry Agent Service in Azure AI Foundry. The cloud-based option uses a consumption-based pricing model, so costs track the specific models and tools used.

"The new Agent Framework simplifies coding, reduces efforts and fully supports MCP for agentic solutions … We are really looking forward to the productive usage of container‐based AI Foundry agents, which significantly reduces workload in IT operations." - Gerald Ertl, Managing Director, Commerzbank

n8n is a fair-code automation platform that combines visual workflow design with code-based control, offering flexibility and precision. With 1,375 native integrations and 176,000 GitHub stars, it has become a trusted choice for technical teams seeking efficiency and customization. The platform supports top AI models like OpenAI's GPT-4, DALL-E, and Whisper, as well as Google Gemini and Anthropic Claude, through its native nodes. It also features AI Agent capabilities for autonomous reasoning and agent-to-agent interactions.

n8n's extensive 1,375 native integrations cover a wide range of applications, from business tools to AI models. Developers can expand its functionality using the HTTP Request node or by importing cURL commands when a native node isn’t available. The platform supports both JavaScript and Python, including libraries like Pandas and NumPy, for advanced scripting within workflows. By integrating with the Model Context Protocol (MCP), it enables AI models to dynamically select tools based on user intent. A single instance of n8n can process up to 220 workflow executions per second, making it an excellent choice for high-demand environments. This flexibility and scalability make it well-suited for teams requiring tailored solutions.

n8n is designed for developers, technical teams, and enterprise architects who need robust customization and control over their data. For example, Delivery Hero’s IT department saved 200 hours monthly by automating a single ITOps workflow for user management. Dennis Zahrt, Director of Global IT Service Delivery at Delivery Hero, praised the platform for its balance of power and simplicity. Similarly, Luka Pilic, Marketplace Tech Lead at StepStone, highlighted how n8n enabled his team to complete two weeks' worth of API integration work in just two hours, achieving a 25x speed improvement.

n8n excels at managing both simple automations and complex multi-agent orchestrations. With built-in LangChain nodes, it enables sophisticated AI workflows, while features like branching, loops, and merging allow for intricate logic. The "Execute Workflow" node helps teams break down large processes into smaller, reusable components, simplifying debugging and maintenance. For workflows requiring human oversight, the Human-in-the-loop (HITL) Wait nodes pause automations for manual review before AI takes action. This approach ensures greater control and accountability in workflows involving autonomous agents.

n8n offers self-hosted, cloud, and hybrid deployment options. The Community edition is free for self-hosting via Docker or Kubernetes, which can reduce operational costs by 70-90% compared to per-task pricing models. Cloud plans start at $20/month (billed annually) for 2,500 workflow executions, while Enterprise plans begin at approximately $667/month, including features like SSO, SAML, and role-based access control. For businesses in regulated industries, n8n supports air-gapped environments, ensuring sensitive data stays within a company’s VPC. This setup helps with compliance under regulations such as GDPR and the EU AI Act, making it a secure choice for organizations with strict data privacy requirements.

Vellum AI stands out as a robust platform designed for creating AI agents that handle multi-step tasks with ease. With over 1,000 integrations covering tools like Salesforce, Slack, HubSpot, and Google Drive, it caters to both technical and non-technical teams. Supporting multiple AI models from providers such as OpenAI and Anthropic, it allows users to compare and switch between LLMs to assess performance. Recognized as a 5/5 star platform on the "Top 20 AI Agent Builder Platforms in 2026" list, it’s celebrated for enabling users to move quickly from concept to functional agents.

Vellum’s provider-agnostic architecture includes pre-built tools, customizable nodes, and SDKs for TypeScript and Python. With features like semantic routing, retrieval-augmented generation (RAG), and tool calling, it empowers teams to streamline AI workflows. Users can connect agents directly via APIs or SDKs, setting up triggers for tasks and app events. This flexibility addresses a major challenge - 46% of product teams in 2026 identified lack of integration with current tools as a top barrier to adopting AI.

Vellum serves a diverse range of users, from marketing and finance teams to developers and enterprise architects. Non-technical users can easily create agents using natural language in the "Prompt-first" Agent Builder, while engineers benefit from advanced customization through SDKs and exportable code.

Pratik Bhat, Senior Product Manager, AI Product, shared: "Vellum made it so much easier to quickly validate AI ideas and focus on the ones that matter most. The product team can build POCs with little to no assistance within a week!"

Max Bryan, VP of Technology and Design, remarked: "We accelerated our 9-month timeline by 2x and achieved bulletproof accuracy with our virtual assistant. Vellum has been instrumental in making our data actionable and reliable."

These endorsements highlight the platform’s ability to adapt to varied user needs while ensuring efficiency.

Vellum excels at managing workflows, from simple prompts to advanced, multi-step processes with conditional logic, loops, recursion, and parallel execution. Its "Control Flow" execution layer ensures precise order of operations, supporting complex architectures like map/reduce on LLM outputs. Built-in error handling with retries and fallbacks further enhances reliability. The platform’s evaluation suites allow teams to run regression tests before deploying updates, a safeguard aimed at the reported 95% of generative AI pilots that fail to reach production, often for lack of testing.
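Retries with fallbacks follow a simple shape that is worth seeing once. The sketch below is generic Python rather than Vellum's SDK: each "provider" is just a hypothetical callable that takes a prompt and returns text, and the retry count is an arbitrary choice.

```python
from __future__ import annotations

from typing import Callable, Sequence


def call_with_fallback(
    providers: Sequence[Callable[[str], str]],
    prompt: str,
    retries: int = 2,
) -> str:
    """Try each provider in order, attempting each one up to `retries` times."""
    last_error: Exception | None = None
    for provider in providers:
        for _ in range(retries):
            try:
                return provider(prompt)
            except Exception as exc:  # a real system would catch narrower errors
                last_error = exc
    raise RuntimeError("all providers failed") from last_error


def flaky_model(prompt: str) -> str:
    raise TimeoutError("primary model unavailable")


def backup_model(prompt: str) -> str:
    return "backup answer to: " + prompt


print(call_with_fallback([flaky_model, backup_model], "summarize the report"))
```

Production setups usually add a delay between attempts and log each failure, but the ordering logic stays the same.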

To meet diverse security and data residency needs, Vellum offers cloud-native, Virtual Private Cloud (VPC), and on-premise deployment options. A free tier is available for testing and small projects, while paid plans start at $25/month. Enterprise plans, with custom pricing, include advanced governance features such as Role-Based Access Control (RBAC), SSO, audit logs, and environment separation for development, staging, and production workflows. These plans also meet compliance standards for SOC 2, GDPR, and HIPAA.

Make has become a go-to solution for automation, serving over 350,000 customers as of early 2026. The platform offers a visually intuitive interface with 3,000+ pre-built app integrations and 400+ AI-specific app integrations. It supports major AI models like OpenAI (ChatGPT, Sora, DALL-E, Whisper), Anthropic Claude, Google Vertex AI (Gemini), Perplexity AI, DeepSeek AI, Mistral AI, and Hugging Face. With its HTTP app, users can connect to any service with a public API, making it easy to integrate even niche or proprietary systems without pre-built connectors. Additionally, Make supports the Model Context Protocol (MCP), offering a cloud-hosted MCP server and client to streamline connections between internal and external services.

Make's visual canvas and Make Grid enable users to design and monitor AI-driven workflows in real time. These tools are particularly useful for managing complex decision-making tasks. For example, in November 2025, Celonis used Make AI Agents to slash their annual expense auditing costs from $50,000 to just $150. Similarly, in September 2025, Make AI Agents helped reduce farmers' invoicing time from 15 minutes to just 20 seconds. The platform also features Maia, an AI workflow builder capable of creating a 15-module process from a single natural language prompt.

Make is designed to accommodate users across a wide range of technical expertise. Non-technical users benefit from its drag-and-drop interface and Maia AI assistant, which can create and troubleshoot workflows using simple natural language commands. Developers, on the other hand, have access to advanced tools like custom API connectors and MCP support. For enterprises, Make provides features like SOC 2 Type II compliance, GDPR adherence, SSO, and RBAC. The platform has earned high ratings on review sites: 4.8/5 on Capterra (404 reviews), 4.7/5 on G2 (238 reviews), and 4.6/5 on Gartner (20 reviews).

"Make really helped us to scale our operations, take the friction out of our processes, reduce costs, and relieved our support team." - Philipp Weidenbach, Head of Operations, Teleclinic

Make excels at handling advanced workflows. It includes tools like routers for conditional branching and iterators for processing data arrays, along with multi-step processes, data transformations, and error handlers that let workflows recover from API failures without manual input. In December 2024, GoJob used Make and AI integrations to achieve a 50% boost in yearly net revenue. Pricing starts with a free plan offering 1,000 credits per month, while the Core plan begins at $9/month (billed annually) for 10,000 credits.

As a cloud-native platform, Make manages infrastructure, scaling, and security updates automatically. This removes the need for server maintenance, while features like the Analytics Dashboard allow users to track workflow performance and resource usage over time. Enterprise plans offer custom pricing, enhanced governance tools, and always-on support for businesses needing tailored solutions.

Amazon Bedrock is a fully managed, serverless AI application platform serving over 100,000 organizations. It offers unified API access to hundreds of foundation models from providers such as Anthropic, Meta, Mistral AI, and Amazon itself. Additionally, the Bedrock Marketplace provides access to more than 100 models. With OpenAI API-compatible endpoints, developers can migrate from the OpenAI SDK by simply updating the base URL. The platform also features the AgentCore Gateway, enabling one-click integration with enterprise tools like Salesforce, Slack, Jira, Asana, and Zendesk by converting APIs and Lambda functions into Model Context Protocol (MCP)-compatible tools.
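The base-URL migration mentioned above might look roughly like the sketch below. Treat it as an assumption-heavy illustration: the URL pattern, the helper name `openai_compatible_settings`, and the placeholder API key are not taken from this article, so check the exact endpoint and authentication method for your region in the AWS documentation before relying on it.

```python
def openai_compatible_settings(region: str, api_key: str) -> dict:
    """Build constructor arguments for an OpenAI-style client pointed at Bedrock.

    ASSUMPTION: the URL pattern below is illustrative; confirm the exact
    endpoint for your region in the AWS documentation.
    """
    return {
        "base_url": f"https://bedrock-runtime.{region}.amazonaws.com/openai/v1",
        "api_key": api_key,
    }


settings = openai_compatible_settings("us-west-2", "YOUR_BEDROCK_API_KEY")
print(settings["base_url"])

# With the `openai` package installed, the only change from a default setup
# would be passing these settings to the client constructor:
#     from openai import OpenAI
#     client = OpenAI(**settings)
```

Keeping the endpoint in one small function makes it easy to switch regions or revert to the default OpenAI endpoint during a migration.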

Amazon Bedrock takes a framework-agnostic approach, supporting popular open-source agent frameworks like LangGraph, CrewAI, LlamaIndex, and Strands Agents through its AgentCore Runtime. Communication is facilitated by Model Context Protocol (MCP) and Agent-to-Agent (A2A) protocols, supporting workloads up to 8 hours and multi-modal payloads up to 100 MB. The platform’s Bedrock Guardrails can block up to 88% of harmful content and achieve 99% accuracy in identifying correct model responses. Distilled models operate 5X faster at 75% lower cost, while Intelligent Prompt Routing reduces expenses by 30%. These features create a flexible and efficient environment for users of varying expertise.

Amazon Bedrock is designed to address the needs of three main user groups:

In 2024, Robinhood demonstrated the platform’s potential by scaling token usage 10X in just six months, reducing AI costs by 80% and cutting development time in half.

"Amazon Bedrock's model diversity, security, and compliance features are purpose-built for regulated industries." - Dev Tagare, Head of AI, Robinhood

These capabilities highlight Amazon Bedrock’s ability to serve a wide range of users, from individuals without technical expertise to large enterprises.

Amazon Bedrock excels in managing both straightforward tasks and complex workflows. Using Tree-of-thought (ToT) and Chain-of-thought (CoT) prompting, the platform supports advanced multi-step orchestrations. Bedrock Flows enables users to visually map out intricate, conditional business logic, while Bedrock Agents autonomously break down tasks and call APIs to complete them. Multi-agent collaboration allows specialized agents to work together under a supervisor agent for handling sophisticated workflows. For instance, Dentsu Creative Brazil used Bedrock Flows to automate book conversions into accessible formats for readers with learning disabilities. According to Thiago Winkler, Executive Director of Operations, the visual interface saved significant manual effort.

As a cloud-native, serverless platform, Amazon Bedrock removes the need for infrastructure management. Users are billed only for resources consumed, with pricing that pauses during I/O wait times. The platform handles scaling and security updates automatically, while AgentCore Runtime ensures secure multi-tenancy through microVM isolation. Native integration with AWS services like Lambda, S3, and DynamoDB simplifies development for teams already operating within the AWS ecosystem.

Workato is an enterprise-grade platform designed to integrate and automate workflows across various departments, offering seamless orchestration for complex processes. It features over 1,200 pre-built connectors compatible with SaaS applications, on-premises systems, databases, and ERP platforms like Salesforce, SAP, and NetSuite. For AI integration, Workato collaborates with leading providers, including OpenAI, Google Gemini, Amazon Bedrock, Azure OpenAI, Anthropic, Mistral AI, DeepSeek, Perplexity, and Ollama. Its Enterprise MCP (Model Context Protocol) adds a layer of enterprise-specific context, allowing AI agents to perform secure, reliable business actions with transactional accuracy. This capability enhances customization and flexibility for future developments.

In addition to pre-built connectors, Workato offers broad integration options through HTTP, SDKs, and support for protocols like EDI, GraphQL, and gRPC. Its Agent Studio provides a visual, low-code tool for creating AI agents, known as "Genies", that can execute workflows and tasks. In Winter 2026, Workato achieved 45 #1 rankings across G2 categories, including iPaaS, Process Orchestration, and Embedded Integration Platforms. The platform was also named a Leader in the Gartner® Magic Quadrant™ for iPaaS for the 7th year in a row. Over the past year, the use of generative AI within automated processes surged by 500%.

Workato caters to both IT professionals and "citizen developers" from business teams, with IT building 56% of automations and business users creating the remaining 44%. This dual focus allows for collaboration across departments, supported by its low-code/no-code design and AIRO, an AI copilot that enables workflow creation through natural language prompts. For enterprises, Workato ensures compliance with SOC 2 Type II standards, offers Role-Based Access Control (RBAC), and provides governance tools for monitoring automation and AI activities.

"One of the best people on my team was stocking grocery stores a few years ago, now she's building automations with our VP of sales." - Carter Busse, CIO at Workato

Workato demonstrates its strength in handling intricate workflows, with 61% of automated processes classified as complex. The platform supports features like conditional logic (IF/ELSE), loops, parallel execution, and Recipe Functions that allow workflows to call and reuse other workflows. Built-in error handling ensures workflows can detect issues, retry actions, or follow alternate paths. It also supports human-in-the-loop processes, with 11% of automations involving manual approvals or exception handling. Over 50% of companies using Workato have deployed automations across four or more departments, showcasing its scalability.

Workato operates as a cloud-native iPaaS, automatically managing infrastructure and scaling resources as needed. For businesses with hybrid requirements, the platform offers on-premises agents to securely access local files, databases, and scripts without exposing internal systems to the internet. All data, including job histories, is encrypted at rest using AES-256 with double encryption. Workato complies with stringent standards like PCI-DSS v4.0.1 Level 1, SOC 2 Type II, and HIPAA, making it a reliable choice for industries with strict security and regulatory needs.

When selecting an AI integration tool, it's crucial to consider your team's expertise, the complexity of your workflows, and specific hosting needs. The table below outlines the strengths of various platforms, helping you align your project requirements with the most suitable option.

| Tool | Integration Capabilities | Target Users | Workflow Complexity | Hosting Options | Pricing Model |
|---|---|---|---|---|---|
| Prompts.ai | 35+ LLMs (GPT-5, Claude, LLaMA, Gemini, Flux Pro, Kling); unified interface with FinOps cost controls | Enterprises, creative agencies, research labs | Low to High (supports varied prompt workflows and side-by-side comparisons) | Cloud-based | Pay-As-You-Go TOKN credits; Plans from $0–$129/member/month |
| Zapier | 8,000+ app connectors; no-code environment | Citizen Automators, small businesses | Low (simple automations) | Cloud (SaaS) | Activity-based; Free plan available, Professional $19.99/month, Team $69/month |
| LangGraph | Graph-based execution (nodes/edges) with an open Agent Protocol for cross-framework communication | Python/JavaScript developers | High (supports cycles, conditional branching, and parallel execution) | Hybrid (Cloud/VPC) | Pay-per-node |
| CrewAI | Role-based collaboration with sequential, hierarchical, and consensual processes | Rapid prototypers | Low to Moderate (approximately a 1-week learning curve) | Cloud/Local | Consumption-based |
| Microsoft Agent Framework | Asynchronous, event-driven architecture with Azure-native deployment | Azure-native enterprises | Moderate (1–2 week learning curve) | Azure-native | Custom enterprise pricing |
| n8n | 1,000+ integrations; native LangChain support; LangSmith debugging | Technical power users, IT Ops | Moderate (handles complex loops with durable execution) | Self-hosted/Cloud | Execution-based |
| Make | "Maia" conversational AI (2026) that generates multi-module workflow graphs from natural language prompts | SMBs, power users | Low to Moderate | Cloud (SaaS) | Usage-based |
| Amazon Bedrock | 83 LLM options; AWS-native orchestration | AWS developers | High | Cloud (AWS) | Custom, usage-based |
| Workato | 1,200+ connectors; Enterprise MCP; Agent Studio for low-code AI agents | IT professionals, citizen developers | High (with many customers deploying complex workflows across multiple departments) | Cloud-native with on-premises agents | Enterprise pricing |

Key Takeaways:

"The killer feature for 2026 is observability. An orchestration platform is useless without a 'Debugger for AI Thoughts.'" – Digital Applied

For privacy-conscious teams, n8n's self-hosted option ensures data remains on-premises. When it comes to durable execution, Temporal is the go-to standard for managing state persistence at scale. Tools like LangGraph Studio and n8n's LangSmith integration enhance debugging capabilities, offering time-travel debugging and AI thought tracing - essential features for production environments.

Selecting the right AI integration tool in 2026 boils down to your team's expertise, the complexity of your projects, and the level of control you need. For non-technical teams managing straightforward automations, tools like Zapier or Make are ideal, offering natural language interfaces that eliminate the need for coding. Technical teams handling intricate AI workflows should consider platforms like n8n or LangGraph, which provide the granular control required for production-level tasks; n8n also offers execution-based pricing.

Budget considerations are equally important. Activity-based pricing can lead to unpredictable costs with frequent iterations, while execution-based models offer more stability. Enterprises already entrenched in cloud ecosystems might lean toward Microsoft Agent Framework or Amazon Bedrock for seamless integration with Azure or AWS. However, these options often come with steeper learning curves and custom pricing structures.
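
A small worked example shows why the two pricing models behave differently as a workflow grows. This is only a sketch: the rates below (2 cents per task, 10 cents per execution) and the run volume are hypothetical numbers chosen for illustration, not any vendor's published prices. It assumes activity-based billing charges for each step a run performs, while execution-based billing charges once per run.

```python
def activity_based_cost_cents(runs: int, steps_per_run: int, cents_per_task: int) -> int:
    """Every step in every run is billed as a separate task."""
    return runs * steps_per_run * cents_per_task


def execution_based_cost_cents(runs: int, cents_per_execution: int) -> int:
    """Each run is billed once, regardless of how many steps it contains."""
    return runs * cents_per_execution


RUNS_PER_MONTH = 10_000      # hypothetical volume
CENTS_PER_TASK = 2           # hypothetical activity-based rate
CENTS_PER_EXECUTION = 10     # hypothetical execution-based rate

# As the team iterates and the workflow gains steps, only one bill changes.
for steps in (5, 8, 12):
    activity = activity_based_cost_cents(RUNS_PER_MONTH, steps, CENTS_PER_TASK)
    execution = execution_based_cost_cents(RUNS_PER_MONTH, CENTS_PER_EXECUTION)
    print(f"{steps:>2} steps: activity-based ${activity / 100:,.2f}, "
          f"execution-based ${execution / 100:,.2f}")
```

Under these assumed rates the two models cost the same at 5 steps per run, but the activity-based bill rises with every added step while the execution-based bill stays flat.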

Operational visibility is another critical factor.

"The platform you choose today may not be the one you use in two years... build abstraction layers that let you swap frameworks." – Digital Applied

Features like integrated debugging tools, including time-travel and thought tracing, are essential for scaling prototypes into production. For workflows requiring human oversight or persistent states, robust execution capabilities are a must.

Ultimately, the tool you choose should align with your team's skill set and scale alongside your AI operations. With the AI orchestration market valued at $11.47 billion in 2025 and growing at 23% annually, building abstraction layers now can help avoid costly overhauls as needs evolve or superior tools become available. Seamless orchestration of interoperable AI workflows is the cornerstone of long-term production success.
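
One way to picture an abstraction layer is a thin interface that business logic depends on, with one adapter per framework behind it. The sketch below is a minimal illustration under stated assumptions: `Orchestrator`, `FrameworkAAdapter`, `FrameworkBAdapter`, and `summarize_ticket` are hypothetical names, and the adapters return canned data rather than calling any real SDK.

```python
from typing import Any, Dict, Protocol


class Orchestrator(Protocol):
    """The only surface the rest of the codebase is allowed to depend on."""

    def run(self, workflow: str, payload: Dict[str, Any]) -> Dict[str, Any]: ...


class FrameworkAAdapter:
    """Stand-in for a wrapper around one framework's SDK (hypothetical)."""

    def run(self, workflow: str, payload: Dict[str, Any]) -> Dict[str, Any]:
        return {"engine": "framework-a", "workflow": workflow, "input": payload}


class FrameworkBAdapter:
    """Stand-in for a wrapper around a different framework (hypothetical)."""

    def run(self, workflow: str, payload: Dict[str, Any]) -> Dict[str, Any]:
        return {"engine": "framework-b", "workflow": workflow, "input": payload}


def summarize_ticket(engine: Orchestrator, ticket_text: str) -> Dict[str, Any]:
    """Business logic: knows the workflow name, not the framework behind it."""
    return engine.run("summarize_ticket", {"text": ticket_text})


# Swapping frameworks means changing one constructor call, not the callers.
result = summarize_ticket(FrameworkAAdapter(), "Customer cannot log in")
result_after_migration = summarize_ticket(FrameworkBAdapter(), "Customer cannot log in")
```

Because `summarize_ticket` only sees the `Orchestrator` interface, a migration is confined to writing a new adapter.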

To select an AI integration tool that fits your needs, focus on critical aspects such as compatibility, user-friendliness, cost, security, and integration capabilities. Assess the specific requirements of your team, their technical expertise, and your budget constraints. Prioritize platforms that offer centralized access to models, automation features, and compliance support. Solutions like Prompts.ai stand out by delivering cost savings, scalability, and robust governance controls, making them ideal for managing varied workflows and ensuring secure operations.

The main distinction between simple automation and orchestration lies in scope and complexity. Simple automation handles repetitive tasks guided by fixed rules, such as moving data or triggering specific actions. It is effective, but it is confined to predefined processes and lacks flexibility.

Orchestration goes far beyond this by managing intricate workflows. It brings together multiple systems, AI models, and datasets, adjusting dynamically to changing needs. With added capabilities like compliance tracking, orchestration becomes a crucial tool for running advanced AI ecosystems efficiently.

To keep AI workflow costs under control as usage scales, consider platforms like Prompts.ai, which offer real-time cost tracking, pay-as-you-go pricing, and intelligent task routing to help you avoid surprise expenses. AI gateways with caching, routing, and budget controls can lower costs further while keeping spending predictable.
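
To make the gateway idea concrete, here is a minimal sketch of two of those features, response caching and a hard budget cap. It does not reflect how Prompts.ai or any particular gateway product is implemented. `Gateway`, `BudgetExceeded`, and the flat per-call cost are assumptions made for the example, and routing is left out to keep it short.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


class BudgetExceeded(RuntimeError):
    """Raised when a call would push spending past the configured cap."""


@dataclass
class Gateway:
    call_model: Callable[[str], str]  # any function that sends a prompt to a model
    cost_per_call_cents: int          # hypothetical flat cost per uncached call
    budget_cents: int                 # hard spending cap
    spent_cents: int = 0
    _cache: Dict[str, str] = field(default_factory=dict)

    def complete(self, prompt: str) -> str:
        # Cache hit: repeated prompts cost nothing.
        if prompt in self._cache:
            return self._cache[prompt]
        # Budget control: refuse the call instead of overspending.
        if self.spent_cents + self.cost_per_call_cents > self.budget_cents:
            raise BudgetExceeded(
                f"budget of {self.budget_cents} cents would be exceeded"
            )
        response = self.call_model(prompt)
        self.spent_cents += self.cost_per_call_cents
        self._cache[prompt] = response
        return response


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call so the sketch runs offline."""
    return prompt.upper()
```

With a 10-cent budget and a 5-cent call cost, two distinct prompts exhaust the budget, repeats of those prompts are served from the cache for free, and a third distinct prompt raises `BudgetExceeded`.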