Managing AI costs in 2026 is more complex than ever, with hidden token usage, premium add-ons, and hybrid pricing models driving up expenses. Businesses often underestimate these costs by 40–60%, but with the right strategies, you can cut spending without sacrificing performance.

Here’s how you can take control:

These methods ensure you only pay for what you need while maintaining efficiency. Let’s dive into the details.

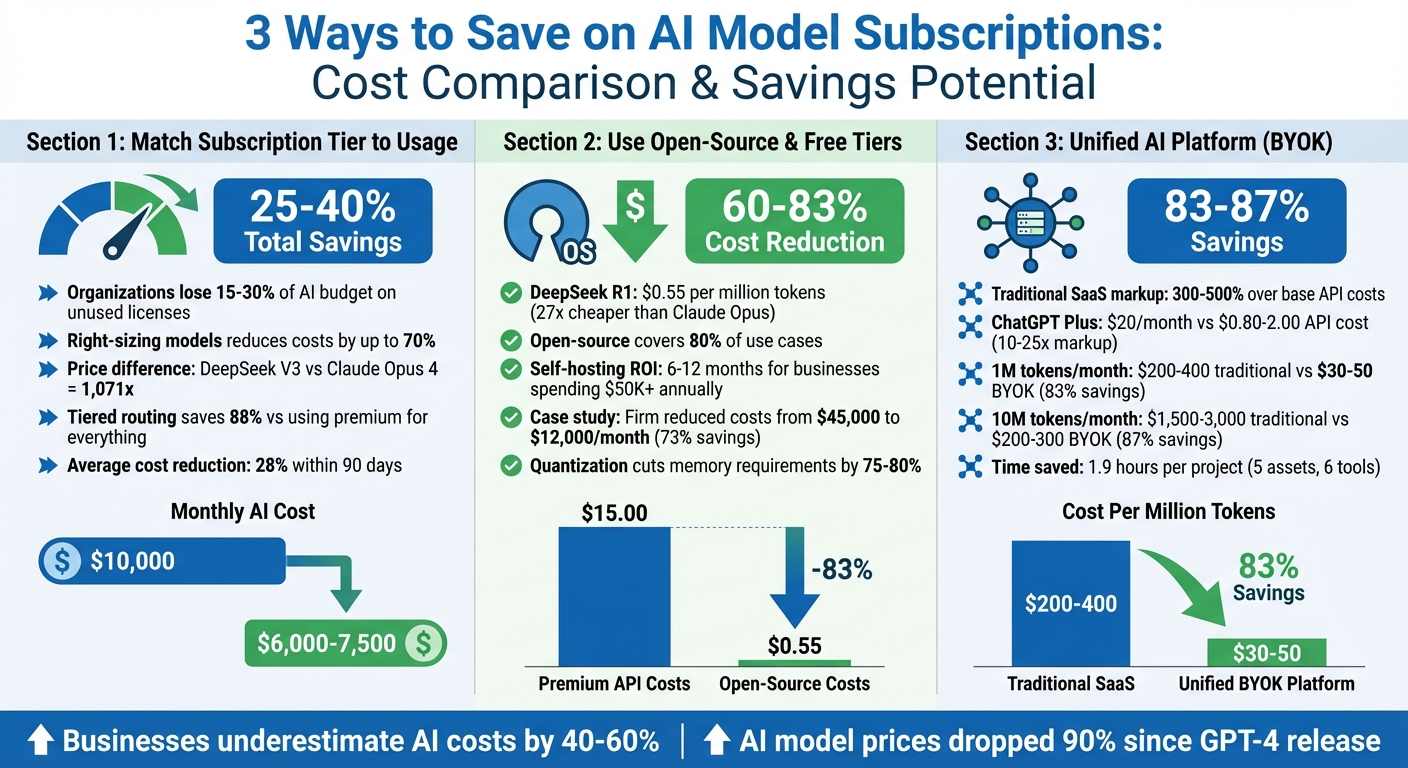

AI Model Cost Comparison and Savings Potential by Strategy

Getting control of AI costs starts with aligning your subscription tier to actual usage. Many organizations lose 15–30% of their AI budget on unused licenses. A quick 90-day utilization audit often uncovers the root of the problem: inactive accounts and unnecessary use of premium models for simple tasks.

The potential savings are hard to ignore. Right-sizing your models can reduce costs by as much as 70% for most workloads. The price difference between the most affordable frontier-class model (DeepSeek V3) and the priciest option (Claude Opus 4) is a staggering 1,071x. Even more impactful, a tiered routing system - where basic tasks like classification or formatting use budget models, while premium models handle complex reasoning - can slash costs by 88% compared to using premium models for everything.

Organizations that conduct audits typically see a 28% cost reduction within 90 days, and a well-executed 6–12 month optimization plan can yield 25–40% total savings. For instance, a $10,000 monthly AI spend could drop by $2,500–$4,000.

Start by exporting login data and identifying users with fewer than five logins per quarter. Then, categorize tasks into three tiers: Low (e.g., classification), Medium (e.g., summarization), and High (e.g., complex reasoning). Assign each task to the most cost-effective model tier. Today’s budget models rival the performance of 2024’s frontier-class models but at a fraction of the cost.

Major APIs include a usage object in their responses, making it easy to track token consumption. Use this data to identify heavy users and evaluate whether they genuinely need premium access or could shift to a lower tier without losing efficiency.

Precise audits lay the foundation for scalable cost management. As your team expands, usage patterns will shift. What works for a small team of ten won’t necessarily scale to a team of a hundred. Automated routing systems can adapt by classifying request complexity in real time, ensuring tasks are assigned to the most cost-efficient models. This "escalation pattern" starts with the cheapest tier and upgrades only when required, keeping costs under control as workloads increase.

The landscape is evolving rapidly: AI model prices have dropped 90% since GPT-4's release, adjusted for performance. Aligning your subscription tier with actual usage not only cuts costs now but also positions you to take advantage of future price reductions. Avoid falling into the "subscription sprawl" trap, where redundant tools with overlapping features quietly drain over $100 per month. Real-time task classification and an interoperable AI workflow ensure your system scales efficiently with your organization’s growth.

Open-source models and free-tier options can slash costs by as much as 86% compared to premium subscriptions. For instance, DeepSeek R1 offers GPT-4-level reasoning for just $0.55 per million input tokens - making it 27 times cheaper than Claude Opus. Additionally, free API providers like Google AI Studio, Groq, and GitHub Models provide no-cost programmatic access, making them excellent choices for development, testing, and even light production workloads. These savings become apparent when comparing the expenses of premium APIs against free or self-hosted alternatives.

Open-source LLMs can cover 80% of proprietary model use cases at a fraction of the cost. By adopting a hybrid routing approach - where simpler queries are directed to free or low-cost models, and only complex tasks are escalated to premium APIs - organizations can reduce expenses by 60–83%. For businesses processing over 2 million tokens daily or spending more than $50,000 annually on APIs, self-hosting open-source models can pay off within 6–12 months. A notable example is a Kenyan cooperative that, in February 2026, developed a crop disease detection tool using only free resources. This tool achieved 86% accuracy, reduced crop loss by 37%, and incurred zero cloud expenses.

Free API tiers like Google AI Studio (up to 1,000 requests daily) and tools such as Ollama make it easier to run models locally, significantly lowering per-token costs during testing. Groq offers free access to models like Llama 4 Scout and Qwen 3, with rate limits ranging from 30 to 60 requests per minute. Using quantization techniques can also cut memory requirements by 75–80%, allowing a 70B parameter model to run on a single consumer-grade GPU, such as the RTX 4090, instead of requiring multiple enterprise-grade GPUs.

"Free tiers aren't for toy projects - they're for validated learning loops. If your model can't prove value on 10% of your data using free resources, scaling it won't fix the underlying problem." - Dr. Lena Park, AI Infrastructure Lead, Mozilla Foundation

Open-source models are now outperforming proprietary benchmarks in some cases. For example, Llama 4 Maverick achieves an 80.5% score on MMLU-Pro, surpassing GPT-4o's 73.3%. A mid-sized financial firm demonstrated the potential of self-hosting when it transitioned from GPT-4 to a self-hosted Llama 3.3 70B model in early 2026. By deploying four NVIDIA A100 GPUs over 12 weeks, the firm reduced its monthly costs from $45,000 to $12,000 - a 73% savings - while maintaining 92% accuracy and achieving full FINRA compliance. As usage scales, the economics become even more favorable. For example, self-hosting a 13B+ parameter model breaks even at just 10% GPU utilization compared to GPT-4 Turbo API costs. Furthermore, over 60% of major model releases in 2026 adopted Mixture-of-Experts architecture, cutting active parameters and inference costs by up to 70%.

AI SaaS tools often come with steep markups, typically ranging from 300–500% over base API costs. For instance, ChatGPT Plus at $20/month can cost 10–25 times more than direct API usage, which is about $0.80–$2.00. Similarly, Gemini Advanced's $20 monthly fee reflects a 20–40x markup compared to its API cost of around $0.50–$1.00.

By adopting a Bring Your Own Key (BYOK) approach, unified platforms eliminate these third-party fees. For users consuming 1 million tokens per month, traditional tools might cost $200–$400, while a BYOK platform reduces this to around $30–$50 - an 83% savings. The cost advantage becomes even more pronounced at higher volumes. For example, processing 10 million tokens with traditional tools could run $1,500–$3,000, whereas a unified platform achieves the same for approximately $200–$300, delivering 87% savings. These dramatic reductions make transitioning to unified platforms a practical and cost-effective choice.

Switching to a unified platform is straightforward and doesn't require technical expertise. Start by auditing your current AI expenses to identify where markups are highest. Then, set up API keys directly with providers like OpenAI or Anthropic through their dashboards. These dashboards also allow you to set monthly spending caps, preventing unexpected charges.

Unified platforms simplify operations by offering a single interface to manage multiple models, eliminating the need to juggle various APIs, logins, or tools. Instead of navigating Discord bots, web apps, and desktop software, you can access models such as GPT-4o, Claude 3.5 Sonnet, and Gemini 1.5 Flash all in one place. For a typical project involving five assets and six tools, this consolidation can save roughly 1.9 hours previously lost to context switching. Beyond these immediate benefits, unified platforms also position you for long-term scalability.

Unified platforms ensure immediate access to new models as soon as APIs are released, while traditional SaaS tools often take months to integrate them. By 2026, creators will rely on a variety of specialized models to remain competitive in areas like video, image, and audio generation. The focus has shifted from mastering a single tool to orchestrating access to the right model for each task - whether it’s speed for client pitches, quality for high-end projects, or cost efficiency for social media content.

"Professional creators in 2026 don't need one perfect tool. They need orchestrated access to specialized models - just like cinematographers don't use one lens for every shot." - Cliprise

This unified strategy not only adapts to evolving usage needs but also keeps workflows agile and cost-effective. When you consider savings from direct subscriptions, reduced time spent switching between tools, and lower management overhead, unified platforms can cut overall workflow costs by as much as 98.8% compared to maintaining separate premium accounts for various tools.

Cutting AI costs goes beyond aligning service tiers - there are several actionable strategies that can make a big difference. One of the most effective yet often overlooked techniques is prompt caching. If you frequently send the same system instructions or reference documents, caching allows previously computed data to be reused, bypassing the need for reprocessing. This can lead to savings of 50%–90%, depending on your provider. For example, Anthropic and Google offer 90% discounts on cached content, while OpenAI provides a 50% discount. To make the most of this, structure your prompts so that static content appears at the beginning, while dynamic queries are placed at the end, increasing the likelihood of cache hits.

Another smart approach is task-based model selection. Research shows that the majority of AI tasks - around 80% - don’t require expensive, high-end models. For example, straightforward tasks like email classification or data extraction can be handled by a lightweight model such as Gemini 2.0 Flash, which costs just $0.10 per million input tokens. Compare that to Claude Opus 4, which charges $15.00 per million tokens - a staggering 150× price difference for tasks that don’t demand advanced capabilities. A good rule of thumb is the "10× rule": only use premium models if the cost of correcting an error would be ten times higher than using a cheaper alternative. Pairing this with careful management of token overhead can further drive down costs.

Hidden token overhead is another area where expenses can creep up unexpectedly. Things like tool definitions, lengthy conversation histories, or repeated system prompts can add up quickly. To address this, you can summarize older conversation threads instead of resending entire transcripts and review your prompt templates regularly to eliminate redundant instructions.

For tasks that don’t require immediate results, batch processing is a great way to save even more. OpenAI’s Batch API, for instance, offers a 50% discount on standard rates if you can wait a few hours for the results. This is perfect for overnight analytics, bulk content generation, or other non-urgent tasks. When combined with caching and smart model selection, batch processing can significantly lower your overall costs without sacrificing quality.

Lastly, it’s crucial to track spending by project rather than just by provider. Monitoring total bills from providers like OpenAI or Anthropic won’t give you the granular insights needed to identify inefficiencies. Instead, break down usage by workspace, feature, or endpoint to pinpoint high-consumption workflows. Setting budget alerts at 50%, 80%, and 100% thresholds can help you stay ahead of overages and make adjustments in real time.

"Cost optimization is an operations discipline, not a one-time config change." - AI operations expert at AI Costboard

These tactical methods, when combined with broader strategies, lay a solid foundation for better cost tracking and improved ROI analysis.

Many businesses underestimate their AI expenses by as much as 40–60%, often focusing solely on monthly invoices. The reality is that costs include token-based usage fees, hidden overhead, and the engineering time required for building and maintaining integrations. This oversight underscores the importance of accurately tracking ROI. It's worth noting that output tokens are three to ten times more expensive than input tokens, and hidden charges - like repeated system prompts or extra reasoning tokens - can add hundreds of dollars to monthly costs.

A better approach is to measure cost per successful task rather than total spending. For example, if you're automating customer support, calculate the cost per resolved query. Dive into API usage data to account for all token consumption. A single 2,000-token system prompt sent 1,000 times daily could cost up to $900 per month on premium models like Claude Opus 4, making it essential to audit recurring inputs.

Don't forget to include setup and operational expenses beyond subscription fees. Engineering work for integration and prompt optimization can surpass infrastructure costs, sometimes exceeding $500,000 annually. Additionally, retries caused by model failures and token inefficiency - where some models consume more tokens for the same output - can inflate costs. For workloads exceeding 200 million tokens per day, compare cloud API fees with the costs of self-hosting, factoring in hardware expenses (e.g., NVIDIA H100s), power, and cooling.

Apply the standard ROI formula: (Total Savings + Added Revenue – Total Costs) ÷ Total Costs × 100. This calculation works hand in hand with cost-control strategies. To track deflected interactions, multiply the number of AI-handled tasks by the average cost of a human-handled task. For instance, nib Group’s virtual assistant "nibby" cut human support by 60%, saving over $22 million. Similarly, Corewell Health used predictive analytics over 20 months to prevent 200 hospital readmissions, saving $5 million by reducing avoidable recovery costs.

"The difference between a $500/month AI bill and a $50/month AI bill usually isn't using less AI. It's using the right AI for each task and not paying for tokens you didn't need to send." - InsiderLLM

To put these insights into practice, start with a two-week sprint to establish a baseline cost per task. Implement cost-saving measures and monitor improvements closely. Set up budget alerts to make real-time adjustments and prevent costs from spiraling. On average, AI investments have a payback period of about 1.2 years (roughly 14 months), but only when you're measuring actual results instead of relying on vendor estimates.

The three strategies outlined here - aligning subscription tiers with actual usage, utilizing open-source or free-tier options, and consolidating tools through unified platforms - offer practical ways to manage AI costs without compromising performance. By carefully selecting models, you can avoid overspending on premium options for simple tasks, while open-source solutions now handle roughly 80% of enterprise needs at a fraction of the cost of proprietary models. Additionally, unified routing systems ensure tasks are assigned to the most cost-effective models, minimizing token waste and improving efficiency.

"The organizations winning in 2026 aren't just choosing cheaper models - they're implementing systematic optimization across their entire AI stack." - AI Pricing Master

Achieving long-term savings requires ongoing attention to cost management. Over time, prompt templates can drift, and scaling AI requests without proper cost controls can lead to unexpected spending spikes. To avoid this, consider automating logging to capture the usage object from every API response, setting budget alerts at 50%, 80%, and 100% thresholds, and conducting monthly audits to identify inefficient prompts or unused licenses.

To select the best model tier, start by evaluating the complexity of your tasks and their associated costs. For straightforward tasks, such as basic classification or simple Q&A, smaller and less expensive models are often sufficient. On the other hand, reserve higher-tier models for demanding activities like coding or conducting security reviews. Categorize tasks based on complexity and direct simpler ones to cost-effective models, while leveraging advanced models only for high-priority or intricate work. This approach ensures you manage expenses efficiently without compromising on performance.

Self-hosting an open-source model becomes a smart financial choice when your usage reaches a level where infrastructure and maintenance costs are less than the recurring API fees. For premium models, this tipping point generally falls around 5–10 million tokens per month. At higher usage scales, this approach can translate into savings of millions of dollars each year for organizations.

To keep token costs under control, pay close attention to system prompts, conversation history, tool definitions, and reasoning tokens. Keeping track of these factors ensures better oversight of usage and helps prevent surprise charges.