Generative AI is reshaping industries, driving enterprise spending from $1.7 billion in 2023 to $37 billion in 2025. Startups now power 76% of enterprise AI use cases, up from 53% in 2024. The focus has shifted from simple chatbots to Agentic AI - systems that plan, execute, and self-correct autonomously. These solutions address three critical business needs: cost control, system compatibility, and advanced AI capabilities.

Here’s a quick look at the top startups leading this transformation:

These startups deliver measurable results like 10× productivity gains, 90% cost reductions, and faster deployment times. The shift toward Agentic AI highlights the growing need for systems that act independently, integrate seamlessly, and deliver clear ROI for businesses.

Top 10 Generative AI Startups Comparison: Features, Costs, and Key Metrics

Prompts.ai brings together 35+ top-tier large language models - such as GPT-5, Claude, LLaMA, Gemini, Grok-4, Flux Pro, and Kling - into a single, streamlined interface. This eliminates the hassle of juggling multiple subscriptions, dashboards, and administrative tasks. Teams can effortlessly switch between models, compare outputs side-by-side, and choose the most suitable option for each task. This consolidated access simplifies operations while laying the groundwork for better financial and operational management.

The platform features real-time FinOps tools to monitor token usage. Businesses can set spending caps, allocate budgets across departments, and directly link AI expenses to measurable outcomes. By offering pay-as-you-go TOKN credits instead of fixed monthly fees, Prompts.ai ensures costs align with actual usage. This approach can reduce AI software expenses by up to 98% compared to managing multiple standalone subscriptions. This level of transparency empowers finance teams to make data-driven decisions and avoid budget overruns.
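The idea of per-department spending caps can be sketched in a few lines. This is a minimal illustration, not Prompts.ai's actual implementation: the `TokenBudget` class, the department names, the dollar caps, and the per-million-token price are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class TokenBudget:
    """Tracks token spend per department against a fixed cap (hypothetical names)."""
    caps_usd: dict
    spent_usd: dict = field(default_factory=dict)

    def record(self, department: str, tokens: int, usd_per_million: float) -> float:
        """Add the cost of one request; refuse it if the cap would be exceeded."""
        cost = tokens / 1_000_000 * usd_per_million
        new_total = self.spent_usd.get(department, 0.0) + cost
        cap = self.caps_usd[department]
        if new_total > cap:
            raise RuntimeError(f"{department} would exceed its cap of ${cap:.2f}")
        self.spent_usd[department] = new_total
        return new_total


# Illustrative caps and price only; real values depend on the models in use.
budget = TokenBudget(caps_usd={"support": 50.0, "sales": 20.0})
budget.record("support", tokens=2_000_000, usd_per_million=3.0)
```

Rejecting the request before it is recorded keeps the running total from ever drifting above the cap, which is the property a finance team would likely care about.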

Prompts.ai is used by Fortune 500 companies, creative agencies, and research labs to streamline AI workflows across areas like sales, customer support, and software development. The platform also provides a Prompt Engineer Certification program, enabling internal experts to design reusable "Time Saver" prompt templates. This shifts AI usage from one-off experiments to consistent, repeatable processes, boosting productivity without requiring every team member to master AI intricacies.

Prompts.ai addresses prompt injection risks with input validation, delimiter separation, and automated JSON checks. It ensures structured response formats and uses automated validation to confirm AI outputs meet regulatory standards. For decisions with compliance implications, human-in-the-loop (HITL) workflows require manual oversight before final approval. These measures create detailed audit trails for every AI interaction, helping businesses demonstrate compliance during regulatory reviews while maintaining the efficiency needed for real-world operations.
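As a rough sketch of how delimiter separation and automated JSON checks can fit together, the example below wraps untrusted text in reserved delimiters and validates a model's reply before it is used. The delimiter strings, function names, and required keys are assumptions made for illustration; the article does not describe the platform's actual mechanism.

```python
import json

# Hypothetical reserved delimiters that mark where untrusted input begins and ends.
DELIM_OPEN, DELIM_CLOSE = "<user_input>", "</user_input>"


def build_prompt(instructions: str, user_text: str) -> str:
    """Keep instructions and user text in clearly separated regions."""
    # Input validation: reject text that tries to forge the delimiters.
    if DELIM_OPEN in user_text or DELIM_CLOSE in user_text:
        raise ValueError("user text contains a reserved delimiter")
    return f"{instructions}\n{DELIM_OPEN}\n{user_text}\n{DELIM_CLOSE}"


def parse_response(raw: str, required_keys: set) -> dict:
    """Accept a reply only if it is a JSON object with every required key."""
    data = json.loads(raw)  # malformed JSON raises json.JSONDecodeError (a ValueError)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = required_keys - set(data)
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    return data
```

Replies that fail `parse_response` could be retried or routed to a human reviewer, which is where a HITL step would naturally attach.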

Anthropic has positioned Claude as more than just an AI assistant - it's a fully integrated copilot and intelligence layer for businesses. In February 2026, the company introduced Claude Cowork and an enterprise plugin system, enabling Claude to work inside tools like Microsoft Excel, PowerPoint, Slack, and Google Workspace. This removes the inefficiency of constantly transferring data between applications.

The results speak for themselves. By October 2025, Novo Nordisk's NovoScribe, built on Claude through Amazon Bedrock, had cut clinical report writing time from over 10 weeks to just 10 minutes, a reduction of more than 99%. Review cycles were also cut in half. Similarly, Palo Alto Networks used Claude via Google Cloud Vertex AI to support 2,500 developers, boosting feature development speed by 20–30% and reducing onboarding time for new developers from months to weeks. At IG Group, Claude saved 70 hours weekly in analytics and marketing workflows, achieving a full return on investment within just three months. These examples highlight how Anthropic's AI solutions deliver measurable efficiency gains across industries.

Anthropic's growth has been rapid. By early 2026, the company reached a $14 billion run-rate with triple-digit annual growth, serving eight of the Fortune 10 companies. Its pricing is competitive: $3 per million input tokens and $15 per million output tokens for Claude 4 Sonnet, and $15/$75 for Claude 4 Opus. Additionally, prompt caching can reduce costs by up to 90%.
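To make the per-token prices concrete, here is a small cost estimate using the figures quoted above. It is a sketch under stated assumptions: the 90% figure is modeled as a discount on the cached share of input tokens only, any surcharge for writing to the cache is ignored, and the function and parameter names are hypothetical.

```python
# USD per million tokens, taken from the prices quoted in the text.
SONNET = {"input": 3.00, "output": 15.00}
OPUS = {"input": 15.00, "output": 75.00}


def request_cost(prices, input_tokens, output_tokens,
                 cached_fraction=0.0, cache_discount=0.90):
    """Estimate the cost of one request in USD.

    Assumption: cached input tokens are billed at (1 - cache_discount) of the
    normal input price, and output tokens are never discounted.
    """
    cached = input_tokens * cached_fraction
    fresh = input_tokens - cached
    billable_input = fresh + cached * (1 - cache_discount)
    input_cost = billable_input / 1_000_000 * prices["input"]
    output_cost = output_tokens / 1_000_000 * prices["output"]
    return input_cost + output_cost


no_cache = request_cost(SONNET, 1_000_000, 200_000)
with_cache = request_cost(SONNET, 1_000_000, 200_000, cached_fraction=0.8)
```

Under these assumptions, the same workload costs about $6.00 without caching and about $3.84 when 80% of the input is served from cache, because output tokens still dominate the bill.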

What sets Anthropic apart is its multi-cloud availability across AWS (Bedrock), Google Cloud (Vertex AI), and Microsoft Azure (Foundry). This flexibility allows businesses to optimize workloads across AWS Trainium, Google TPUs, and NVIDIA GPUs without being tied to a single vendor. This hardware-agnostic approach helps enterprises scale efficiently while keeping costs in check.

Anthropic prioritizes safety and governance through its Constitutional AI framework, which trains models based on a fixed safety constitution. This approach makes Claude more cautious and reliable, with a prompt injection attack success rate of just 4.7% - far lower than many competitors. As a Public Benefit Corporation, Anthropic operates under the oversight of the Long-Term Benefit Trust, ensuring safety remains a core focus over commercial interests.

For enterprise customers, Anthropic offers a zero-retention policy, guaranteeing that customer data is never used to train foundational models. The Claude Enterprise plan includes essential features like Single Sign-On (SSO), SCIM for identity management, role-based access controls, and centralized usage analytics. Administrators can oversee private plugin marketplaces and monitor tool usage across teams. Claude 4 models meet AI Safety Level 3 (ASL-3) standards and are HIPAA-compliant, making them a strong choice for healthcare organizations. These robust features underscore Anthropic's commitment to secure and enterprise-ready generative AI solutions.

Mistral AI provides a streamlined ecosystem for deploying generative AI across various infrastructures. At the heart of this system is the Mistral AI Studio, a centralized hub offering developers instant API access to its entire model lineup with transparent pricing. The platform supports deployment on Azure Foundry, Amazon Bedrock, Google Cloud Model Garden, and IBM WatsonX. For organizations with stringent data requirements, it also enables self-hosted, on-premises, and VPC environments.

Through a partnership with NVIDIA, Mistral AI ensures optimized performance across data centers, PCs, and edge devices. Using NVIDIA’s TensorRT-LLM and NIM microservices, the platform achieves impressive results. For example, NVIDIA GB200 NVL72 systems process over 5,000,000 tokens per second per megawatt when running Mistral Large 3. The platform also includes specialized models like Codestral for coding, Voxtral for speech-to-text, and Document AI for OCR, simplifying workflows across various enterprise needs.

Mistral's sparse Mixture-of-Experts (MoE) architecture delivers advanced AI capabilities while keeping expenses under control. Mistral Large 3 has 675 billion total parameters but activates only 41 billion at a time, which significantly reduces operational costs. Token pricing is set at $0.50 per million input tokens and $1.50 per million output tokens on Azure Global Standard. For cost-conscious enterprises, Mistral Medium 3 offers robust performance at roughly one-eighth the cost of earlier models.
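The reason a sparse MoE model is cheaper to run is that only a few experts execute for each token. The toy example below shows generic top-k gating with scalar "experts". It is not Mistral's routing code, whose details the article does not describe, and the expert functions and gate scores are made up for illustration.

```python
import math


def top_k_route(gate_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    ranked = sorted(range(len(gate_logits)),
                    key=lambda i: gate_logits[i], reverse=True)[:k]
    # Softmax over the selected experts only, so their weights sum to 1.
    exps = [math.exp(gate_logits[i]) for i in ranked]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(ranked, exps)]


def moe_forward(x, experts, gate_logits, k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    return sum(w * experts[i](x) for i, w in top_k_route(gate_logits, k))


# Four toy experts; with k=2 only two of them run for any given input.
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3, lambda x: x ** 2]
output = moe_forward(3, experts, gate_logits=[0.1, 2.0, -1.0, 2.0], k=2)

# Share of Mistral Large 3's parameters that is active, from the figures in the text.
active_fraction = 41 / 675
```

With the figures quoted for Mistral Large 3, `active_fraction` comes to roughly 6%, which is why compute per token is far below what the total parameter count suggests.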

In July 2025, Capgemini integrated the Mistral coding stack across its global delivery teams, particularly in sectors like defense, telecom, and energy. This initiative, led by Alban Alev, VP Head of Solutioning at Capgemini France, reduced development, review, and testing time by 50%, all while maintaining strict code ownership and compliance standards. The Mistral 3B variant, capable of processing up to 385 tokens per second on an NVIDIA RTX 5090 GPU, is particularly suited for edge deployments where cost efficiency and low latency are priorities.

Mistral AI's advancements are transforming operations across industries. CMA CGM, for instance, introduced "MAIA", an AI assistant powered by Mistral, to over 155,000 employees in 160 countries, enhancing productivity in global maritime operations. Similarly, AXA equips more than 140,000 employees with secure AI tools for text analysis, while Mirakl uses Mistral’s technology to automate the management of over 10 million products monthly.

"Leveraging Mistral's Codestral has been a game changer in the adoption of private coding assistant for our client projects in regulated industries. We have evolved from basic support for some development activities to systematic value for our development teams."

- Alban Alev, VP Head of Solutioning, Capgemini France

In another example, Abanca, a leading Spanish bank, deployed Mistral models in a fully self-hosted setup in July 2025. This approach ensured compliance with European banking regulations on data residency and network isolation. Separately, Mistral's Devstral Medium achieved a 61.6% score on the SWE-Bench Verified benchmark, outperforming models like Claude 3.5 and GPT-4.1-mini in handling complex coding tasks.

Mistral AI provides an AI Governance Hub for managing technical documentation and supports deployment in sovereign cloud environments to protect data privacy. This flexibility allows industries with strict regulations to maintain complete control while accessing advanced AI features. Organizations can export model weights for on-premises use while utilizing cloud APIs for additional capacity or experimentation, ensuring sensitive data remains secure.

The platform also offers custom model training and distillation services, enabling businesses to fine-tune models with proprietary datasets without sacrificing performance. This adaptability, combined with support for diverse deployment environments, makes Mistral AI especially appealing to industries like financial services and defense, where both cutting-edge AI and strict compliance are critical. By offering this level of control and customization, Mistral AI positions itself as a key player in delivering practical, enterprise-ready generative AI solutions.

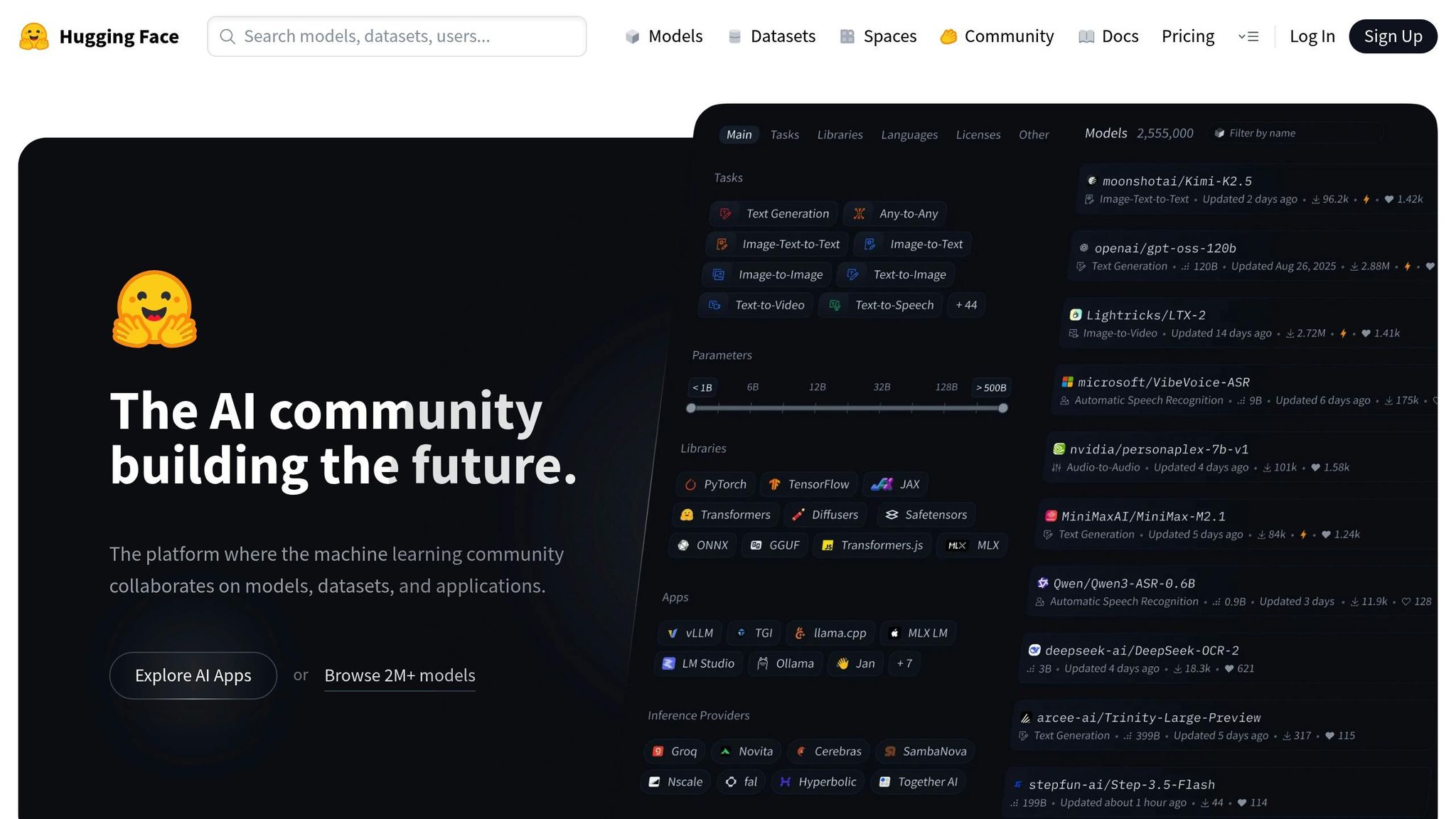

Hugging Face stands out as a central hub for machine learning, hosting an impressive collection of 2 million models, 500,000 datasets, and 1 million Spaces. Its Transformers library offers a standardized Python API compatible with over 40 model architectures, including BERT, GPT, Llama, and T5. This streamlined setup allows developers to switch between models with minimal effort, supporting frameworks like PyTorch, TensorFlow, and JAX.

The platform simplifies complex AI tasks through its Pipeline system, which reduces tasks like sentiment analysis or image recognition to just a few lines of code. Additionally, the Unified Inference API provides access to more than 45,000 models through a single, cost-free interface. Often referred to as the "GitHub of Machine Learning", Hugging Face has attracted over 50,000 organizations, including major players like Meta, Google, and Microsoft. The Transformers library alone processes around 25 million downloads each month, making it a cornerstone for scalable and efficient AI development.

Hugging Face prioritizes efficiency by focusing on task-specific and distilled models instead of relying on larger, general-purpose systems. These models use 20–30× less energy while maintaining accuracy. For instance, the full DeepSeek R1 model requires at least 8 GPUs, but its distilled counterparts are up to 30× smaller and can run on a single GPU.

In October 2024, Henri Jouhaud, CTO at Polyconseil, shared that Hugging Face Generative AI Services (HUGS) cut deployment times for locally ready models from one week to under an hour. Similarly, Research Engineer Ghislain Putois from Orange successfully deployed Gemma 2 on Google Cloud Platform using an L4 GPU, with everything functioning "out of the box" without manual adjustments. Hugging Face's efficiency and scalability have contributed to its $130.1 million revenue in 2024 and a valuation of $4.5 billion, with backing from tech giants like Google, Amazon, Nvidia, and Microsoft.

Hugging Face is widely adopted across industries to address challenges like compliance, personalization, and content generation. Financial firms rely on the platform for compliance research, healthcare providers use it to build tools for clinical note assistance, and retailers create personalized search systems. The platform also supports advanced use cases such as:

Its Parameter-Efficient Fine-Tuning (PEFT) feature enables companies to tailor large models to specific needs with minimal computing resources. Tools like Inference Endpoints, Text Generation Inference (TGI), and vLLM provide scalable, high-throughput model serving on private infrastructure or major cloud platforms. Hugging Face pricing starts at $9/month for Pro plans, $20 per user per month for Team plans, and $20–$50 per user per month for Enterprise plans, with compute usage starting at $0.60 per hour.

Hugging Face ensures enterprise-grade security with features like RBAC, SSO, and audit logs to meet regulatory requirements. It supports managed cloud hosting, on-premises deployment for stricter data control, and hybrid solutions. Organizations can enforce governance by setting up approved model lists and license check workflows to avoid deploying unvetted models. Additionally, SOC 2 compliance ensures secure internal development practices, solidifying Hugging Face as a trusted platform for secure AI workflows.

Scale AI's GenAI Platform simplifies the generative AI landscape by combining data labeling, model fine-tuning, and evaluation into a single, streamlined workflow. This eliminates the need for juggling multiple tools. Enterprises can tap into various models through a unified API, all while maintaining strict security standards. This approach makes it easier to deploy AI solutions across enterprise-level operations.

At the core of the platform is the Data Engine, which provides training data tailored for critical sectors like government, defense, and healthcare. With a focus on Reliable AI Systems, this ensures that enterprises can develop applications where precision and security are paramount. Additionally, the Scale Eval tool enables teams to assess model performance against safety and reliability criteria. This allows organizations to thoroughly validate third-party models before transitioning them from prototype to production.

Scale AI is designed for enterprises that demand rigorous testing and validation. By combining automated evaluation with Human-in-the-Loop (HITL) oversight, the platform ensures that AI outputs meet human values and safety standards. Its Red Teaming services dive deep into identifying vulnerabilities, biases, and potential harms, catching edge cases that automated testing might overlook. For enterprises scaling their generative AI solutions, built-in cost management tools help track and optimize spending effectively.

Scale AI goes beyond development by offering a robust compliance framework to meet enterprise needs. The platform holds certifications like SOC 2 Type II, HIPAA, GDPR, and ISO 27001, ensuring adherence to strict data protection standards. Encryption safeguards data both at rest and in transit, while Role-Based Access Control (RBAC) manages user permissions. Audit trails provide transparency by tracking model versions and data lineage.

"Scale GenAI Platform provides the enterprise-grade security and compliance required to move generative AI from prototype to production." - Scale AI

The platform's governance tools allow organizations to vet models thoroughly and maintain version control, ensuring only approved models are deployed in production. These features make Scale AI especially suitable for industries with strict regulatory requirements, where detailed compliance records and strong security measures are non-negotiable.

ElevenLabs represents the growing trend of integrated AI platforms designed to deliver both high performance and cost efficiency. Initially focused on text-to-speech, the platform has expanded into a complete audio infrastructure. It integrates three main components: ElevenAgents for conversational AI, ElevenCreative for media production, and ElevenAPI for developer tools. This all-in-one system allows businesses to manage tasks ranging from real-time customer interactions to creating professional-grade content seamlessly.

The platform includes specialized models tailored to specific needs. Eleven v3 excels in high-stakes narration across 70+ languages, reducing errors by 68% in complex text scenarios, such as chemical formulas or phone numbers. For real-time applications, Eleven Flash v2.5 offers ultra-low latency at just 75ms, ensuring natural, uninterrupted conversations. A standout feature, Audio Tags, enables creators to fine-tune emotional delivery with commands like [whispers] or [sighs], adding cinematic quality to audio projects. This flexibility ensures precise handling of diverse audio requirements.

ElevenLabs has built its platform to scale efficiently while keeping costs in check. A notable feature, Silence Billing, charges only 5% of the rate during quiet periods, offering savings for low-activity usage. Additionally, Flash and Turbo models cost 50% less per character compared to flagship models, giving businesses the ability to balance budget and quality.
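The effect of Silence Billing on a single call can be estimated with simple arithmetic. In the sketch below, only the 5% factor comes from the text; billing by the minute, the rate, and the function name are assumptions for illustration.

```python
# From the text: quiet periods are billed at 5% of the normal rate.
SILENCE_RATE_FACTOR = 0.05


def call_cost(active_minutes, silent_minutes, usd_per_minute):
    """Cost of a call when silence is billed at a reduced rate.

    Assumption: usage is metered per minute at a flat hypothetical rate.
    """
    billable_minutes = active_minutes + silent_minutes * SILENCE_RATE_FACTOR
    return billable_minutes * usd_per_minute


# A 10-minute call with 4 minutes of silence, at a made-up $0.10 per minute.
discounted = call_cost(active_minutes=6, silent_minutes=4, usd_per_minute=0.10)
undiscounted = call_cost(active_minutes=10, silent_minutes=0, usd_per_minute=0.10)
```

In this example the call is billed for 6.2 minutes instead of 10, so the saving grows with the share of the call spent in silence.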

In January 2026, CARS24 adopted ElevenLabs' voice AI solution, achieving a 50% reduction in resolution times and enhancing customer satisfaction. Jayesh Gupta, Head of AI and Innovation at CARS24, remarked:

"With ElevenLabs as our technology partner, we're creating a new standard where every interaction builds confidence. Our voice AI solution is cutting resolution times in half and boosting customer satisfaction."

Other companies have reported similar success. Klarna reduced customer inquiry resolution times by a factor of 10, while Gaia cut production time by 25% using ElevenCreative tools. These results underscore the platform’s ability to drive efficiency while maintaining high-quality outcomes, setting the stage for its robust security and compliance measures.

ElevenLabs employs a comprehensive three-tier guardrail system to ensure safe and reliable operations. This system validates prompts, detects injection attempts, and screens responses for inappropriate content. The platform adheres to SOC 2, HIPAA, and GDPR standards, with regional data residency options available in the US, EU, and India. For industries requiring extra precautions, Zero Retention Mode ensures no audio data is stored after processing.

In 2026, ElevenAgents became the first AI voice agent platform to secure insurance coverage through the AIUC-1 certification, which involves extensive testing to address security, data privacy, and hallucination risks. The platform has been used to create over 2 million agents, facilitating more than 33 million conversations. Clients have reported up to a 66% reduction in cost per call, demonstrating the platform’s ability to combine cost savings with stringent governance protocols.

Synthesia is setting a new standard in video production by streamlining the entire process into a single, browser-based platform. This approach eliminates the need for external agencies, studios, or multiple tools, making video creation more accessible and efficient.

Synthesia brings all aspects of video production into one unified system. Its AI Playground integrates leading generative models like Sora 2, Google Veo 3.1, and FLUX.2, enabling users to create, localize, manage, and publish high-quality video content without leaving the platform.

The platform supports over 140 languages with advanced lip-sync technology and offers a library of more than 230 AI avatars. The Express-2 avatars go beyond simple "talking head" formats, incorporating natural hand gestures and professional body language for a more dynamic presentation. For voice cloning, Express-Voice technology preserves regional accents and dialects across 17 distinct voice profiles, ensuring speakers retain their unique characteristics instead of defaulting to a neutralized voice.

Synthesia’s capabilities translate into a range of practical business applications. It’s particularly effective in areas like Learning and Development (L&D), Sales Enablement, and Internal Communications. Companies are using it to replace lengthy training manuals with engaging videos, craft personalized sales pitches in multiple languages, and deliver updates through AI avatars. The platform’s one-click translation and lip-syncing features also eliminate the need for external dubbing services, simplifying global content distribution.

Real-world examples highlight the platform's impact:

These examples demonstrate how businesses can save time and money while scaling their video production efforts.

Synthesia’s growth reflects its widespread adoption, with a valuation of $4 billion following a $200 million investment in late 2025. By 2025, the platform surpassed $100 million in Annual Recurring Revenue (ARR) and is used by over 60,000 businesses, including 90% of the Fortune 100. Users report efficiency gains of 70–90% and a 62% reduction in production time.

To help organizations manage budgets effectively, the platform includes credit allocation tools with automated alerts at 75%, 90%, and 100% usage.
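Threshold alerts of this kind are straightforward to reason about in code. The sketch below reports which of the 75%, 90%, and 100% marks a usage update has just crossed. The function name and credit figures are hypothetical, and the platform's real alerting logic is not described in the article.

```python
# Alert points taken from the text, expressed as fractions of the allocation.
ALERT_THRESHOLDS = (0.75, 0.90, 1.00)


def crossed_alerts(used_before, used_after, allocation):
    """Return the thresholds crossed as usage moves from used_before to used_after."""
    return [
        t for t in ALERT_THRESHOLDS
        if used_before < t * allocation <= used_after
    ]


# A team with 1,000 credits jumps from 700 to 920 credits used in one update.
alerts = crossed_alerts(used_before=700, used_after=920, allocation=1000)
```

Comparing the usage before and after each update means every alert fires once, at the moment its threshold is crossed, rather than on every subsequent request.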

"What used to take 4 hours now takes 30 minutes." - Rosalie Cutugno, Global Sales Enablement Lead

"100 hours of translation done in 10 minutes!" - Geoffrey Wright, Global Solutions Owner

These time savings directly translate into cost reductions, with companies reporting cuts of 80–90% in video production expenses.

Synthesia prioritizes governance and security, offering features like admin controls to disable publishing, restrict unapproved templates, and enforce brand standards in real time. The platform uses a dual-layer moderation system, combining AI and human review, to block inappropriate content. Every AI avatar is created with the actor's explicit consent, ensuring ethical use of generative technology.

Synthesia is certified for SOC 2 Type II, GDPR, and ISO 42001 compliance and supports SAML/SSO and role-based access controls. In late 2024, the platform’s moderation system blocked 100% of unauthorized avatar creation attempts and successfully stopped 74 out of 75 harmful content attempts during NIST testing. Enterprise clients can also secure sensitive content with SSO requirements and password-protected video pages, ensuring robust security for all users.

Jasper is a comprehensive AI platform tailored for marketing teams, serving over 900 enterprise clients, including 20% of the Fortune 500. It streamlines content creation into an automated, brand-consistent process.

Jasper's LLM-agnostic framework integrates leading models like Gemini, GPT, and Claude while allowing for custom LLMs. Its Jasper IQ hub centralizes brand knowledge, style guidelines, and audience insights, ensuring consistent, on-brand AI-generated content. The platform also features Jasper Studio, a no-code tool that empowers marketing teams to design custom AI apps and workflows without needing technical expertise or prompt engineering. This approach puts control directly in the hands of marketers, eliminating the need for costly technical resources while enhancing productivity.

Jasper addresses three key marketing challenges:

The platform's impact is evident in real-world scenarios. In 2025, iHeartMedia reduced campaign development from weeks to a single day for a top podcast launch. Adidas created 7,500 product descriptions in just 24 hours. Bloomreach increased blog output by 113%, leading to a 40% traffic boost. Meanwhile, Webster First Federal Credit Union achieved a 9x increase in organic traffic.

"It's not just about efficiency gains, it's about augmenting human creativity, scaling expertise, and unlocking new ways to engage customers and drive business outcomes." - Peter So, VP of Digital Innovation, Cushman & Wakefield

Jasper's Content Pipelines automate the entire content lifecycle, seamlessly connecting data, strategy, and distribution. This allows teams to scale their output without adding manual effort. For high-volume tasks, Jasper Grid offers a spreadsheet-like interface to manage content execution systematically.

The platform's success stories highlight its efficiency. Cushman & Wakefield saves over 10,000 hours annually under Peter So's leadership. WalkMe has reduced content creation time by more than 3,000 hours, while Adidas has automated 60% of its SEO tasks.

Jasper's model-agnostic design ensures enterprises can test and integrate new models within 24 hours, optimizing for speed, performance, and cost without being tied to a single provider. This flexibility allows marketing teams to focus on delivering results rather than managing technical complexities.

Jasper includes a Trust Foundation layer that prioritizes security, governance, and compliance. The platform is SOC 2 and GDPR compliant, featuring enterprise-grade encryption and secure deployment options. Client data remains proprietary and is never used to train third-party AI models. Brand IQ ensures adherence to brand voice, style, and visual guidelines.

Administrators benefit from features like AI audit logs, role-based permissions, and secure group settings. The platform supports Single Sign-On (SSO) for authentication and offers private spaces to manage data sources and publishing permissions. Teams can assign specific roles to internal members and external collaborators, maintaining control over data access and content distribution. These features cement Jasper's position as a leader in AI-powered marketing workflows.

Adept AI Labs takes generative AI beyond text creation, introducing active, task-focused Agentic AI. Established by AI innovators like David Luan and Ashish Vaswani - key contributors to groundbreaking AI architectures - the company has secured $415 million in funding. Adept’s platform operates within software interfaces, acting as a universal assistant in tools such as Airtable, Photoshop, Tableau, and Twilio. This shift enables AI to seamlessly integrate into workflows, transforming how tasks are executed.

Adept AI Labs has found practical applications across various industries:

The platform achieves notable performance benchmarks. In internal tests, its planning system scored 88, significantly outpacing GPT-4’s score of 59. The Adept Locate tool, used to identify buttons and links on webpages, earned a score of 93. For analyzing PDFs, charts, and tables, its Web VQA (Visual Question Answering) tool scored 88.2.

"True general intelligence requires models that can not only read and write, but act in a way that is helpful to users." - David Luan, CEO of Adept

Adept AI Labs aligns with the growing demand for enterprise-ready AI by simplifying workflow deployment. Businesses can establish new workflows using natural language instructions in minutes, cutting down deployment time and engineering expenses. Its design ensures workflows remain functional despite software updates, eliminating maintenance costs. Using a proprietary language, the platform interacts seamlessly with any website or application, allowing consistent scaling across departments like HR, Finance, and Operations.

Adept emphasizes human oversight, enabling managers to review and adjust an agent’s actions before execution. Its proprietary Adept Workflow Language (AWL) ensures agents follow precise instructions, keeping automation controlled and predictable. To address security and compliance needs, the platform includes a dedicated Trust Center and robust enterprise-grade protections, making it suitable for industries with stringent regulatory requirements. Adept AI Labs exemplifies the evolution toward integrated, scalable AI tailored for enterprise environments.

Vectara provides an Enterprise RAG platform designed to streamline AI agent deployment and management. It tackles the issue of "RAG sprawl", where organizations risk creating fragmented and unregulated AI systems. Instead of building isolated solutions, Vectara offers a unified platform that supports a wide range of applications, from customer service bots to advanced document analysis workflows. This foundation allows businesses to address varied challenges efficiently.

Vectara's platform serves a variety of industries, offering solutions tailored to specific needs:

One notable success story is Conversica's use of Vectara in its Revenue Digital Assistant™ platform. The results included an 18-point increase in precision, a 30-point boost in recall, and a 25-point rise in F1 scores. Vectara supports over 100 languages and handles file formats such as PDF, JSON, XML, and Office documents, so it adapts readily to global operations.
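For readers less familiar with these metrics, F1 is the harmonic mean of precision and recall, so it only rises substantially when both improve. The snippet below computes it. The before and after values are hypothetical and are not Conversica's actual baselines, which the article does not give.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall; defined as 0 when both are 0."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical baseline and improved values, chosen only to illustrate the formula.
f1_before = f1_score(precision=0.70, recall=0.55)
f1_after = f1_score(precision=0.88, recall=0.85)
```

Because the harmonic mean is pulled toward the weaker of the two inputs, a large recall gain paired with a smaller precision gain still produces a sizeable F1 improvement.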

Vectara's architecture is designed for scalability and cost efficiency, eliminating the heavy engineering demands of traditional AI systems. Businesses can scale AI applications 3- to 300-fold within a year, all without requiring a dedicated machine learning team. The platform's Bring-Your-Own-Model (BYOM) feature ensures flexibility, allowing companies to switch between models like OpenAI, Google, or Vectara's own Mockingbird model based on cost and performance needs.
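Switching models based on cost and performance usually comes down to a simple selection rule. The sketch below picks the cheapest model that clears a quality bar. The catalog entries, prices, and quality scores are placeholders, not real figures for any provider, and this is not Vectara's BYOM interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    usd_per_million_tokens: float
    quality_score: float  # 0 to 1, measured on your own evaluation set


def pick_model(options, min_quality):
    """Return the cheapest option whose quality meets the minimum bar."""
    eligible = [m for m in options if m.quality_score >= min_quality]
    if not eligible:
        raise ValueError("no model meets the quality bar")
    return min(eligible, key=lambda m: m.usd_per_million_tokens)


# Placeholder catalog; names and numbers are invented for illustration.
catalog = [
    ModelOption("model-a", 10.0, 0.92),
    ModelOption("model-b", 2.0, 0.85),
    ModelOption("model-c", 0.5, 0.70),
]
choice = pick_model(catalog, min_quality=0.80)
```

Keeping the rule this explicit makes it easy to re-run the selection whenever prices change or a new model is added to the catalog.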

"Vectara's Enterprise RAG platform not only gives us the intelligence and efficiency to streamline title creation, but critically, it protects us from the risk of RAG sprawl by delivering a unified, governed, and secure AI foundation." - Jeff Hummel, SVP of Engineering, Anywhere Real Estate

The centralized system simplifies management, enabling a single administrator to oversee tasks that would typically require an entire AI engineering team. Benefits include a 60% reduction in product defects through automated troubleshooting and a 30% decrease in fraudulent claims via improved document management.

Vectara places a strong emphasis on security and compliance in its AI systems. Its Guardian Agents detect and correct hallucinations in real time using the HHEM (Hughes Hallucination Evaluation Model). Each response is paired with a Factual Consistency Score to ensure quality. Deployment options include SaaS, VPC, or on-premises (air-gapped), meeting stringent requirements like HIPAA and SOC 2 compliance for industries such as healthcare and finance.
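One way a consistency score can be put to work is as a gate in front of the user. The sketch below assumes the score is a number between 0 and 1 and uses an arbitrary threshold of 0.5. Both are assumptions, and the function is a hypothetical illustration rather than how Guardian Agents operate.

```python
def gate_response(answer, consistency_score, threshold=0.5):
    """Deliver an answer only if its consistency score clears the threshold.

    Assumptions: the score lies in [0, 1] and higher means better grounded.
    """
    if not 0.0 <= consistency_score <= 1.0:
        raise ValueError("score must be in [0, 1]")
    action = "deliver" if consistency_score >= threshold else "review"
    return {"answer": answer, "score": consistency_score, "action": action}


grounded = gate_response("The policy covers water damage.", 0.91)
doubtful = gate_response("The policy covers everything.", 0.22)
```

Answers routed to `review` could be regenerated, corrected, or escalated to a person, and logging the returned record for every response supports the audit trail the platform emphasizes.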

"Vectara's new Mockingbird took HuckAI from being an overly polite librarian to giving answers I would expect from a senior coworker." - Sunir Shah, Founder, HuckAI

The platform also provides detailed observability across AI agents, ensuring workflows are auditable and compliant. This transparency moves away from opaque "black-box" models, giving businesses clear insights into decision-making processes while adhering to both internal policies and external regulations.

The generative AI landscape is rapidly advancing, shifting from basic text generation to agentic workflows capable of handling complex tasks across various systems. The startups mentioned here showcase diverse strategies to tackle this evolution. Anthropic emphasizes safety and constitutional AI, while Hugging Face champions an open-source ecosystem for better interoperability. Meanwhile, ElevenLabs pushes the boundaries of multimodal audio, Scale AI focuses on improving data quality, and Vectara prioritizes seamless enterprise integration.

When evaluating these solutions, look for platforms that emphasize interoperability rather than isolated features. Solutions with robust APIs or open-source frameworks can integrate effortlessly into your existing tech stack. The move toward agentic autonomy signals a departure from simple reactive chatbots to systems that can plan, execute, and self-correct multi-step workflows. For instance, Adept AI Labs exemplifies this shift by navigating user interfaces and completing tasks rather than merely responding to queries.

Cost optimization remains as critical as performance in this space. Open-weight models offer a way to reduce ongoing API expenses while maintaining data security through private hosting. The generative AI market surged from $191 million in 2022 to $25.6 billion in 2024, and 75% of the value generated by these technologies is concentrated in four key areas: customer operations, marketing and sales, software engineering, and R&D. To maximize impact, focus your AI implementation on these high-value areas rather than attempting a full-scale rollout across your entire organization.

"Generative AI could add the equivalent of $2.6 trillion to $4.4 trillion annually across the 63 use cases we analyzed - by comparison, the United Kingdom's entire GDP in 2021 was $3.1 trillion." - McKinsey

Looking ahead to 2026, the defining edge will be the "Context Moat" - a combination of proprietary data, historical preferences, and industry-specific workflows that enhance AI performance. Startups specializing in vertical markets like legal, healthcare, and finance are achieving higher accuracy by embedding domain expertise directly into their systems. When choosing a solution, ensure it offers transparent audit trails and interpretable AI, enabling human oversight to trace decision-making for compliance and governance.

Agentic AI refers to highly advanced systems designed to function autonomously. These systems can sense their environment, reason through complex problems, make decisions, and take actions independently to meet specific business objectives. Unlike traditional AI, which relies on predefined rules and human input, Agentic AI takes a proactive approach, optimizing workflows and making decisions without direct intervention. This capability allows businesses to transition from reactive strategies to proactive ones, driving advancements in areas such as customer operations, supply chain management, and enterprise intelligence.

To choose the most suitable large language model (LLM), focus on the specific strengths and abilities of each option. Some models perform exceptionally well in areas like coding, logical reasoning, or handling multimodal tasks, while others are optimized for privacy or cost-efficiency. Important considerations include the size of the context window, response speed, pricing, and available deployment methods. Align the model’s features with your task demands, budget, and existing infrastructure to achieve optimal outcomes.

Proving the return on investment (ROI) from generative AI means showcasing clear, measurable outcomes like cutting costs, boosting productivity, and driving revenue growth. For example, efficiency gains can be tracked by measuring time saved - small business owners might reclaim up to 13 hours each week. Startups frequently report lowering annual costs by 20% or more while seeing revenue grow by around 5%. To make a strong case, establish specific metrics tied to your business objectives and leverage analytics tools to monitor progress and validate the results.