AI workflows are becoming essential, but poor UX in prompt engineering tools slows teams down. Over 65% of enterprises now use generative AI, yet 27% still rely on manual reviews due to inefficiencies in current tools. The core problem? Complex interfaces, lack of guidance for non-technical users, and limited transparency in how AI interprets prompts.

Effective UX addresses these problems through cleaner interfaces, automated workflows, and real-time feedback.

For enterprises, good UX means more than productivity - it ensures accuracy, governance, and cost control. Tools with shared workspaces, version control, and audit logs make collaboration easier while maintaining compliance. By prioritizing user-friendly design, companies can reduce errors, cut costs, and make AI accessible to everyone, not just experts.

Enterprise AI Adoption Statistics and UX Impact Metrics

The tools that either frustrate or empower teams often hinge on their UX design. A well-thought-out user experience can eliminate friction and boost productivity, making prompt engineering more accessible and efficient for all users. Below are some standout features that demonstrate how effective UX design transforms this process.

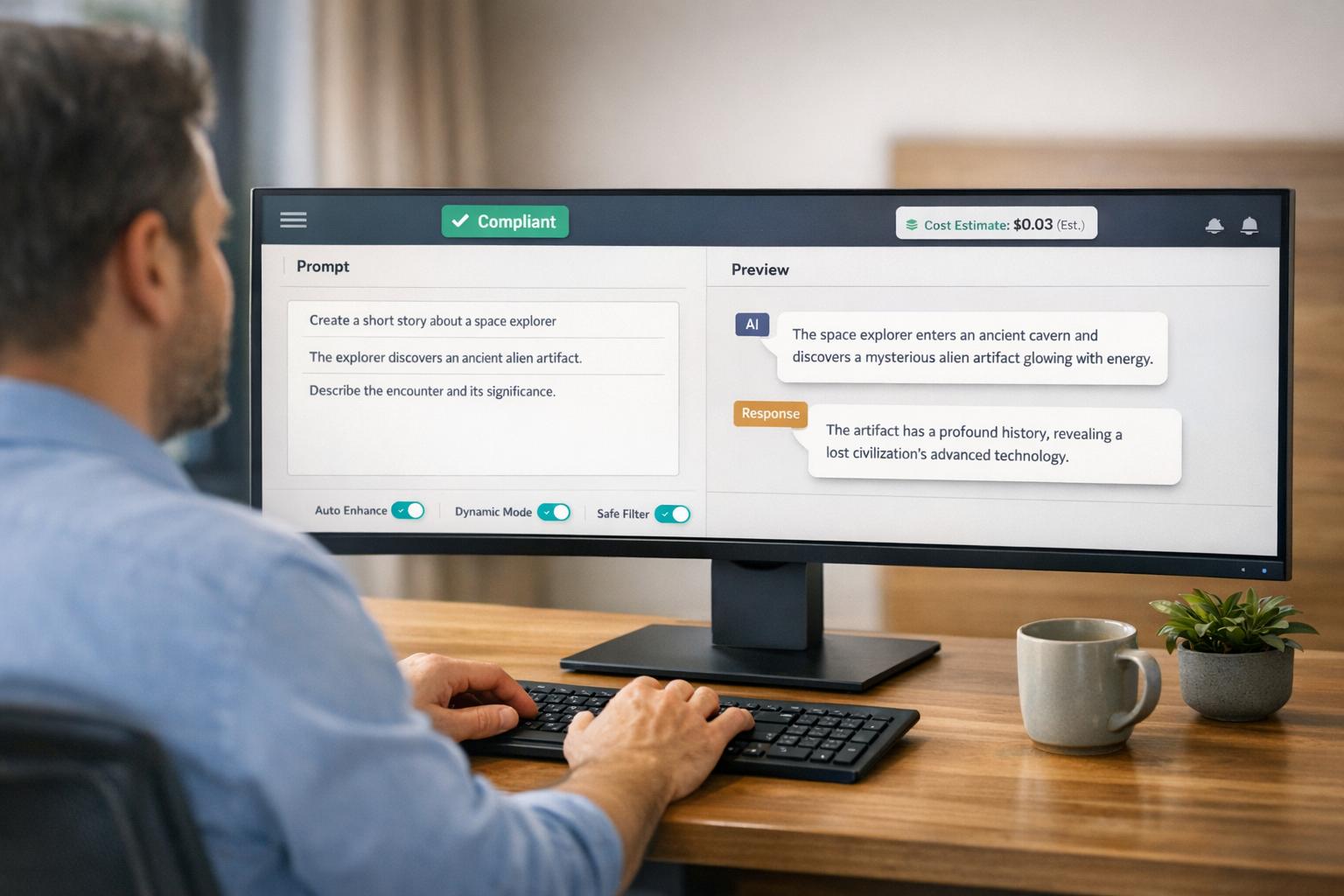

A clutter-free interface can make all the difference by prioritizing clarity over an overwhelming array of options. For instance, in October 2024, LangChain introduced its "Prompt Canvas", a dual-panel layout designed to simplify the workflow. The layout separates conversational testing (via the Chat Panel) from the editing workspace, so users can refine prompts and test AI responses simultaneously. This removes the need to toggle between screens or navigate complex menus, keeping the focus on the task at hand.

Additionally, features like collapsible metadata and streamlined layouts reduce visual clutter. By using progressive disclosure, advanced options are revealed only when necessary, preventing users from feeling overwhelmed. This design principle allows experts to craft precise prompts without being bogged down by unnecessary controls.

Automation plays a key role in turning prompt engineering into a faster, less repetitive process. In 2024, Promptitude introduced "Wizard Mode", which breaks down complex prompt forms into manageable, step-by-step stages. This feature is particularly helpful for non-technical users in enterprise settings who need to provide detailed inputs without technical expertise.

Another time-saving feature is Custom Quick Actions, which lets expert users create one-click templates for common tasks. Teams can set up actions like "reformat for brand voice" or "adjust reading level", enabling even less experienced members to apply organizational standards with ease. This feature not only speeds up prompt iteration but also ensures consistency across the team, blending flexibility with governance requirements.

Without real-time feedback, users are left guessing when refining prompts. Tools that offer side-by-side comparisons of AI model outputs - such as GPT-4, Claude, or Gemini - help teams make informed decisions about model selection and optimization. This approach moves beyond general transparency, offering actionable insights for better results.

"Non-expert users may find it challenging to identify the changes needed to improve a prompt, especially when they lack domain-specific knowledge and lack appropriate feedback." - Aditi Mishra, Lead Author, arXiv:2304.01964

Visual analytics further enhance the process by illustrating how changes like keyword tweaks or paraphrasing impact output quality. Batch evaluation tools allow teams to test prompts against sample datasets, ensuring that updates don’t inadvertently degrade performance. This level of insight is especially critical for high-stakes applications, where even small errors can have serious consequences, reinforcing the importance of governance and reliability in enterprise workflows.
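A batch evaluation of this kind can be sketched in a few lines. This is a minimal illustration, not any tool's actual implementation: `SAMPLES`, `pass_rate`, `is_regression`, and the keyword-based pass criterion are all assumptions, and `fake_model` is a stand-in for a real model call.

```python
from typing import Callable

# Hypothetical sample dataset: each case pairs an input with a keyword
# that an acceptable output is expected to contain.
SAMPLES = [
    {"input": "Refund request for order 1001", "expect": "refund"},
    {"input": "Password reset not working", "expect": "password"},
]


def pass_rate(template: str, run_prompt: Callable[[str], str]) -> float:
    """Return the fraction of samples whose output contains the expected keyword."""
    passed = 0
    for case in SAMPLES:
        output = run_prompt(template.format(text=case["input"]))
        if case["expect"] in output.lower():
            passed += 1
    return passed / len(SAMPLES)


def is_regression(old: str, new: str, run_prompt: Callable[[str], str]) -> bool:
    """Flag the new prompt version if it scores lower than the old one."""
    return pass_rate(new, run_prompt) < pass_rate(old, run_prompt)


def fake_model(prompt: str) -> str:
    """Stand-in for a model call (assumption): simply echoes the prompt."""
    return prompt
```

In practice the pass criterion would likely be richer (rubric scoring, exact-match checks, or human review), but the gate is the same: an update that lowers the score on the sample set is held back.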

Creating enterprise-grade prompt software involves more than just functionality - it’s about enabling seamless team workflows and ensuring governance on a large scale. For big organizations, the software must support collaboration, enforce strong security, and manage multiple models effectively, all while keeping the user interface simple and intuitive. By refining the UX in prompt engineering, companies can enhance individual productivity while promoting enterprise-wide collaboration and control.

Shared workspaces are essential for keeping prompt assets organized and easily accessible. They prevent data from being scattered by centralizing prompts into well-structured folders and tags, allowing teams to organize assets by project, team, or model.

Modular prompt design simplifies the management of frequently used content. For example, reusable snippets can store items like legal disclaimers, database schemas, or brand guidelines in one place. This ensures that updates - such as a compliance team modifying a HIPAA disclosure - automatically reflect across all related prompts, maintaining consistency across departments.
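One way to picture reusable snippets is a shared library that prompts reference by name and that is expanded only at render time. The sketch below is illustrative: the `{{snippet:NAME}}` syntax, the snippet names, and the disclosure wording are all made up for the example.

```python
import re

# Hypothetical shared snippet library, maintained in one place.
SNIPPETS = {
    "hipaa_disclosure": "This response does not constitute medical advice.",
    "brand_voice": "Write in a friendly, concise tone.",
}

# Prompts reference snippets by name using a made-up {{snippet:NAME}} syntax.
PROMPTS = {
    "patient_faq": (
        "{{snippet:brand_voice}} Answer the patient's question. "
        "{{snippet:hipaa_disclosure}}"
    ),
    "intake_summary": "Summarize the intake form. {{snippet:hipaa_disclosure}}",
}

_SNIPPET_REF = re.compile(r"\{\{snippet:(\w+)\}\}")


def render(prompt_name: str) -> str:
    """Expand snippet references at render time so edits propagate everywhere."""
    return _SNIPPET_REF.sub(lambda m: SNIPPETS[m.group(1)], PROMPTS[prompt_name])
```

Because the prompts store only the reference, changing `SNIPPETS["hipaa_disclosure"]` once updates every prompt that uses it the next time it is rendered.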

Version control is another critical feature, offering transparency in how prompts evolve. Immutable logs keep track of changes, and diffing tools allow side-by-side comparisons of different versions. Requiring descriptive commit messages for updates creates a clear trail, which is invaluable for debugging, compliance checks, or addressing unexpected AI behavior. These features naturally integrate into secure workflows that prioritize accountability.
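The versioning ideas above (append-only history, required commit messages, diffs between versions) might look roughly like this. `PromptVersion` and `PromptHistory` are hypothetical names for the sketch; the diff itself uses Python's standard `difflib` module.

```python
import difflib
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: a saved version cannot be mutated afterwards
class PromptVersion:
    number: int
    text: str
    message: str


class PromptHistory:
    """Append-only history of prompt versions (hypothetical structure)."""

    def __init__(self) -> None:
        self._versions: list[PromptVersion] = []

    def commit(self, text: str, message: str) -> PromptVersion:
        """Store a new version; an empty commit message is rejected."""
        if not message.strip():
            raise ValueError("a descriptive commit message is required")
        version = PromptVersion(len(self._versions) + 1, text, message)
        self._versions.append(version)
        return version

    def diff(self, a: int, b: int) -> str:
        """Unified diff between version numbers a and b (1-based)."""
        old, new = self._versions[a - 1], self._versions[b - 1]
        lines = difflib.unified_diff(
            old.text.splitlines(),
            new.text.splitlines(),
            fromfile=f"v{a}",
            tofile=f"v{b}",
            lineterm="",
        )
        return "\n".join(lines)
```

A production system would persist versions in a database and record the author as well, but the core contract is the same: versions are never edited in place, only appended.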

Release labels (e.g., Development, Staging, Production) provide a controlled environment for testing prompts before they go live. This reduces the risk of untested changes affecting end-users.
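Release labels can be thought of as named pointers to specific prompt versions. The sketch below assumes a simple in-memory registry and a fixed promotion order; `VERSIONS`, `LABELS`, `get_prompt`, and `promote` are illustrative names rather than any product's API.

```python
# Hypothetical registry: each prompt keeps numbered versions, and release
# labels are pointers to one of those versions.
VERSIONS = {
    "support_reply": {
        1: "Answer politely.",
        2: "Answer politely and cite the policy.",
    },
}
LABELS = {
    "support_reply": {"development": 2, "staging": 2, "production": 1},
}
PROMOTION_ORDER = ["development", "staging", "production"]


def get_prompt(name: str, label: str = "production") -> str:
    """Resolve a label to the prompt text it currently points at."""
    return VERSIONS[name][LABELS[name][label]]


def promote(name: str, to_label: str) -> None:
    """Move a label to the version currently held by the previous stage."""
    idx = PROMOTION_ORDER.index(to_label)
    if idx == 0:
        raise ValueError("development is the first stage; nothing to promote from")
    LABELS[name][to_label] = LABELS[name][PROMOTION_ORDER[idx - 1]]
```

Application code that always calls `get_prompt(name)` keeps serving version 1 until someone explicitly runs `promote(name, "production")`, which is what keeps untested edits away from end users.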

Role-Based Access Control (RBAC) ensures that users operate within their designated roles, protecting production environments. Common roles include Admins (with full control over settings and billing), Editors (who can create and modify prompts), and Prompt Users (who can only execute prompts). This approach minimizes risks by restricting production changes to authorized individuals, adhering to the principle of least privilege.
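The three roles described above map naturally onto a permission table. The following is a minimal sketch; the permission names and the exact set granted to each role are assumptions for illustration.

```python
from enum import Enum


class Role(Enum):
    ADMIN = "admin"
    EDITOR = "editor"
    PROMPT_USER = "prompt_user"


# Permission names are illustrative assumptions, not taken from any product.
PERMISSIONS = {
    Role.ADMIN: {"manage_settings", "manage_billing", "edit_prompt", "run_prompt"},
    Role.EDITOR: {"edit_prompt", "run_prompt"},
    Role.PROMPT_USER: {"run_prompt"},
}


def is_allowed(role: Role, action: str) -> bool:
    """Least privilege: an action is denied unless the role explicitly lists it."""
    return action in PERMISSIONS[role]


def require(role: Role, action: str) -> None:
    """Raise if the role lacks the permission; call before a sensitive operation."""
    if not is_allowed(role, action):
        raise PermissionError(f"{role.value} may not {action}")
```

Defaulting to denial means a newly added action is unavailable to everyone until it is deliberately granted.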

"At scale, prompt governance breaks down when teams can't clearly control who changed what, why it changed, or how it reached production."

– PromptLayer

Audit logs are indispensable for tracking every change made to prompts, including the author, timestamp, and content history. These logs streamline debugging, compliance reviews, and incident investigations, sparing teams from piecing together events from scattered documentation.
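As a rough sketch of what such a log records, the example below keeps an append-only, in-memory list of change entries with author, timestamp, and content history. The `AuditLog` class and its field names are hypothetical; a real system would write to durable, tamper-resistant storage.

```python
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only record of prompt changes (in-memory sketch)."""

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def record(self, prompt: str, author: str, old: str, new: str) -> dict:
        """Append one change entry with a UTC timestamp."""
        entry = {
            "prompt": prompt,
            "author": author,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "old_content": old,
            "new_content": new,
        }
        self._entries.append(entry)
        return entry

    def history(self, prompt: str) -> list[dict]:
        """All recorded changes to one prompt, oldest first."""
        return [e for e in self._entries if e["prompt"] == prompt]

    def export(self) -> str:
        """Serialize every entry as JSON Lines, e.g. for a compliance review."""
        return "\n".join(json.dumps(e) for e in self._entries)
```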

Single sign-on (SSO) and centralized API key management enhance security by reducing the reliance on distributing credentials to individual developers. With an "AI Connections" dashboard, teams can manage access efficiently, ensuring better control while simplifying onboarding for new members.

Scalable designs are essential for organizations managing multiple models and departments. These designs build on collaboration and security features to handle complex, multi-layered environments.

Provider-agnostic design allows teams to define a prompt once and use it across various models - like GPT-4, Claude, Gemini, or LLaMA - without needing to rewrite instructions for each. This flexibility avoids vendor lock-in and makes it easier to switch providers based on pricing or performance. Additionally, routing requests to specific regions (e.g., EU-West) helps meet data residency requirements without altering the prompt structure.
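The idea of defining a prompt once and routing it to different models or regions can be sketched as a provider-neutral prompt spec combined with a routing table. Everything here is hypothetical: the `PromptSpec` schema, the provider and model identifiers, and the request shape do not correspond to any real provider's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptSpec:
    """Provider-neutral prompt definition (hypothetical schema)."""
    system: str
    user_template: str


# Routing table with made-up provider names, model identifiers, and regions.
ROUTES = {
    "default": {"provider": "provider_a", "model": "model-large", "region": "us-east"},
    "eu": {"provider": "provider_b", "model": "model-medium", "region": "eu-west"},
}


def build_request(spec: PromptSpec, route: str, **variables: str) -> dict:
    """Combine one prompt definition with a route; the prompt text never changes."""
    target = ROUTES[route]
    return {
        **target,
        "messages": [
            {"role": "system", "content": spec.system},
            {"role": "user", "content": spec.user_template.format(**variables)},
        ],
    }
```

Switching provider or region then becomes a one-line change to the routing table, while the prompt itself stays untouched. A real integration would add a thin adapter per provider to translate this neutral request into that provider's actual format.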

Hierarchical organization separates tools into top-level Organizations (for billing and global settings) and project-level Workspaces (for team-specific resources). This setup allows departments to maintain their own environments while sharing resources like snippet libraries or approved templates.

"Key stakeholders do not need to be developers, empower them to contribute and lead your prompts."

– Jared Zoneraich, Founder of PromptLayer

A centralized Prompt Registry or CMS further streamlines workflows by decoupling prompts from application code. This setup enables non-technical stakeholders - such as product managers, legal teams, or subject-matter experts - to refine and update prompts without waiting on engineering teams. By treating prompts as standalone assets that can be versioned, tested, and deployed independently, organizations can speed up AI feature development while maintaining strong governance practices.

Building on intuitive design and streamlined workflows, better UX offers tangible advantages for enterprises.

Streamlined software design shortens the learning curve that can slow AI adoption. By moving beyond basic chat interfaces to structured "Canvas" layouts - featuring dual panels for conversations and real-time editing - teams can refine prompts alongside LLM agents, significantly reducing iteration times. This setup allows non-technical teams, like legal or content departments, to visually manage prompts without needing to code, accelerating feedback cycles for specialized AI tasks.

Custom quick actions further simplify complex processes. These one-click commands embed expert-level standards, enabling team members to meet advanced requirements without additional training or expertise.

Real-time FinOps dashboards integrated into prompt engineering tools provide detailed insights into LLM usage and token consumption. These dashboards highlight spending patterns, identify cost-driving prompts, and assess model efficiency, helping enterprises make cost-conscious adjustments. For example, teams can switch to more efficient models, shorten verbose prompts, or cache commonly used responses to prevent costs from escalating.
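The arithmetic behind such a dashboard is straightforward: multiply token counts by per-token prices and aggregate by prompt. The sketch below uses made-up model names and prices purely for illustration; actual prices vary by provider and change over time.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1,000 tokens; real prices vary by provider.
PRICE_PER_1K = {
    "large-model": {"input": 0.01, "output": 0.03},
    "small-model": {"input": 0.001, "output": 0.002},
}


def call_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost of a single call in USD."""
    price = PRICE_PER_1K[model]
    return (input_tokens * price["input"] + output_tokens * price["output"]) / 1000


def cost_by_prompt(usage: list[dict]) -> dict[str, float]:
    """Total spend per prompt, most expensive first."""
    totals: dict[str, float] = defaultdict(float)
    for row in usage:
        totals[row["prompt"]] += call_cost(
            row["model"], row["input_tokens"], row["output_tokens"]
        )
    return dict(sorted(totals.items(), key=lambda kv: kv[1], reverse=True))
```

Sorting by total spend surfaces the cost-driving prompts first, which is where shortening a verbose prompt or switching to a cheaper model pays off most.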

Batch evaluations further enhance efficiency by detecting performance issues before deployment. This ensures high-quality outputs while avoiding costly rework. By consolidating version-specific cost impacts and performance metrics, teams can make data-driven decisions about model selection and prompt optimization, cutting waste while upholding quality standards.

Centralized management tools and detailed audit trails simplify oversight of enterprise AI operations. Every prompt change is logged with details like author, timestamp, and content history, allowing compliance teams to track decisions without sifting through scattered records.

"Promptions allows users to explore how AI outputs shift with different refinement choices, offering a lightweight way to probe bias, model boundaries, or failure modes through UI interaction."

Dynamic UI controls, such as sentiment sliders or style toggles, enhance auditability by providing structured, trackable inputs for dataset creation and labeling. These tools ensure consistent decision-making that compliance officers can easily review. Additionally, visual comparison tools let teams assess how prompt adjustments affect output quality and safety. This proactive approach helps identify risks early, easing compliance efforts and minimizing exposure to unpredictable AI behaviors.

With clean interfaces, automated workflows, and real-time feedback, enterprises can achieve measurable improvements in how they use AI. Thoughtfully crafted platforms allow for quicker iteration cycles, lower token costs, and better compliance management. By replacing manual trial-and-error processes with dynamic controls for refining prompts, teams can move from frustration to productive collaboration.

The need for such tools is clear: only 5% of users can articulate complex AI requirements without help, and nearly half of U.S. adults face challenges in writing detailed prose. Features like style galleries, sliders, and structured templates help bridge this gap, empowering the workforce to use AI more effectively.

Platforms offering centralized prompt catalogs, version control, and real-time FinOps dashboards make a direct impact on costs and efficiency. These tools prevent budget overruns, maintain compliance, and distribute expert knowledge across teams. For example, they can cut deployment times from over a minute to nearly instantaneous, reduce compliance violations by 75%, and free up 20–30% of team capacity for more innovative work.

As demonstrated, a well-designed user experience not only simplifies workflows but also ensures secure and efficient operations. Choosing an enterprise-ready platform that aligns with your goals can turn AI into a governed, cost-efficient engine for productivity.

Key features in prompt tools focus on making the user experience as smooth and efficient as possible. These include intuitive interfaces that simplify the process of creating prompts and streamlined workflows designed to automate common best practices. Tools often incorporate dynamic controls, such as toggles and checkboxes, allowing users to fine-tune settings with precision. Additional capabilities like prompt sharing, reuse, and visual analytics further improve collaboration and boost overall efficiency. By reducing complexity and encouraging iterative improvements, these features make prompt engineering approachable and productive, even for those without advanced expertise.

Non-technical teams can improve the quality of their prompts by adopting structured methods. Frameworks that include clear context, detailed instructions, specific rules, and relevant examples help guide the process. Another effective technique is iterative refinement - beginning with a simple prompt and tweaking it based on the AI's responses to achieve better outcomes. Additionally, tools with intuitive interfaces simplify the process, enabling teams to work together and fine-tune prompts efficiently, all without needing technical skills.
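A structured framework like the one described (context, instructions, rules, examples) can be captured as a small template object. This is one possible sketch; the `StructuredPrompt` class and its section labels are illustrative choices, not a standard.

```python
from dataclasses import dataclass, field


@dataclass
class StructuredPrompt:
    """Prompt assembled from four labeled sections (hypothetical layout)."""
    context: str
    instructions: str
    rules: list[str] = field(default_factory=list)
    examples: list[tuple[str, str]] = field(default_factory=list)

    def render(self) -> str:
        """Join the sections in a fixed order, skipping any that are empty."""
        parts = [
            f"Context:\n{self.context}",
            f"Instructions:\n{self.instructions}",
        ]
        if self.rules:
            parts.append("Rules:\n" + "\n".join(f"- {r}" for r in self.rules))
        if self.examples:
            shots = "\n\n".join(
                f"Input: {i}\nOutput: {o}" for i, o in self.examples
            )
            parts.append("Examples:\n" + shots)
        return "\n\n".join(parts)
```

Iterative refinement then amounts to starting with only `context` and `instructions`, reviewing the AI's response, and adding rules or examples where the output falls short.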

Enterprises should establish governance controls by creating community-maintained prompt repositories. These repositories should include detailed metadata, licensing information, moderation protocols, and measures to prevent dominance by any single group or perspective. Additionally, prompt management systems should incorporate features like version control, evaluation tools, and collaborative frameworks. This approach promotes accountability while ensuring prompts align with local values and priorities.