AI is transforming how businesses operate, but it introduces new security risks, including data poisoning, model manipulation, and unauthorized tools. Organizations need robust solutions to protect sensitive workflows while staying competitive. This article highlights five leading platforms that secure AI processes without sacrificing efficiency or control: Prompts.ai, Check Point Infinity AI, Lasso Security, Palo Alto Prisma AIRS, and Zscaler Generative AI Security.

Each platform offers unique strengths in monitoring, compliance, and integration, helping businesses manage AI risks while optimizing workflows. Below, we detail their features, benefits, and trade-offs to help you choose the right solution.

Comparison of Top 5 AI Security Platforms: Features and Performance Metrics

Prompts.ai brings together over 35 large language models (LLMs) - including GPT-5, Claude, LLaMA, and Gemini - within a single, secure platform. This approach tackles the challenge of tool sprawl, where scattered AI applications make it difficult to maintain oversight and enforce governance.

By consolidating AI tools, Prompts.ai gives security teams a centralized view of all AI activity. Instead of juggling disconnected or unauthorized applications, IT leaders can confidently manage every generative AI system, whether used for internal productivity or customer-facing interactions.

Every prompt and response is filtered through a real-time inspection layer. This process helps prevent sensitive data from being exposed and ensures objectionable content is blocked before it reaches users or customers.
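As a rough illustration of what an inline inspection layer does, the sketch below wraps a model call so that both the prompt and the response pass through the same checks. It is not Prompts.ai's implementation: the email pattern, the `BLOCKED_TERMS` set, and the `guarded_call` and `echo_model` names are all hypothetical stand-ins.

```python
import re
from typing import Callable

# Hypothetical detectors; a real deployment would use vetted classifiers.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
BLOCKED_TERMS = {"forbidden-term"}  # placeholder for objectionable content


def redact(text: str) -> str:
    """Mask email addresses before text crosses the inspection boundary."""
    return EMAIL.sub("[REDACTED_EMAIL]", text)


def is_blocked(text: str) -> bool:
    """Return True if the text contains any blocked term (case-insensitive)."""
    lowered = text.lower()
    return any(term in lowered for term in BLOCKED_TERMS)


def guarded_call(prompt: str, model: Callable[[str], str]) -> str:
    """Inspect the prompt, call the model, then inspect the response."""
    if is_blocked(prompt):
        return "[BLOCKED_PROMPT]"
    response = model(redact(prompt))
    if is_blocked(response):
        return "[BLOCKED_RESPONSE]"
    return redact(response)


def echo_model(prompt: str) -> str:
    """Stand-in for a real LLM call."""
    return f"echo: {prompt}"


print(guarded_call("mail alice@example.com", echo_model))
```

Because the wrapper takes the model as a plain callable, the same checks can sit in front of any provider without changing the calling code.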

The platform’s FinOps layer monitors token usage across all models, linking AI expenses directly to results. With pay-as-you-go TOKN credits replacing traditional subscription fees, organizations can cut AI software costs by as much as 98%.
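To make the idea of linking token usage to spend concrete, here is a minimal sketch of a per-team usage ledger. The `TokenLedger` class, the model names, and the prices are illustrative assumptions, not the platform's actual FinOps API or TOKN pricing.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class TokenLedger:
    # Hypothetical price table: credits per 1,000 tokens, keyed by model name.
    prices_per_1k: dict[str, float]
    usage: dict[tuple[str, str], int] = field(
        default_factory=lambda: defaultdict(int)
    )

    def record(self, team: str, model: str, tokens: int) -> None:
        """Add tokens consumed by a team on a given model."""
        if model not in self.prices_per_1k:
            raise KeyError(f"no price configured for {model}")
        self.usage[(team, model)] += tokens

    def cost_by_team(self) -> dict[str, float]:
        """Roll usage up into a credit total per team."""
        totals: dict[str, float] = defaultdict(float)
        for (team, model), tokens in self.usage.items():
            totals[team] += tokens / 1000 * self.prices_per_1k[model]
        return dict(totals)


ledger = TokenLedger({"model-a": 2.0, "model-b": 0.5})
ledger.record("support", "model-a", 1500)
ledger.record("support", "model-b", 2000)
ledger.record("research", "model-a", 500)
print(ledger.cost_by_team())
```

Keying usage by team and model is one simple way to attribute spend; a production system would likely also track time windows and budgets.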

Prompts.ai automatically generates audit trails for each workflow, simplifying compliance with industry regulations. This built-in governance ensures AI operations are secure and meet required standards from day one.

Together, these features create a secure and efficient foundation for managing AI workflows.

Check Point Infinity AI brings together on-premises, cloud, and SASE security under one platform. Designed to simplify and strengthen security for AI workflows, it offers real-time threat monitoring and detailed policy controls. Its centralized interface, the Infinity Portal, eliminates the fragmented management systems that can delay threat responses.

At the heart of Infinity AI's monitoring features is Infinity AIOps, which tracks critical metrics like CPU and memory usage across the security ecosystem. When a threat is detected, Infinity Playblocks launches automated responses to neutralize the attack immediately. Additionally, ThreatCloud AI, powered by over 50 AI engines, shares threat intelligence globally in less than 2 seconds. This results in an impressive 99.9% malware block rate and 99.7% phishing block rate.

"With Infinity XDR and Playblocks we are able to see attacks in real-time and it's given us the ability to stop attacks in their tracks. It shortens our time to respond significantly." - Milinko Milincic, Director of Technology, Information Security Architect, Fast Pace Health

The platform supports over 250 pre-validated integrations with popular third-party tools like Microsoft Defender, Microsoft Entra ID, and CrowdStrike Falcon. This allows administrators to reuse policy objects across Quantum Gateways, CloudGuard Network, and Harmony SASE, ensuring consistent controls while reducing operational workload. The Infinity AI Copilot further streamlines processes, cutting administrative task time by up to 90% with natural language-based policy creation and troubleshooting.

In addition to its threat management capabilities, Check Point Infinity AI offers tools for ensuring compliance across GenAI, SaaS, IaaS, and network gateways. The Quantum Policy Auditor automatically detects policies that may violate organizational or regulatory standards, while centralized logging simplifies regulatory reporting. The Infinity AI Copilot adds another layer of support by analyzing access control policies to pinpoint conflicts or misconfigurations that could jeopardize compliance.

Lasso Security is designed to enhance agentic AI workflows, leveraging the Model Context Protocol (MCP). Acting as an inline security gateway, it monitors traffic between users, applications, and LLMs in real-time. By making decisions at the moment of execution, it ensures proactive protection rather than reactive fixes.

Lasso’s model-agnostic design works seamlessly across various AI providers and frameworks. This eliminates the need for separate security setups for individual systems. By supporting a broad range of models, the platform enforces consistent security policies, enabling real-time threat detection without sacrificing efficiency.

At the heart of Lasso is its RapidClassifier™ engine, which processes every prompt, tool call, and output in under 50 milliseconds using a three-tier detection system.

This layered approach delivers an impressive 99.8% accuracy rate for analyzing content, context, and intent, while maintaining verdict latencies under 200 milliseconds. This performance far exceeds the typical 300–1,500 millisecond latencies of most commercial systems.

"Lasso's investigative tools have been incredibly valuable. But they also help to prevent risks proactively by educating our employees about responsible AI usage." - Itzik Menashe, CISO & VP IT Productivity, Telit Cinterion

Lasso Security simplifies integration without disrupting existing workflows. Its open-source security gateway for MCP links LLMs to databases, APIs, and internal tools via a structured interface. Developers can activate Lasso through Gateway, API, or SDK with just one line of code. Additionally, it integrates smoothly with tools like SIEM, SOAR, ticketing systems, and messaging platforms, creating unified telemetry and a consistent audit trail across diverse environments.

By partnering with Scytale, Lasso has bolstered its compliance and governance capabilities. The platform captures detailed records of inputs, context, retrieved data, and applied policies at the moment of execution. This level of traceability enables security teams to reconstruct incidents and demonstrate compliance to regulators. With a custom policy generator and a library of pre-built policies, organizations can meet industry standards efficiently. Moreover, Lasso offers a 570x cost advantage over traditional cloud-native guardrails, making continuous monitoring both effective and cost-efficient.
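One common way to make execution-time records usable for incident reconstruction is to chain them by hash, so that later tampering is detectable. The sketch below shows that general technique; it is an assumption for illustration, not a description of how Lasso stores its records, and the `AuditLog` class and its field names are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(body: dict) -> str:
    """Hash a record body deterministically."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    def append(self, prompt: str, context: str, policies: list[str]) -> dict:
        """Record what was seen and which policies applied at execution time."""
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"prompt": prompt, "context": context,
                "policies": policies, "prev": prev_hash}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Check that no entry was altered or reordered."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("q1", "doc-1", ["no-pii"])
log.append("q2", "doc-2", ["no-pii", "no-secrets"])
print(log.verify())
```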

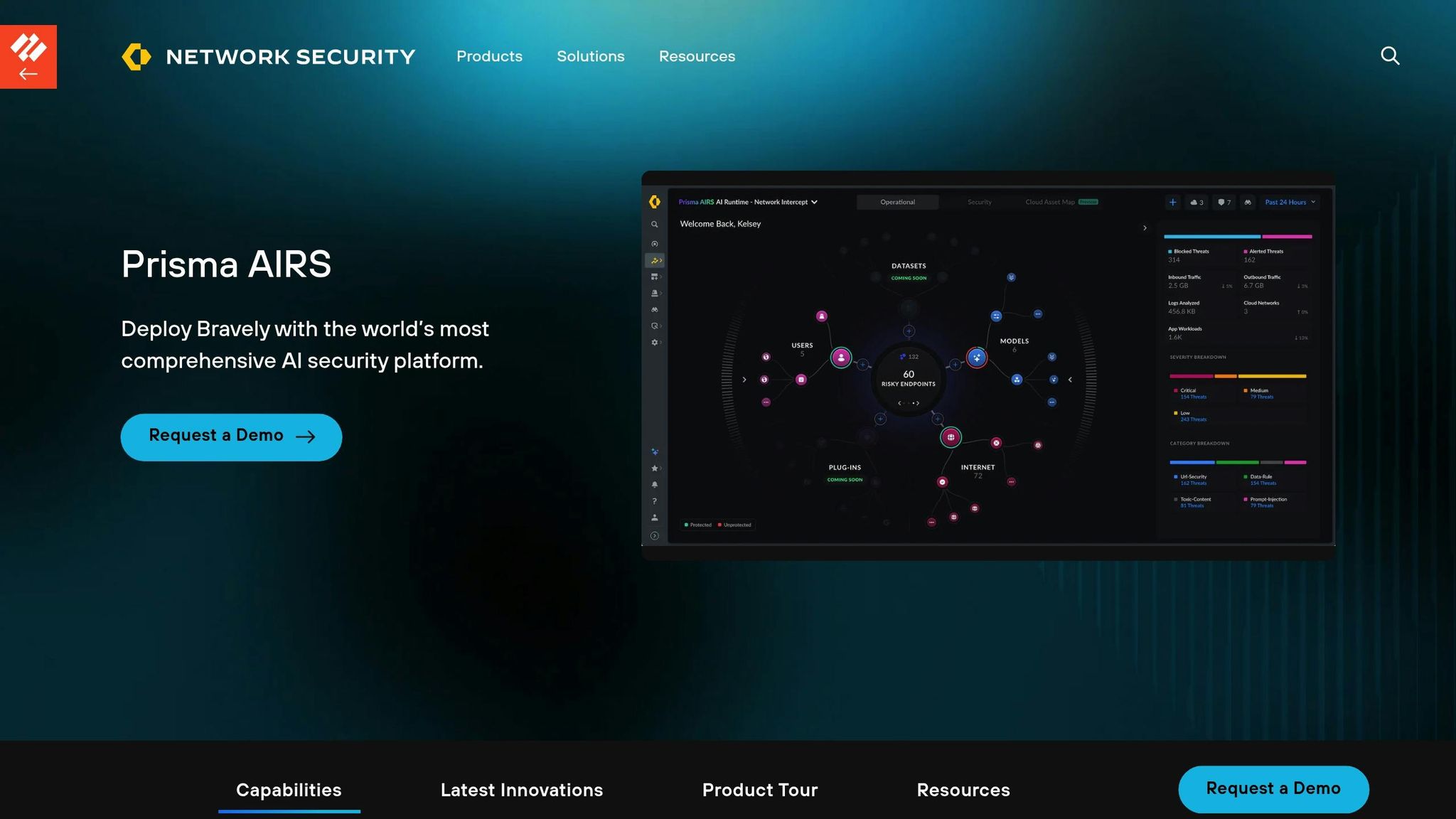

Palo Alto Prisma AIRS secures AI-driven workflows with a dual-layer approach: an AI Runtime Firewall for network-level defenses and an AI Runtime API that integrates security into application code. This setup tackles threats like prompt injections, sensitive data leaks, and model denial-of-service attacks in real time. It’s designed to work seamlessly across both public and private AI models without needing specific adjustments, making it ideal for organizations managing diverse AI systems. The dual-layer structure forms the backbone of the platform’s robust security features outlined below.

The AI Runtime Firewall acts as an inline security layer, continuously inspecting cloud networks for AI-focused threats. It identifies and mitigates risks such as data poisoning, lateral movement, and malicious responses before they disrupt workflows. For autonomous AI systems, the platform pays special attention to challenges like identity impersonation, memory tampering, and misuse of tools - critical issues as AI agents gain more independence.

"What we're excited to see is the ability for us to now look and see how API calls are being made. We can look at malicious detections that are being performed by agent AI tools across the board." - Steve Jablonski, CISO, TELUS Digital

The platform supports payloads of up to 2 MB for synchronous scans and 5 MB for asynchronous requests, with the capability to scan up to 100 URLs per request. In January 2026, Palo Alto expanded its offerings with Brand Reputation Risk Detection and Executive AI Reporting, enhancing its monitoring scope.
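A client could check these limits before sending a request. The sketch below is a hypothetical pre-flight check, not part of the Prisma AIRS SDK: the function name is invented, and it assumes 1 MB means 1,048,576 bytes, which the source does not specify.

```python
# Limits taken from the text; the byte interpretation of "MB" is an assumption.
SYNC_LIMIT_BYTES = 2 * 1024 * 1024
ASYNC_LIMIT_BYTES = 5 * 1024 * 1024
MAX_URLS = 100


def choose_scan_mode(payload: bytes, urls: list[str]) -> str:
    """Return "sync" or "async", or raise if the request exceeds the limits."""
    if len(urls) > MAX_URLS:
        raise ValueError(f"too many URLs: {len(urls)} > {MAX_URLS}")
    size = len(payload)
    if size <= SYNC_LIMIT_BYTES:
        return "sync"
    if size <= ASYNC_LIMIT_BYTES:
        return "async"
    raise ValueError(f"payload of {size} bytes exceeds the asynchronous limit")


print(choose_scan_mode(b"x" * 10, ["https://example.com"]))
```

Rejecting oversized requests on the client side avoids a round trip that would fail anyway, and makes the fallback from synchronous to asynchronous scanning explicit.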

Prisma AIRS integrates seamlessly with major cloud providers like AWS, Azure, and GCP through a unified security framework. It also supports the Model Context Protocol (MCP) via a dedicated server that functions as a centralized security gateway, compatible with any MCP-compliant client. Developers can utilize the Prisma AIRS Python SDK (compatible with Python 3.9 to 3.13) to embed scanning capabilities directly into their applications, enabling automated checks for prompts and responses. The platform allows up to 20 applications to be linked to a single deployment profile, simplifying management while ensuring uniform security policies. Additionally, automated deployment using Terraform templates minimizes configuration errors across multi-cloud setups.

The AI Model Security feature scans machine learning models against predefined security rules prior to deployment. This process identifies vulnerabilities such as arbitrary code execution and neural backdoors. The AI Red Teaming service simulates potential attacks and generates risk score reports, helping organizations streamline regulatory documentation. Centralized dashboards provide visibility into model inventories, dependencies, and lineage, creating audit trails that align with standards like SOC 2 and ISO 27001. Security logs capture detailed records of threat activity and API violations, aiding forensic investigations when incidents arise. These auditing tools lay the groundwork for a broader analysis of the platform’s strengths and trade-offs in the next section.

Zscaler Generative AI Security uses its Zero Trust Exchange platform to safeguard the entire AI lifecycle, spanning public tools like ChatGPT, private models, and embedded applications such as Microsoft Copilot. The platform tackles a growing challenge: AI/ML transactions within the Zscaler cloud surged by 3,464.6% year-over-year as of early 2025. During this time, enterprises blocked nearly 60% of these transactions due to security and governance concerns. This rapid growth has led to an ecosystem of over 3,400 AI applications, many of which operate without centralized oversight. Zscaler’s approach provides a structured framework backed by powerful real-time monitoring.

The platform’s AI Guard feature enables high-performance inline inspection, protecting against prompt injections, jailbreaks, and malicious URLs. Security teams gain detailed insights into every input and response, with dashboards highlighting app trends and flagged activities. Data Loss Prevention (DLP) tools, equipped with over 100 predefined dictionaries for PII, PHI, and source code, prevent sensitive data from being leaked in real time. For high-risk interactions, AI applications are displayed in a secure, isolated browser, where users can send prompts while clipboard actions, uploads, and downloads are restricted. Additionally, content moderation filters out toxic, off-topic, or competitive outputs to safeguard corporate reputation.
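Dictionary-based DLP can be pictured as a set of named pattern groups, each flagging one category of sensitive data. The sketch below uses three deliberately tiny, hypothetical dictionaries; Zscaler's predefined dictionaries are far more extensive, and their contents are not described in the source.

```python
import re

# Hypothetical, minimal dictionaries for illustration only.
DICTIONARIES: dict[str, list[re.Pattern]] = {
    "PII": [re.compile(r"\b\d{3}-\d{2}-\d{4}\b")],  # US SSN-like number
    "PHI": [re.compile(r"\b(diagnosis|prescription)\b", re.IGNORECASE)],
    "SOURCE_CODE": [re.compile(r"\b(def|class|import)\s+\w+")],
}


def classify(text: str) -> set[str]:
    """Return the names of every dictionary with at least one match."""
    return {
        name
        for name, patterns in DICTIONARIES.items()
        if any(pattern.search(text) for pattern in patterns)
    }


def allow_upload(text: str) -> bool:
    """Permit the text only if no dictionary matches."""
    return not classify(text)


print(classify("SSN 123-45-6789"))
```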

"We had no visibility into [ChatGPT]. Zscaler was our key solution initially to help us understand who were going to it, what were they uploading, and then providing awareness." - Jason Koler, CISO, Eaton Corporation

Zscaler's Workflow Automation feature guides users on best practices when using unsanctioned AI tools. The platform secures AI development environments by offering zero trust access and inline controls for AI IDEs that connect to infrastructure. By consolidating AI security into one platform, it eliminates the need for multiple standalone products, simplifying operations. It also maps and inventories the entire AI ecosystem, including shadow AI, models, and data pipelines, providing organizations with a clear view of their AI assets.

The platform complements its monitoring and integration capabilities with robust compliance auditing. The AI Audit Trail logs all user activity, including prompts, responses, and application usage, ensuring detailed forensic records. AI Security Posture Management (AI-SPM) identifies risks, misconfigurations, and data leaks across the AI lifecycle, promoting well-governed operations. Continuous compliance monitoring ensures adherence to regulations like GDPR, CCPA, and the AI Act. Interactive dashboards provide real-time insights into application trends and at-risk data across departments, while automated reporting simplifies audits and demonstrates regulatory compliance effectively.

Examining the capabilities of these platforms reveals the trade-offs involved in choosing the right solution. Prompts.ai stands out in its ability to unify over 35 large language models (LLMs), effectively reducing tool sprawl and cutting costs by up to 98%. Its FinOps layer offers real-time token tracking, providing full visibility into spending while safeguarding sensitive data.

On the other hand, Palo Alto Prisma AIRS shines with its real-time monitoring and zero-trust compliance auditing. Its stack-focused design strengthens integrated security but can pose challenges for organizations that rely on multi-vendor setups, potentially limiting flexibility.

The table below compares Prompts.ai and Palo Alto Prisma AIRS across key areas:

| Platform | LLM Unification | Real-Time Monitoring | Compliance Auditing | Cost Optimization | Workflow Interoperability |
|---|---|---|---|---|---|
| Prompts.ai | High (35+ Models) | Moderate | Moderate | High (98% cost reduction) | High (Centralized Workspace) |
| Palo Alto Prisma AIRS | Moderate (Stack-centric) | High (Precision AI) | High (Zero Trust focus) | Moderate | Low (Ecosystem lock-in) |

This comparison highlights the importance of balancing advanced security capabilities with predictable costs in AI workflow management. A recurring issue with specialized security platforms is their tendency to prioritize threat detection and compliance while neglecting cost predictability. While security is undeniably critical, managing expenses is equally essential for sustainable AI adoption.

As Jack Pittas, Co-founder of PK Cyber Solutions Inc., puts it:

"AI security is a two-sided story. On one hand, AI can offer tremendous cybersecurity benefits... On the other, it introduces a new cyber attack surface that needs protection."

For enterprises, the challenge lies in finding the right balance between robust security measures and financial control. With 81% of enterprise leaders feeling the pressure to speed up AI adoption despite security concerns, this balance becomes even more crucial.

Selecting an AI security solution that fits your workflow complexity, infrastructure, and budget is essential. Prompts.ai, for instance, stands out by bringing multiple language models together in a centralized workspace, offering cost control alongside seamless AI management. While some stack-specific solutions provide deep integration, they may come with the risk of vendor lock-in.

This balance between capabilities and cost is crucial in a world where data breaches cost an average of $4.88 million, and security teams face over 4,500 alerts daily. Security platforms that focus solely on threat detection without ensuring predictable costs may leave teams struggling to manage resources effectively. Prioritizing solutions that combine strong security features with operational efficiency is vital as AI-related threats and regulations continue to grow.

Take the time to map your data flows across SaaS tools and AI functionalities to identify areas where stringent security controls are needed. For teams managing complex workflows with multiple LLMs, look for platforms offering real-time token tracking and high interoperability. If your organization has a smaller security team, consider solutions that can automate triage and cut false positives by 90%, significantly improving efficiency.

The push toward autonomous security operations is gaining momentum, with 80% of analysts predicting it will become the standard. Your chosen platform should address current security challenges while being flexible enough to adapt to emerging threats. Whether it’s countering the 703% surge in AI-driven phishing attacks or addressing concerns about unregulated AI adoption - highlighted by 38% of enterprise leaders - the key to successful AI adoption lies in securing workflows with interoperable tools and maintaining control over budgets. This approach will be essential for navigating the evolving AI landscape in the years ahead.

To protect prompts and responses while keeping the user experience smooth, implement security measures that operate quietly in the background. By embedding security directly into the network infrastructure, you can provide real-time protection without requiring additional code or agents. Pair this with continuous monitoring, governance protocols, and a "secure by design" strategy to safeguard data pipelines and workflows without compromising efficiency. Incorporating Zero Trust principles further strengthens security without interrupting users.

To safeguard sensitive data when using AI tools, implement strong security practices such as AI Data Loss Prevention (DLP) solutions to oversee and manage data flow. Use encryption to secure data, set up strict access controls, and maintain detailed audit trails to track data activity throughout its lifecycle. Regularly evaluate and update security configurations, enforce privacy protocols, and stay prepared for emerging threats. These measures help protect data during AI operations while ensuring adherence to compliance standards.

You can monitor and manage AI token spending with tools built to track token usage in detail. These tools offer accurate cost tracking and control, helping you manage expenses without compromising the efficiency of your workflows.