Creating AI-powered applications is no longer just about building smarter models - it's about connecting them efficiently to the tools and workflows developers rely on. This article compares three platforms that simplify AI integration through interoperability and efficient connections: prompts.ai, Platform B, and Platform C.

Each platform addresses the challenges of authentication, rate limits, and multi-model workflows while prioritizing security, uptime, and developer tools. The right choice depends on your priorities - model access, cost management, or deployment flexibility. Below, we break down their features, strengths, and limitations to help you decide.

Prompts.ai makes integrating AI models effortless, requiring just a single line of code. With support for over 10,000 models - including OpenAI GPT-5.1, Google Imagen-4, and Black Forest Labs Flux-2-Pro - it eliminates the hassle of rewriting logic when switching between providers. This flexibility allows users to test different models quickly, creating a streamlined integration experience.

The platform’s unified API simplifies the challenges of managing separate authentication processes and API schemas for each provider. Prompts are treated like code with version control, enabling you to create, test, and deploy updates without altering your application logic. This approach allows prompt updates to roll out independently of your software release cycle, reducing engineering workload and lowering deployment costs.

Prompts.ai provides developers with advanced tools to optimize workflows. The JavaScript/TypeScript SDK (@promptcompose/sdk) enables programmatic prompt resolution, dynamic variable handling, and A/B testing directly in your codebase. Its Git-native workflow integrates with GitHub, syncing prompt versions and triggering deployments through pull requests. Automated A/B testing is built-in, running experiments and tracking performance automatically. Variants are resolved based on session IDs, eliminating the need for manual tracking.
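Resolving variants from session IDs usually means deterministic bucketing: hash the session ID, map the hash to a point in [0, 1), and pick the variant whose weight range contains that point. The sketch below illustrates the idea in Python using only the standard library. It is not the SDK's implementation; the function name `resolve_variant`, the `experiment` parameter, and the weight format are assumptions made for illustration.

```python
import hashlib


def resolve_variant(session_id: str, variants: dict[str, float],
                    experiment: str = "default") -> str:
    """Deterministically map a session ID to a variant name.

    `variants` maps variant name -> traffic weight (weights are normalized,
    so they do not have to sum to 1). Hypothetical helper, not an SDK call.
    """
    if not variants:
        raise ValueError("at least one variant is required")
    total = sum(variants.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")

    # Include the experiment name so one session can fall into different
    # buckets for different experiments.
    digest = hashlib.sha256(f"{experiment}:{session_id}".encode("utf-8")).digest()
    # Interpret the first 8 bytes as a number in [0, 1).
    point = int.from_bytes(digest[:8], "big") / 2**64

    cumulative = 0.0
    for name, weight in variants.items():
        cumulative += weight / total
        if point < cumulative:
            return name
    return name  # floating-point rounding guard: fall back to the last variant


if __name__ == "__main__":
    weights = {"control": 0.5, "shorter-prompt": 0.5}
    # The same session always gets the same answer, with no stored state.
    print(resolve_variant("session-123", weights))
    print(resolve_variant("session-123", weights))
```

Because the assignment is a pure function of the session ID, no per-user lookup table is needed, which is what removes the manual tracking.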

For production environments, efficient scaling and cost management are key. Prompts.ai uses a tiered API key system to support scaling needs. Development keys offer standard limits for testing, while production keys provide higher rate limits, enhanced SLAs, and advanced monitoring. To optimize performance and control costs, the platform advises using caching strategies like node-cache with a 5-minute TTL to reduce API calls and latency. Additional features include custom rate limits for API keys, IP or domain-based usage restrictions, and connection pooling to reuse SDK instances across multiple requests.
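The caching advice above names node-cache, which is a Node.js library. The same idea in Python is a small time-to-live cache: keep each resolved prompt for 300 seconds and only call the API again once the entry has expired. This is a minimal sketch; `TTLCache`, `get_or_load`, and the injectable `clock` are illustrative names, not part of any platform SDK.

```python
import time
from typing import Any, Callable


class TTLCache:
    """Tiny in-memory cache whose entries expire after `ttl_seconds`."""

    def __init__(self, ttl_seconds: float = 300.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._entries: dict[str, tuple[float, Any]] = {}

    def get_or_load(self, key: str, loader: Callable[[], Any]) -> Any:
        """Return the cached value, or call `loader` and cache its result."""
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # still fresh: no API call
        value = loader()  # expired or missing: fetch once and remember it
        self._entries[key] = (now + self._ttl, value)
        return value


if __name__ == "__main__":
    cache = TTLCache(ttl_seconds=300)

    def fetch_prompt() -> str:
        # Stand-in for a real API call (assumption for the demo).
        print("calling API...")
        return "You are a helpful assistant."

    cache.get_or_load("welcome-prompt", fetch_prompt)  # calls the loader
    cache.get_or_load("welcome-prompt", fetch_prompt)  # served from cache
```

Creating one cache (and one SDK client) at module level and reusing it across requests matches the connection-pooling advice in the same paragraph.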

Enterprise deployments benefit from robust security measures. Prompts.ai incorporates HMAC SHA256 webhook signature verification, IP and domain restrictions, and HTTPS-only communication to ensure data protection. Granular access control is supported with read-only keys for dashboards and monitoring tools, adhering to the principle of least privilege. For added security, regular key rotation every 90 days is recommended, with keys stored securely in environment variables instead of being hardcoded into applications.
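HMAC SHA256 webhook verification works the same way on most platforms: the sender computes an HMAC of the raw request body with a shared secret, and the receiver recomputes it and compares the two in constant time. The sketch below uses Python's standard `hmac` and `hashlib` modules. The hex encoding of the signature and the `WEBHOOK_SECRET` environment variable name are assumptions; check the platform's documentation for the exact header and format.

```python
import hashlib
import hmac
import os


def sign_payload(secret: bytes, payload: bytes) -> str:
    """Return the hex-encoded HMAC-SHA256 of the raw payload."""
    return hmac.new(secret, payload, hashlib.sha256).hexdigest()


def verify_signature(secret: bytes, payload: bytes, received_signature: str) -> bool:
    """Check a received signature against the one we compute ourselves.

    compare_digest runs in constant time, which avoids leaking how many
    leading characters of the signature were correct.
    """
    expected = sign_payload(secret, payload)
    return hmac.compare_digest(expected.encode("utf-8"),
                               received_signature.encode("utf-8"))


if __name__ == "__main__":
    # Read the secret from the environment instead of hardcoding it.
    # WEBHOOK_SECRET is a hypothetical variable name for this demo.
    secret = os.environ.get("WEBHOOK_SECRET", "dev-only-secret").encode("utf-8")
    body = b'{"event": "prompt.updated"}'
    signature = sign_payload(secret, body)  # normally sent by the platform
    print(verify_signature(secret, body, signature))         # True
    print(verify_signature(secret, body + b" ", signature))  # False
```

Always verify against the raw request bytes; re-serializing parsed JSON can change whitespace or key order and invalidate a correct signature.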

Clarifai is a robust AI lifecycle platform trusted by over 250,000 users globally. With more than 1 million AI models available, it offers a unified API capable of handling image, video, text, and audio data through a single integration point. By focusing on interoperability, Clarifai simplifies AI workflows, consolidating diverse tasks into one streamlined API.

Clarifai stands out by making model integration straightforward and efficient. Its Mesh workflow engine provides a low-code, drag-and-drop interface, allowing developers to connect multiple models visually and build workflows that run either sequentially or in parallel. The platform’s Reasoning Engine targets inference performance, with a reported 544 tokens per second of throughput and a 3.6-second Time to First Answer (TTFA). Deployment options include shared compute, dedicated cloud instances, and on-premise setups, offering flexibility to meet varying team needs.
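To see what the throughput and TTFA figures mean for a single request, a rough estimate is total time ≈ TTFA + output tokens ÷ throughput. The sketch below encodes that arithmetic. It assumes the 544 tokens per second rate applies steadily once output starts, which real workloads will only approximate; the function name is illustrative.

```python
def estimate_response_seconds(output_tokens: int,
                              ttfa_seconds: float = 3.6,
                              tokens_per_second: float = 544.0) -> float:
    """Rough end-to-end latency: wait for the first output, then stream the rest.

    Defaults are the figures quoted in the text; the constant-rate model
    is a simplifying assumption.
    """
    if output_tokens < 0:
        raise ValueError("output_tokens must be non-negative")
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return ttfa_seconds + output_tokens / tokens_per_second


if __name__ == "__main__":
    for tokens in (100, 544, 2000):
        print(f"{tokens:>5} tokens: ~{estimate_response_seconds(tokens):.2f} s")
```

Under this model, short answers are dominated by the 3.6-second startup, so throughput matters mainly for long generations.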

Clarifai offers SDKs for several programming languages, along with a Workbench that provides a unified interface for training, testing, and deploying models. Teams can develop custom detection models and train them directly on the platform at a rate of $4.00 per hour for single GPU tasks. These tools are paired with scalable pricing options, making it easier for projects of all sizes to grow.

Clarifai’s pricing structure begins with a Community Plan, which includes 1,000 free operations per month. The Essential Plan, costing $0 per month, supports up to 30,000 monthly requests and 15 requests per second. For $300/month, the Professional Plan offers a credit for up to 100,000 monthly requests and 100 requests per second. Enterprise plans provide unlimited API calls, support for over 1,000 requests per second, and a 99.99% SLA. GPU pricing is based on usage, with rates like $0.0708 per minute for NVIDIA L4 (24GB), $1.1467 per minute for NVIDIA H100 (80GB), and $0.0006 per minute for Xeon Platinum 8000 S. The Scale to Zero feature helps reduce costs by automatically shutting down idle resources.
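Per-minute GPU rates are easier to compare once converted to a monthly figure. The sketch below multiplies the quoted NVIDIA L4 rate by active minutes to contrast an always-on instance with one that scales to zero outside an 8-hour working window. The 30-day month and the 8-hour window are assumptions, and the calculation ignores cold-start time and any minimum billing increments.

```python
MINUTES_PER_HOUR = 60


def monthly_gpu_cost(rate_per_minute: float, active_hours_per_day: float,
                     days: int = 30) -> float:
    """Cost in USD for a GPU billed per minute while it is active."""
    if not 0 <= active_hours_per_day <= 24:
        raise ValueError("active_hours_per_day must be between 0 and 24")
    if rate_per_minute < 0 or days < 0:
        raise ValueError("rate and days must be non-negative")
    return rate_per_minute * active_hours_per_day * MINUTES_PER_HOUR * days


L4_RATE = 0.0708  # USD per minute, as quoted in the pricing above

always_on = monthly_gpu_cost(L4_RATE, active_hours_per_day=24)
scaled_to_zero = monthly_gpu_cost(L4_RATE, active_hours_per_day=8)

if __name__ == "__main__":
    print(f"always on:       ${always_on:,.2f}")
    print(f"8 h/day active:  ${scaled_to_zero:,.2f}")
```

With these assumptions the always-on instance costs about $3,058.56 per month and the 8-hours-a-day instance about $1,019.52, which is the kind of saving Scale to Zero is meant to capture.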

For organizations with stringent data residency needs, Clarifai supports VPC, on-premise, and air-gapped deployments. Its enterprise-grade infrastructure has handled billions of predictions, ensuring reliability and security. As noted by Forrester:

"A pioneer in deep learning-based computer vision, Clarifai can tackle near-real-time visual search, facial recognition use cases, and deployment in the most secure, air-gapped environments."

Dedicated cloud instances come with detailed access controls, ensuring sensitive AI workloads remain secure and compliant with organizational requirements.

Platform C takes a unique approach to managing the AI lifecycle by focusing on flexible deployment options and dynamic resource management. With serverless and dedicated inference capabilities, developers gain precise control over balancing costs and performance.

Platform C builds on its strong foundation with advanced workload orchestration tools designed to improve both cost management and performance reliability. Developers can orchestrate workloads across various environments, including shared compute, managed dedicated cloud instances, VPCs, on-premise setups, hybrid models, or edge deployments. Serverless inference is ideal for handling fluctuating workloads, while dedicated nodes ensure stable performance for production tasks. Features like autoscaling, rapid startup times, GPU fractioning, and scale-to-zero functionality keep performance steady during both high-demand and idle periods.
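Autoscaling with scale-to-zero comes down to one decision: how many replicas does the current load need, bounded by a floor and a ceiling? The sketch below shows a generic version of that rule. It is not Platform C's scheduler; the function name, the per-replica capacity figure, and the bounds are hypothetical.

```python
import math


def desired_replicas(requests_per_second: float, capacity_per_replica: float,
                     min_replicas: int = 0, max_replicas: int = 10) -> int:
    """Replicas needed for the current load, clamped to [min, max].

    min_replicas=0 enables scale-to-zero: with no traffic, nothing runs.
    A dedicated deployment would set min_replicas >= 1 for stable latency.
    """
    if capacity_per_replica <= 0:
        raise ValueError("capacity_per_replica must be positive")
    if requests_per_second < 0:
        raise ValueError("requests_per_second must be non-negative")
    if min_replicas > max_replicas:
        raise ValueError("min_replicas cannot exceed max_replicas")
    needed = math.ceil(requests_per_second / capacity_per_replica)
    return max(min_replicas, min(max_replicas, needed))


if __name__ == "__main__":
    for load in (0, 1, 120, 10_000):
        print(f"{load:>6} req/s -> {desired_replicas(load, capacity_per_replica=50)} replicas")
```

The trade-off is visible in the floor: a floor of zero saves money during idle periods but makes the first request after idle pay the startup cost, which is why rapid startup times matter for serverless inference.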

With access to over 11,000 pre-trained concepts, Platform C helps developers avoid the high costs of training models from scratch. Model training costs $4.00 per hour for single GPU jobs, offering a straightforward pricing structure. A Sr. Director of Catalog Operations at a leading e-commerce company shared their experience:

"Clarifai provides an end-to-end platform with the easiest to use UI and API in the market. They've accelerated our AI development at scale allowing 1,000's of workers to label data and train 100,000's of AI models."

These developer-focused tools play a key role in supporting Platform C's flexible pricing and scalable infrastructure, making it an efficient choice for diverse workloads.

Platform C operates on a pay-per-minute model for dedicated compute, with the Clarifai Reasoning Engine costing as little as $0.16 per 1 million tokens. Features like scale-to-zero automatically shut down idle resources to save costs, while GPU fractioning ensures efficient resource use across multiple tasks. A Director of Product Management at a Fortune 500 retailer highlighted the platform's advantages:

"Clarifai was much easier to use than the trillion dollar companies, and their AI significantly outperformed both the niche players and the big guys in accuracy while having inference speeds 7x faster."

For industries with strict regulatory requirements, Platform C offers air-gapped deployments and private data planes to safeguard sensitive information. Deployment options include VPC, on-premise, hybrid, and edge environments, all supported by centralized monitoring and auditing via the unified Control Center. This setup ensures compliance with data residency rules while maintaining operational efficiency, showcasing how adaptable deployment models can meet the needs of diverse enterprise environments.

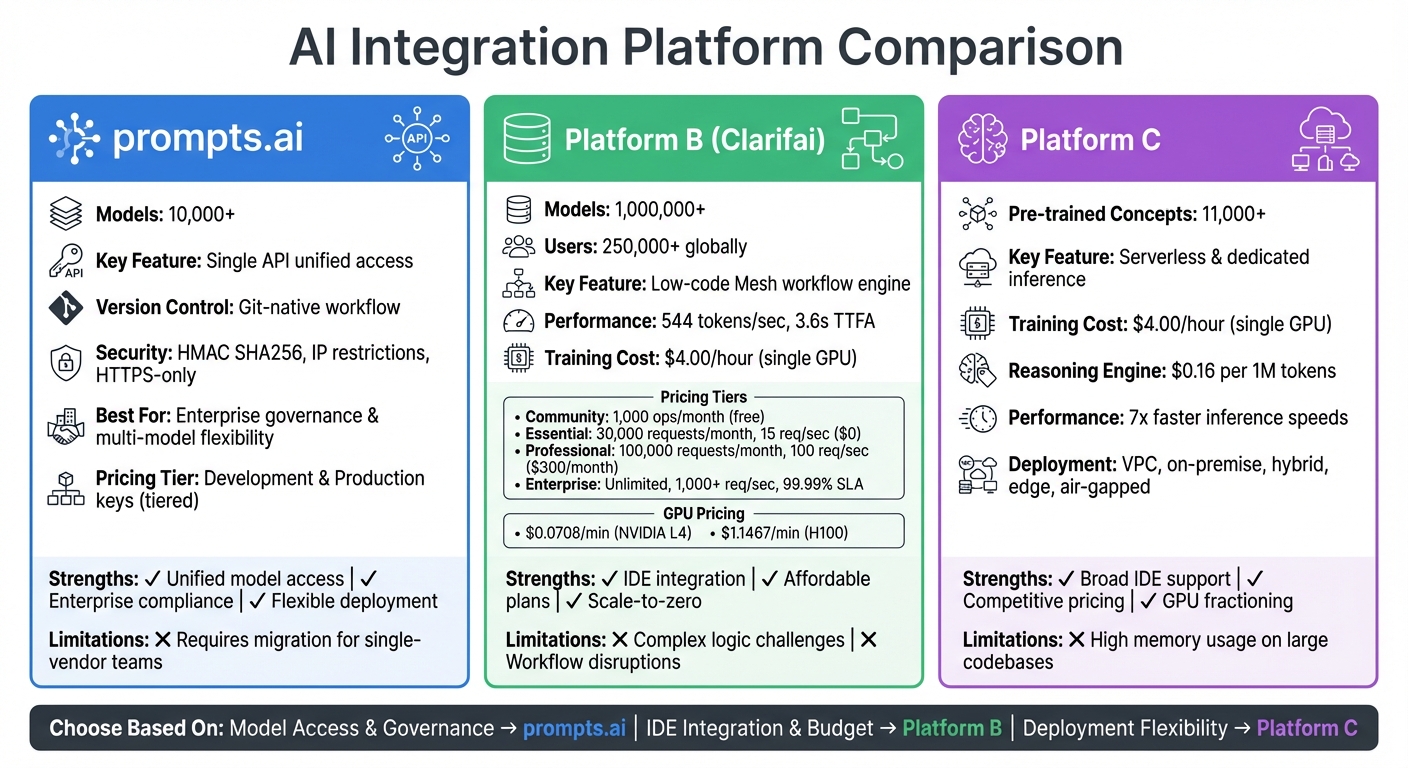

AI Integration Platform Comparison: Features, Pricing and Capabilities

Each solution brings distinct advantages and challenges, catering to various developer needs and priorities.

prompts.ai simplifies workflows by offering unified access to over 10,000 models through a single, secure interface. It ensures enterprise-level governance with audit trails and provides flexibility in deployment to suit different operational setups. However, teams already committed to single-vendor workflows may need to undergo an initial migration process, which could require some adjustments.

Platform B integrates seamlessly with popular IDEs, offering context-aware suggestions that improve efficiency for routine coding tasks. Its free tier and affordable $19/month professional plan make it a strong choice for individual developers and smaller teams. That said, when working with complex logic, the platform may need more precise guidance, potentially disrupting workflows.

Platform C stands out with its broad IDE compatibility, supporting environments like Eclipse, Android Studio, and Sublime, which appeals to developers using less common editors. It also offers competitive pricing, including a free plan, making it accessible to teams of varying sizes. However, its high memory usage on large codebases can slow down performance during demanding development tasks.

| Platform | Key Strengths | Key Limitations |
|---|---|---|
| prompts.ai | Unified access to 10,000+ models; strong enterprise compliance; flexible deployment | Requires migration for teams using single-vendor workflows |
| Platform B | Seamless IDE integration; free and affordable plans | Struggles with complex logic; potential workflow disruptions |
| Platform C | Extensive IDE support, including niche editors; competitive pricing | High memory consumption impacts performance on large projects |

Choosing the right platform depends on your specific needs: whether you prioritize model access and enterprise-level features (prompts.ai), smooth IDE integration for everyday tasks (Platform B), or compatibility with diverse development environments (Platform C). These distinctions help clarify which solution aligns best with your goals.

When deciding on an AI platform, it’s crucial to weigh your priorities against each option’s strengths in model access, deployment flexibility, and security. If your focus is on accessing over 35 leading models within a secure, enterprise-ready framework, prompts.ai offers a unified solution with enterprise-grade governance, audit trails, and versatile deployment options. Teams juggling multiple large language models will benefit from its streamlined interface, which reduces integration complexities while supporting cost management and robust security practices.

For developers seeking smooth IDE integration and budget-conscious pricing, Platform B provides context-aware suggestions but may require additional effort for handling complex logic. Meanwhile, Platform C stands out for its compatibility with niche development environments, offering a variety of integrations at competitive pricing.

With recent reductions in AI API costs, premium platforms are now more accessible than ever. For production-grade AI solutions, consider platforms that manage OAuth flows and authentication infrastructure, as these foundational tasks can otherwise take weeks of development. For rapid prototyping, platforms that provide access to multiple models through a single API key can significantly speed up experimentation.

Security remains a non-negotiable factor in platform selection. Enterprise teams should prioritize SOC 2 compliance, token isolation, and audit capabilities to ensure data integrity, while startups and individual developers can explore free tiers to test workflows before scaling. The ideal platform aligns deployment flexibility, model access, and governance features with both your immediate needs and long-term goals.

Ultimately, the right platform is one that minimizes integration work while providing scalable infrastructure, efficient resource management, and strong security.

To connect with over 10,000 AI models using just one API, consider platforms that bring together access to multiple providers. These platforms streamline operations by routing all requests through a single endpoint while offering features like load balancing, failover support, and cost management. You can easily switch between models by specifying their names, ensuring smooth and efficient deployment. This method simplifies integration and speeds up development for large-scale AI projects.
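The single-endpoint pattern with failover can be sketched as a small router: callers name a model, and the router tries each registered provider for that model in order until one succeeds. This is a generic illustration, not any platform's API. `ModelRouter`, the provider functions, and the model name `example-model` are all hypothetical.

```python
from typing import Callable

Provider = Callable[[str], str]  # takes a prompt, returns a completion


class ModelRouter:
    """Route requests by model name, falling back to backup providers."""

    def __init__(self) -> None:
        self._routes: dict[str, list[Provider]] = {}

    def register(self, model: str, provider: Provider) -> None:
        # Providers are tried in registration order: primary first.
        self._routes.setdefault(model, []).append(provider)

    def complete(self, model: str, prompt: str) -> str:
        providers = self._routes.get(model)
        if not providers:
            raise KeyError(f"unknown model: {model}")
        errors: list[Exception] = []
        for provider in providers:
            try:
                return provider(prompt)
            except Exception as exc:  # failover: remember the error, try the next
                errors.append(exc)
        raise RuntimeError(f"all providers failed for {model}: {errors}")


def flaky_provider(prompt: str) -> str:
    """Demo stand-in for a provider that is currently down."""
    raise TimeoutError("primary provider timed out")


def echo_provider(prompt: str) -> str:
    """Demo stand-in for a healthy backup provider."""
    return f"echo: {prompt}"


if __name__ == "__main__":
    router = ModelRouter()
    router.register("example-model", flaky_provider)
    router.register("example-model", echo_provider)
    # Switching models is just a different name in the same call.
    print(router.complete("example-model", "hello"))
```

Application code only ever calls `complete(model, prompt)`, so swapping or adding providers does not touch the call sites.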

You can manage and update prompts independently of your application code by leveraging a prompt versioning system. This involves assigning unique IDs or version numbers to each prompt, allowing you to track changes in an organized way. Updated prompts can be accessed by applications through deployment variables or APIs, eliminating the need for full redeployment. Using tools that offer features like version control, rollback capabilities, and environment labeling simplifies the process, ensuring smooth updates and integration without altering the underlying application code.
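The versioning approach described above can be sketched as a registry with immutable versions and movable environment labels: publishing appends a new version, promoting points a label such as `production` at a version, and rolling back is just promoting an older one. The class and method names here are illustrative, not taken from any specific tool.

```python
class PromptRegistry:
    """In-memory prompt store with version numbers and environment labels."""

    def __init__(self) -> None:
        self._versions: dict[str, list[str]] = {}        # prompt_id -> templates
        self._labels: dict[tuple[str, str], int] = {}    # (prompt_id, env) -> version

    def publish(self, prompt_id: str, template: str) -> int:
        """Store a new immutable version and return its 1-based number."""
        versions = self._versions.setdefault(prompt_id, [])
        versions.append(template)
        return len(versions)

    def promote(self, prompt_id: str, environment: str, version: int) -> None:
        """Point an environment label at a version (also used for rollback)."""
        if not 1 <= version <= len(self._versions.get(prompt_id, [])):
            raise ValueError(f"no version {version} for prompt {prompt_id!r}")
        self._labels[(prompt_id, environment)] = version

    def resolve(self, prompt_id: str, environment: str, **variables: str) -> str:
        """Render whichever version the environment currently points at."""
        version = self._labels[(prompt_id, environment)]
        return self._versions[prompt_id][version - 1].format(**variables)


if __name__ == "__main__":
    registry = PromptRegistry()
    v1 = registry.publish("greeting", "Hello, {name}.")
    v2 = registry.publish("greeting", "Hi {name}, how can I help?")
    registry.promote("greeting", "production", v2)
    print(registry.resolve("greeting", "production", name="Ada"))
    registry.promote("greeting", "production", v1)  # rollback, no redeploy
    print(registry.resolve("greeting", "production", name="Ada"))
```

The application only ever asks for `("greeting", "production")`, so changing which version that label points to updates the prompt without a code release.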

To ensure the security of production AI integrations, it's vital to adopt measures like identity-based access control, runtime guardrails, and request monitoring. Incorporating strategies such as zero trust architecture, OAuth2, and secure API exposure can help prevent risks like data leakage, prompt injection, and unauthorized access. Focus on maintaining strong visibility and implementing reliable protections to safeguard sensitive data and uphold system integrity.
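As one concrete piece of identity-based access control, each API key can carry a set of scopes and an optional network allowlist, and every request is checked against both before it reaches a model. The sketch below uses Python's standard `ipaddress` module. The `ApiKey` shape, the scope names, and the rule that an empty allowlist means "no IP restriction" are assumptions for illustration.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class ApiKey:
    key_id: str
    scopes: frozenset[str]                 # e.g. {"read"} for a dashboard key
    allowed_networks: tuple[str, ...] = () # CIDR blocks; empty = unrestricted


def is_allowed(key: ApiKey, required_scope: str, client_ip: str) -> bool:
    """Least-privilege check: the key needs the scope AND an allowed source IP."""
    if required_scope not in key.scopes:
        return False
    if not key.allowed_networks:
        return True
    address = ipaddress.ip_address(client_ip)
    return any(address in ipaddress.ip_network(network)
               for network in key.allowed_networks)


if __name__ == "__main__":
    dashboard_key = ApiKey(
        key_id="dash-1",
        scopes=frozenset({"read"}),
        allowed_networks=("10.0.0.0/8",),
    )
    print(is_allowed(dashboard_key, "read", "10.1.2.3"))     # inside the range
    print(is_allowed(dashboard_key, "write", "10.1.2.3"))    # missing scope
    print(is_allowed(dashboard_key, "read", "203.0.113.9"))  # outside the range
```

A check like this belongs at the API gateway, so that denied requests can also be logged for the request monitoring the paragraph recommends.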