Selecting the right generative AI platform is critical for accuracy, speed, and scalability in today's fast-paced, AI-driven world. With 4 billion daily prompts and 73% of marketing teams leveraging these tools, reliability is a top priority. This article evaluates five leading platforms, focusing on performance, cost, and security to help you make an informed decision.

| Platform | Key Features | Pricing (Starting) | Security Standards |

|---|---|---|---|

| Prompts.ai | 35+ models in one interface, FinOps tools | $0.0005 per 1,000 tokens | SOC 2, GDPR, HIPAA |

| SiliconFlow | 2.3× faster speeds, serverless options | Pay-as-you-go | GDPR, CCPA |

| Hugging Face | 2M+ models, 500K datasets | $0.60/hour (GPU endpoint) | SOC 2, GDPR |

| Firework AI | Trillion-parameter support, BYOC | $0.10 per million tokens | SOC 2, HIPAA |

| Cerebras Systems | Wafer-Scale Engine, ultra-fast speeds | $0.10 per million tokens | SOC 2, GDPR, CCPA |

Each platform offers distinct strengths tailored to different needs. Whether you're optimizing costs, scaling enterprise workloads, or exploring cutting-edge hardware, there's a solution here for you.

Comparison of Top 5 Generative AI Platforms: Features, Pricing, and Performance

Prompts.ai is an enterprise-focused AI orchestration platform that brings together over 35 leading language models - including GPT-5, Claude, LLaMA, and Gemini - into one secure, unified interface. Instead of managing multiple subscriptions and logins, teams can access all major models through a single dashboard, eliminating the hassle of juggling different tools. Designed for developers, marketers, and creative teams, the platform simplifies prompt engineering with pre-built templates and workflows, ensuring reliable outputs without the need to handle separate API keys or billing accounts. Below are key metrics showcasing the platform's reliability, speed, and efficiency.

Prompts.ai prioritizes fast and accurate results, delivering text generation in under two seconds with 85–90% reasoning accuracy through optimized fine-tuning. In creative writing benchmarks, it achieves 92% accuracy for nuanced long-form content. By integrating multiple models, users can compare outputs side-by-side, selecting the best option for tasks like code debugging, SEO-focused blog posts, or crafting product descriptions.

The platform’s cloud-agnostic architecture is built to handle high demand, supporting 1 million token context windows and scaling automatically for over 10,000 concurrent users. For example, a marketing firm scaled from 100 to 5,000 daily content generations without experiencing downtime, leveraging Hugging Face for distributed inference. This adaptability makes Prompts.ai suitable for small teams testing prototypes as well as large enterprises deploying AI across departments globally. Its flexible infrastructure is paired with a pricing model that ensures affordability.

Prompts.ai offers a competitive pricing structure, starting at $0.0005 per 1,000 tokens and including a free tier with 10,000 tokens per month. This results in 40–60% savings for high-volume users. Enterprise plans, priced at $99/month per member, provide unlimited access. The pay-as-you-go TOKN credit system eliminates unnecessary subscription costs, aligning expenses with actual usage. Real-time FinOps dashboards offer detailed tracking of token consumption across teams, ensuring transparency and cost control.

The platform adheres to stringent US data security standards, including SOC 2 Type II, GDPR, and HIPAA compliance. It features end-to-end encryption, private model hosting, and zero-data-retention policies. Role-based access controls and detailed audit logs further enhance security, earning the trust of over 500 US firms in healthcare, finance, and legal sectors. These measures address concerns from the 90% of tech leaders who prioritize AI accuracy and data security, providing the governance framework necessary for enterprise-level deployments.

Prompts.ai supports a wide range of applications. For instance, a US-based e-commerce company used the platform to generate 50,000 product descriptions monthly, reducing creation time by 70% and achieving a 98% customer approval rate. Similarly, a development team integrated the platform with Hugging Face to deploy custom Llama models, achieving results comparable to top open-source solutions in self-hosted environments. From automating 80% of social media tasks within Google Workspace to generating and debugging code snippets, the platform is tailored to meet diverse workflow needs. It also ensures outputs are formatted for US-specific conventions, such as MM/DD/YYYY dates, imperial measurements, and dollar-based pricing.

SiliconFlow is an advanced AI inference platform designed to deliver 2.3× faster inference speeds compared to top cloud providers. By reducing latency by 32% across tasks like text, image, and video processing, it ensures high performance without compromising accuracy. Its compatibility with a range of GPUs, such as NVIDIA H100/H200, AMD MI300, and RTX 4090, empowers businesses to optimize throughput while avoiding vendor restrictions. These features make SiliconFlow a dependable choice for efficient AI operations.

SiliconFlow supports context windows from 4,000 to 64,000 tokens, enabling it to handle everything from long-form document creation to complex multi-turn conversations. The platform grants access to leading open-source models like DeepSeek-V3, Llama 3.3 70B, and Qwen2.5 72B via OpenAI-compatible APIs. For creative tasks, it includes multimodal tools such as text-to-speech (CosyVoice2), image generation (FLUX.1), and video generation (Wan2.2). Developers can fine-tune outputs using adjustable parameters like temperature (0.0–2.0) and top_p, while the stream=true option ensures smooth handling of lengthy processes without timeouts.

This robust performance framework makes scaling straightforward and efficient.

SiliconFlow is built to adapt to varying workload demands effortlessly. It offers serverless inference for flexible scaling and reserved GPU options for critical workloads, with hybrid cloud capabilities (BYOC) allowing organizations in regulated sectors to maintain full control over their data. Deploying custom models is simplified with one-click deployment, removing infrastructure management hurdles. The unified AI gateway with smart routing streamlines access to multiple models, automatically scaling resources during traffic surges without requiring manual adjustments.

SiliconFlow’s pay-as-you-go pricing structure is calculated as (Input Tokens × Input Unit Price) + (Output Tokens × Output Unit Price), ensuring users only pay for what they use. Reserved GPU configurations provide the most economical option for high-volume applications, though they require upfront commitments. This flexible model is ideal for startups experimenting with prototypes and enterprises managing consistent workloads, offering both cost control and predictability.

SiliconFlow prioritizes data security with a zero data retention policy and end-to-end encryption for data at rest and in transit. It ensures isolation across computational, network, and storage layers, earning a 4.9/5 security rating. While its primary legal framework aligns with Singapore's Personal Data Protection Act (PDPA), the platform also supports compliance with global standards like GDPR and CCPA. Users retain full ownership of their data, processed solely based on their instructions, ensuring both privacy and control.

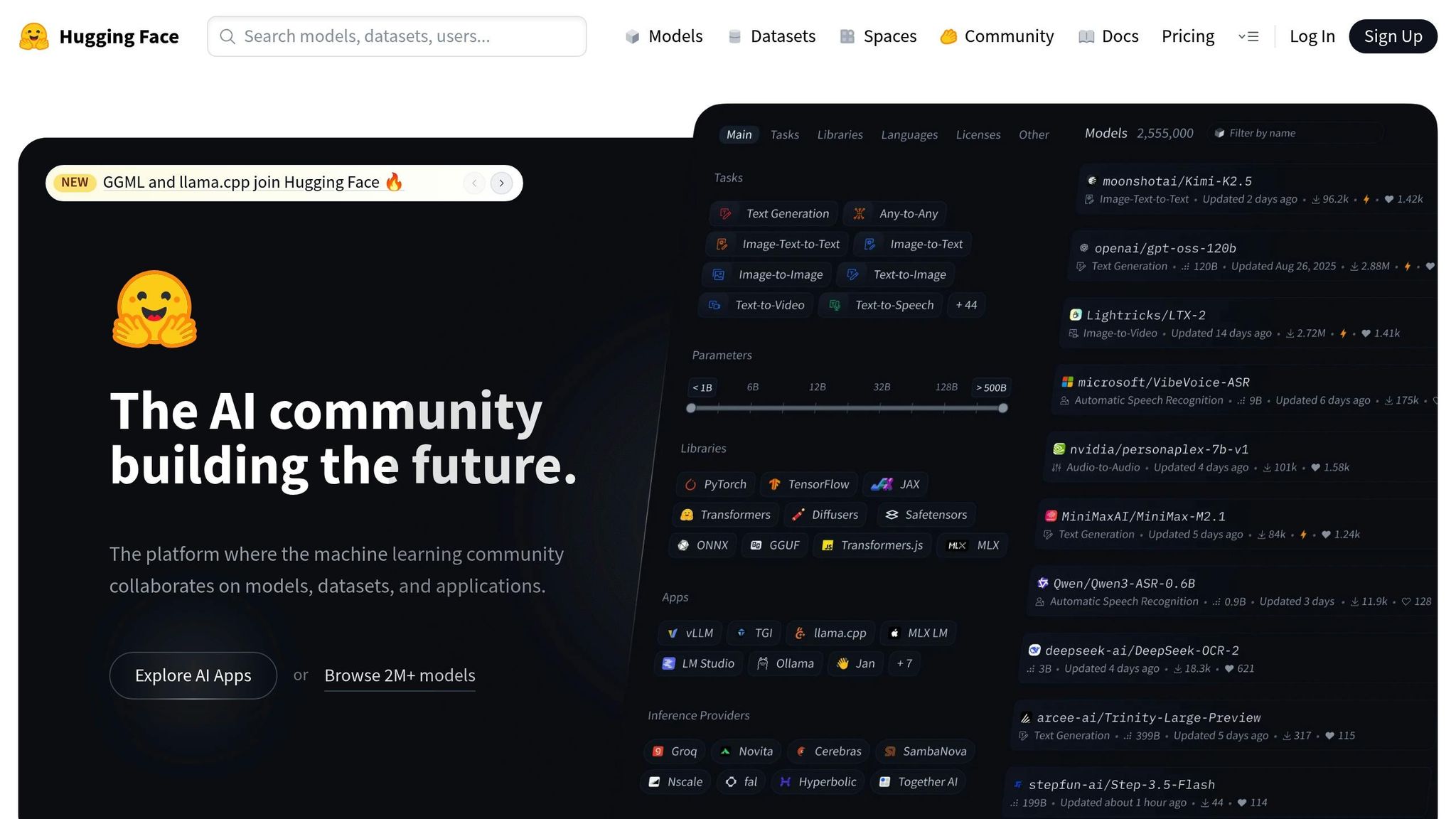

Hugging Face serves as a robust platform, offering access to over 2 million public models, 500,000 datasets, and 1 million AI applications (Spaces). Trusted by major organizations like Meta, Google, Microsoft, and Amazon, it eliminates the need for custom development by providing pre-trained models for text, image, video, audio, and 3D data. This extensive ecosystem supports efficient performance, scalable deployments, and cost-conscious operations.

Hugging Face ensures optimized inference through tools like Text Generation Inference (TGI) and Text Embeddings Inference (TEI), built for speed and efficiency. The platform seamlessly integrates with hardware accelerators such as NVIDIA and AMD GPUs, AWS Trainium/Inferentia, and Google TPUs, ensuring models operate smoothly on advanced hardware. Techniques like 4-bit quantization using bitsandbytes reduce VRAM requirements for a 7B model from 14GB to just 3.5GB, maintaining quality while improving resource efficiency. These tailored optimizations make deployments faster and more effective across diverse infrastructures.

With Inference Endpoints, Hugging Face provides fully managed, production-ready environments for deploying models on dedicated infrastructure, avoiding the challenges of self-hosting. Partnerships with cloud giants like AWS, Azure, and Google Cloud, alongside high-speed inference providers like Groq and Cerebras, enable global scalability with enterprise-grade reliability. A unified API gives developers access to over 15,000 models across various hardware setups, allowing seamless transitions between providers without altering application code. This flexibility supports smooth scaling from initial prototypes to full-scale production.

Hugging Face offers a transparent pay-as-you-go pricing model with no markup over provider rates. Dedicated GPU deployments via Inference Endpoints start at $0.60 per hour, while Inference Providers charge between $0.001 to $0.01 per 1,000 tokens, depending on the model size. Free users receive $0.10 in monthly credits, while PRO and Enterprise users get $2.00. The Enterprise Hub starts at $20 per user per month, including features like SSO, audit logs, and priority support. This pricing model accommodates both startups exploring new ideas and enterprises managing large-scale, long-term operations.

Hugging Face prioritizes security with SOC2 Type 2 certification, ensuring robust safeguards and continuous monitoring. The platform does not store customer payloads or tokens sent to Inference Endpoints, and debugging logs are kept for only 30 days. Security measures include malware detection (ClamAV), pickle scanning to prevent unsafe deserialization, and secrets scanning to identify leaked credentials. Access management features include Multi-Factor Authentication (MFA), Single Sign-On (SSO), and Role-Based Access Control (RBAC). For sensitive workloads, AWS Private Link allows private access to Inference Endpoints without internet exposure. All data in transit is encrypted with TLS/SSL, and the Enterprise Plan offers BAAs for HIPAA compliance. These measures reinforce trust and reliability.

Hugging Face supports a wide range of applications beyond text processing. Libraries like Transformers handle NLP tasks, Diffusers enable creative image, video, and audio generation, and timm focuses on computer vision. The platform supports models for Text-to-Video, Image-to-Text, Text-to-Speech, Automatic Speech Recognition, and Feature Extraction, all accessible through a unified interface. With datasets available in over 8,000 languages, it caters to global needs. The "Spaces" feature hosts over 1 million demo applications, allowing developers to showcase machine learning projects using tools like Gradio and Streamlit, fostering rapid prototyping and team collaboration.

Firework AI stands out as a platform built to meet the highest standards of trust and reliability. It delivers fast, scalable solutions with enterprise-level performance, powered by a proprietary inference engine optimized for open-source models. Supporting over 100 models across text, vision, audio, and image modalities - including Llama 3, Qwen3, Deepseek, and GLM families - the platform employs advanced techniques like quantization-aware tuning, reinforcement learning, and adaptive speculation to enhance both speed and quality. With a globally distributed infrastructure running on cutting-edge hardware such as NVIDIA H100, H200, B200, and AMD MI300X GPUs, Firework AI also offers serverless deployment options that eliminate cold starts.

The platform’s speed advantages are evident in real-world applications. For instance, in 2024, Notion reduced latency from around 2 seconds to just 350 milliseconds by fine-tuning models on Firework AI. This improvement allowed them to scale AI features to over 100 million users.

"By partnering with Firework AI to fine-tune models, we reduced latency from about 2 seconds to 350 milliseconds... That improvement is a game changer for enterprise AI." - Sarah Sachs, AI Lead at Notion

Quora saw a threefold increase in response speed after migrating models like SDXL, Llama, and Mistral, which significantly boosted user engagement. Similarly, Sualeh Asif, CPO at Cursor, noted that the platform maintained high performance with minimal quality loss even after quantization. These advancements integrate seamlessly into a robust scaling framework.

Firework AI is designed to support businesses of all sizes with three flexible deployment tiers:

An example of its scalability is Sentient, which managed a waitlist of 1.8 million users within 24 hours by maintaining sub-2-second latency across complex workflows. The platform also ensures seamless operations with automatic failover, load balancing, and auto-scaling, backed by a transparent pricing structure.

Firework AI employs a pay-per-token pricing model for serverless deployments:

On-demand GPU pricing starts at $2.90 per hour for NVIDIA A100, $4.00 per hour for H100, and $9.00 per hour for B200 instances. For non-real-time tasks, the Batch API offers a 50% cost reduction, and cached input tokens also receive a 50% discount for most text and vision models. Specific rates include $0.04 per image for FLUX.1 Kontext Pro and $0.0015 per minute for Whisper V3 Large audio processing.

Firework AI prioritizes security with SOC 2 Type II certification and HIPAA compliance for healthcare organizations. Its default Zero Data Retention (ZDR) policy ensures that prompt and generation data exist only in volatile memory during processing unless customers opt in otherwise. For added governance, the Bring Your Own Bucket (BYOB) feature allows integration with Google Cloud Storage or AWS S3. Additional safeguards include workload isolation, fine-grained Identity & Access Management (IAM), regular penetration testing, and formal incident response plans. The platform is also working toward ISO certifications (27001, 27701, and 42001) as of February 2026.

Firework AI supports a wide array of applications, from IDE copilots and customer support bots to multi-step reasoning agents and retrieval-augmented generation systems. Its fine-tuning capabilities, applicable to models exceeding 1 trillion parameters, allow businesses to customize solutions to their needs. Developers can access the platform through a Python SDK, RESTful API, or OpenAI-compatible tools.

"Firework AI has been a fantastic partner in building AI dev tools at Sourcegraph. Their fast, reliable model inference lets us focus on fine-tuning, AI-powered code search, and deep code context." - Beyang Liu, CTO at Sourcegraph

This adaptability makes Firework AI an excellent choice for everything from quick prototypes to large-scale production deployments across various industries.

Cerebras Systems has carved a niche for itself by focusing on hardware innovation that directly tackles some of the most pressing challenges in generative AI performance. At the heart of its technology is the Wafer-Scale Engine (WSE-3), a massive chip with 4 trillion transistors - offering 57 times the transistor density of leading GPUs. This chip includes 44GB of on-chip SRAM and provides a staggering 21 petabytes per second of memory bandwidth, which is 7,000 times that of an NVIDIA H100. By storing entire models directly on the chip, Cerebras eliminates the traditional bottleneck of transferring data from external memory, a limitation that often slows down GPU-based systems.

Cerebras delivers impressive speeds by keeping models on-chip. For instance, GPT-OSS 120B runs at 3,000 tokens per second, while Llama 3.1 8B achieves 2,200 tokens per second using native 16-bit precision (FP16). This is 20 times faster than GPU-based systems, and unlike competitors that rely on 8-bit quantization to save bandwidth, Cerebras maintains full accuracy with FP16. This approach also uses just one-third of the power compared to DGX solutions.

"By delivering over 2,000 tokens per second for Scout – more than 30 times faster than closed models like ChatGPT or Anthropic, Cerebras is helping developers everywhere to move faster, go deeper, and build better than ever before." - Ahmad Al-Dahle, VP of GenAI, Meta

Its training capabilities are equally noteworthy. A cluster of 2,048 CS-3 systems can train Llama2-70B from scratch in under a day - a process that traditionally takes a month on GPU clusters. Despite doubling the performance of its predecessor, the CS-3 achieves this without increasing power consumption or costs, making it a game-changer for large-scale AI training.

Cerebras allows for scalability on an unprecedented level. Clusters of up to 2,048 CS-3 systems can be connected via the SwarmX interconnect, delivering 256 exaflops of AI compute. Its Weight Streaming Architecture separates parameter storage from compute, enabling multiple systems to function as a unified device. This eliminates the need for manual sharding or parallelism, simplifying the debugging of trillion-parameter models as though they were being run on a single machine.

With MemoryX units, memory can scale independently, ranging from 24TB to 1,200TB, supporting models with up to 24 trillion parameters. The Cerebras Training Cloud offers flexible scaling options, including "Pay Per Hour" for specific training tasks or "Pay Per Model" where Cerebras handles the entire process. By 2025, the platform plans to expand to 8 global data centers, capable of serving over 40 million tokens per second for high-throughput inference workloads.

Cerebras combines its performance with pricing options designed to suit different needs. Inference costs are token-based, with Llama 3.1 8B priced at $0.10 per million tokens (input and output combined). Larger models, such as Qwen 3 235B Instruct, cost $0.60 per million input tokens and $1.20 per million output tokens, while GPT-OSS 120B is priced at $0.35 and $0.75 per million tokens, respectively.

The platform offers three tiers:

For coding-specific applications, Cerebras Code offers two plans:

Cerebras prioritizes security with SOC 2 Type 2, GDPR, and CCPA compliance, making it a trusted choice for regulated industries. The platform enforces a strict zero-reuse policy, ensuring user data, models, and outputs remain private and are never stored or reused. Security measures include mutual TLS (mTLS), OAuth 2.0, and AES-256 encryption for data at rest, along with TLS for data in transit. Services run on AWS infrastructure with strong access controls, multi-factor authentication, and regular penetration testing.

"With Cerebras' inference speed, GSK is developing innovative AI applications, such as intelligent research agents, that will fundamentally improve the productivity of our researchers and drug discovery process." - Kim Branson, SVP of AI and ML, GSK

Cerebras is already trusted by organizations in healthcare, finance, and national research, including GSK, AlphaSense, and Sandia National Laboratories, further demonstrating its reliability and effectiveness.

Cerebras supports a wide variety of applications, from prototyping to large-scale production. Fully compatible with the OpenAI Chat Completions format, developers can switch by simply updating their API keys. It supports leading open-source models like Llama, Mistral, and Jais, as well as custom architectures such as Mixture of Experts (MoE) and multimodal models. The API offers sub-150 millisecond latency, with a goal of under 50 milliseconds, making it ideal for real-time applications like intelligent search engines and agentic workflows.

"At Perplexity, we believe ultra-fast inference speeds like what Cerebras is demonstrating can have a similar unlock for user interaction with the future of search - intelligent answer engines." - Denis Yarats, CTO and co-founder, Perplexity

Cerebras combines high-speed performance, strong security, and simplified distributed computing, making it a versatile choice for industries ranging from drug discovery and financial analysis to conversational AI and code generation.

When comparing platforms, it's clear that each one offers distinct benefits and challenges, making the best choice highly dependent on your specific goals and technical needs.

Prompts.ai excels in simplifying AI workflows by consolidating access to over 35 language models within a single, secure interface. Its real-time FinOps management ensures enterprise-level governance while slashing AI software costs by as much as 98%. This makes it a strong option for organizations prioritizing unified orchestration and cost efficiency.

Hugging Face, on the other hand, shines in its adaptability for research and custom fine-tuning. However, its extensive range of tools and resources can be overwhelming, often requiring significant technical expertise to navigate successfully.

Ultimately, the right platform depends on what matters most to you - whether it’s streamlined orchestration, cost control, raw performance, research capabilities, or smooth integration with your existing tools. Opt for platforms that align with your current productivity setup and offer reliable APIs for structured, high-quality outputs.

"The advantage of having a single control plane is that architecturally, you as a data team aren't paying 50 different vendors for 50 different compute clusters." - Hugo Lu, CEO

To make an informed decision, test at least 20 tasks that mirror your real-world use cases. This will help you assess the platform’s reliability, speed, and return on investment (ROI).

Choosing the right generative AI platform depends on how well its features align with your specific workflow needs. The platforms reviewed here each bring distinct benefits suited to different organizational goals.

Prompts.ai stands out for its ability to streamline AI workflows by integrating access to over 35 language models in one secure platform. Its real-time FinOps tools help enterprises maintain governance while cutting AI software costs by up to 98%. This makes it a strong choice for those focused on cost management and unified orchestration.

SiliconFlow offers 2.3× faster inference speeds and 32% lower latency, along with flexible GPU configurations and hybrid cloud deployment options. It’s an excellent solution for businesses requiring high-performance processing for text, image, and video tasks.

Hugging Face provides access to an extensive library of over 2 million models and 500,000 datasets, making it a go-to option for research, custom fine-tuning, and prototyping in a wide range of AI applications.

Firework AI combines speed and reliability with its proprietary inference engine, supporting more than 100 models. It also offers flexible deployment options, including serverless and bring-your-own-cloud setups, catering to a variety of enterprise needs.

Cerebras Systems uses its Wafer-Scale Engine to achieve performance up to 20 times faster than GPU-based systems. It supports models with up to 24 trillion parameters while maintaining full precision, making it ideal for organizations handling large-scale, complex AI tasks.

"The best generative AI tools aren't necessarily the most advanced but the ones that integrate seamlessly into your specific creative workflow." - fal.ai

Before committing to a platform, test it against your real-world use cases to assess reliability, output quality, and speed. Begin with free tiers to gauge performance and ensure compatibility with your existing tech stack. Matching the platform to your workflow is key to unlocking its full potential for your AI-driven projects.

To begin, pinpoint your main objectives - whether it’s content creation, automation, or data analysis. When assessing platforms, pay close attention to security, scalability, and how well they integrate with tools such as Slack or Salesforce. Give priority to features like data privacy, compliance, and the quality of results across various tasks. Choose platforms that meet your technical needs and align with your business goals to ensure dependable and efficient operations.

To ensure sensitive information is well-protected and user privacy is upheld when using Generative AI, the platform must include strong security features. These should cover:

These safeguards work together to create a secure environment for handling private information.

To manage token costs effectively, start by auditing your current usage. Monitor API responses to identify hidden expenses such as system prompts or stored conversation history. Utilize cost calculators to estimate expenses, factoring in request volume, tokens per request, and model pricing. To cut costs, opt for smaller models for straightforward tasks, enable prompt caching, and refine prompt length and structure. Make it a habit to regularly review and adjust your usage to keep expenses under control.